|

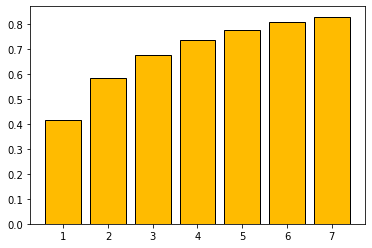

Probability mass function

| |||

|

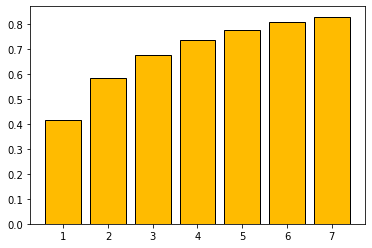

Cumulative distribution function

| |||

| Parameters | (none) | ||

|---|---|---|---|

| Support | |||

| PMF | |||

| CDF | |||

| Mean | |||

| Median | |||

| Mode | |||

| Variance | |||

| Skewness | (not defined) | ||

| Excess kurtosis | (not defined) | ||

| Entropy | 3.432527514776... [1] [2] [3] | ||

In mathematics, the Gauss–Kuzmin distribution is a discrete probability distribution that arises as the limit probability distribution of the coefficients in the continued fraction expansion of a random variable uniformly distributed in (0, 1). [4] The distribution is named after Carl Friedrich Gauss, who derived it around 1800, [5] and Rodion Kuzmin, who gave a bound on the rate of convergence in 1928. [6] [7] It is given by the probability mass function

$$p(k) = -\log_2\left(1 - \frac{1}{(k+1)^2}\right), \qquad k = 1, 2, \ldots$$
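The probability mass function $p(k) = -\log_2\left(1 - 1/(k+1)^2\right)$ can be evaluated directly. The following is a minimal sketch; the function names `gauss_kuzmin_pmf` and `gauss_kuzmin_cdf` are illustrative and not taken from any library. The closed form used for the cumulative distribution function follows from the telescoping product $\prod_{j=1}^{k} \frac{j(j+2)}{(j+1)^2} = \frac{k+2}{2(k+1)}$.

```python
import math


def gauss_kuzmin_pmf(k: int) -> float:
    """P(K = k) = -log2(1 - 1/(k+1)^2) for k = 1, 2, ..."""
    if k < 1:
        raise ValueError("k must be a positive integer")
    return -math.log2(1.0 - 1.0 / (k + 1) ** 2)


def gauss_kuzmin_cdf(k: int) -> float:
    """P(K <= k) = 1 - log2((k+2)/(k+1)), obtained by telescoping the PMF."""
    if k < 1:
        return 0.0
    return 1.0 - math.log2((k + 2) / (k + 1))


if __name__ == "__main__":
    # Print the first few probabilities next to the running total.
    for k in range(1, 6):
        print(k, round(gauss_kuzmin_pmf(k), 4), round(gauss_kuzmin_cdf(k), 4))
```

Since $p(1) = \log_2(4/3) \approx 0.415$ is the largest value, the mode is 1. The cumulative distribution first exceeds 1/2 at $k = 2$, which gives the median. Because $p(k)$ decays like $1/(k^2 \ln 2)$, the series $\sum_k k\,p(k)$ diverges, so the mean is infinite.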

Gauss–Kuzmin theorem

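Let

$$x = \cfrac{1}{k_1 + \cfrac{1}{k_2 + \cdots}}$$

be the continued fraction expansion of a random number x uniformly distributed in (0, 1). Then

$$\lim_{n \to \infty} \mathbb{P}\{k_n = k\} = -\log_2\left(1 - \frac{1}{(k+1)^2}\right).$$

Equivalently, let

$$x_n = \cfrac{1}{k_{n+1} + \cfrac{1}{k_{n+2} + \cdots}};$$

then

$$\Delta_n(s) = \mathbb{P}\{x_n \le s\} - \log_2(1 + s)$$

tends to zero as n tends to infinity.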
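The limit statement can be checked empirically. The sketch below is an illustration under stated assumptions, not a proof: it approximates a uniform random number in (0, 1) by an exact rational with a 256-bit denominator, so that the first few coefficients are computed without floating-point error, and tallies the n-th coefficient over many samples. The helper names `cf_coefficients` and `empirical_frequencies`, and the default values for `bits` and `seed`, are hypothetical choices.

```python
import random
from collections import Counter
from fractions import Fraction


def cf_coefficients(x: Fraction, n: int) -> list:
    """Return up to n continued fraction coefficients k_1, k_2, ... of x in (0, 1).

    The list is shorter than n if the (finite) expansion of the rational x ends early.
    """
    coeffs = []
    while len(coeffs) < n and x != 0:
        x = 1 / x                           # invert the fractional part
        k = x.numerator // x.denominator    # integer part is the next coefficient
        coeffs.append(k)
        x -= k                              # keep the fractional part
    return coeffs


def empirical_frequencies(n: int, samples: int, bits: int = 256, seed: int = 0) -> Counter:
    """Count the values taken by the n-th coefficient over random rationals in (0, 1)."""
    rng = random.Random(seed)
    denom = 1 << bits
    counts = Counter()
    for _ in range(samples):
        x = Fraction(rng.randrange(1, denom), denom)
        coeffs = cf_coefficients(x, n)
        if len(coeffs) == n:                # skip the rare expansions that end early
            counts[coeffs[-1]] += 1
    return counts


if __name__ == "__main__":
    counts = empirical_frequencies(n=5, samples=20000)
    total = sum(counts.values())
    for k in range(1, 6):
        print(k, counts[k] / total)
```

For moderate n the printed frequencies should lie close to $-\log_2\left(1 - 1/(k+1)^2\right)$, up to sampling noise of order $1/\sqrt{\text{samples}}$.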

Rate of convergence

In 1928, Kuzmin gave the bound

$$|\Delta_n(s)| \le C \exp(-\alpha \sqrt{n}).$$

In 1929, Paul Lévy [8] improved it to

$$|\Delta_n(s)| \le C \, 0.7^n.$$

Later, Eduard Wirsing showed [9] that, for λ = 0.30366... (the Gauss–Kuzmin–Wirsing constant), the limit

$$\Psi(s) = \lim_{n \to \infty} \frac{\Delta_n(s)}{(-\lambda)^n}$$

exists for every s in [0, 1], and the function Ψ(s) is analytic and satisfies Ψ(0) = Ψ(1) = 0. Further bounds were proved by K. I. Babenko. [10]

References

1. Blachman, N. (1984). "The continued fraction as an information source (Corresp.)". IEEE Transactions on Information Theory. 30 (4): 671–674. doi:10.1109/TIT.1984.1056924.

2. Kornerup, Peter; Matula, David W. (July 1995). "LCF: A Lexicographic Binary Representation of the Rationals". J.UCS the Journal of Universal Computer Science. Vol. 1. pp. 484–503. CiteSeerX 10.1.1.108.5117. doi:10.1007/978-3-642-80350-5_41. ISBN 978-3-642-80352-9.

3. Vepstas, L. (2008), Entropy of Continued Fractions (Gauss-Kuzmin Entropy) (PDF)

4. Weisstein, Eric W. "Gauss–Kuzmin Distribution". MathWorld.

5. Gauss, Johann Carl Friedrich. Werke Sammlung. Vol. 10/1. pp. 552–556.

6. Kuzmin, R. O. (1928). "On a problem of Gauss". Dokl. Akad. Nauk SSSR: 375–380.

7. Kuzmin, R. O. (1932). "On a problem of Gauss". Atti del Congresso Internazionale dei Matematici, Bologna. 6: 83–89.

8. Lévy, P. (1929). "Sur les lois de probabilité dont dépendant les quotients complets et incomplets d'une fraction continue". Bulletin de la Société Mathématique de France. 57: 178–194. doi:10.24033/bsmf.1150. JFM 55.0916.02.

9. Wirsing, E. (1974). "On the theorem of Gauss–Kusmin–Lévy and a Frobenius-type theorem for function spaces". Acta Arithmetica. 24 (5): 507–528. doi:10.4064/aa-24-5-507-528.

10. Babenko, K. I. (1978). "On a problem of Gauss". Soviet Math. Dokl. 19: 136–140.
