Openness versus quality: why we're doing it wrong, and how to fix it

The views expressed in this opinion piece are those of the author only. Responses and critical commentary are invited in the comments section. The Signpost welcomes proposals for op-eds. If you have one in mind, please leave a message at the opinion desk.

Wikipedia's motto, from its very inception in 2001, has been "The free encyclopedia that anyone can edit". This emphasizes the openness of the project, which stands today as the greatest example of crowdsourcing on the Internet. With 3.8 million articles, the English Wikipedia alone stands as the largest encyclopedia ever. Yet despite our success, trouble is looming on the horizon. Wikipedia's model, though highly successful thus far, creates an intrinsic conflict between openness, allowing the greatest number of people to edit, and quality, aspiring to clear language and the highest standards of accuracy. Which is more important? In making Wikipedia more open, you risk ending up with poor information, poor writing, and rampant vandalism – turning the project into a big joke. In becoming more restrictive, you gain respect and accuracy but risk alienating users through complex policies, guidelines, and policy creep, which inevitably leads to editor fall-off.

I penned an essay on my experiences with Wikipedia back in December 2009. There I reflected on my early fascination with the project, my experiences with its mechanism, and how editors fit into the overall model of the project. At the time I was optimistic about the future of this project, saying, for instance, that "time in itself solves all problems".

Now, two years later, I'm not so sure. Editor retention is a worrisome topic, and one that has been at the center of the Wikimedia Foundation's Strategy Initiative since 2009. The difference between the Foundation's numerical goals through 2015 and what has actually happened is quite stark, and earlier this month the Foundation's Director, Sue Gardner, brought the issue firmly into the limelight again in her presentation at Wikimedia UK (see the video).

What happened?

Let's take this statement as an axiom: Discussions on Wikipedia naturally lean towards stricter standards.

There are several reasons for this assertion. First is the growth of Wikipedia itself. In earlier times, editors were more concerned with plugging content holes and filling out red links than with specific, focused, well-cited, quality writing. Instances in which such quality was achieved were cataloged in BrilliantProse (note the name), an early version of today's Featured articles. As Wikipedia evolved, there were fewer content holes to fill, and editors began intensively improving articles. Processed by growth and parsed through instruction creep, BrilliantProse eventually became the featured articles we know today, in which high-quality prose is only one of ten criteria.

Second, we're self-conscious about how Wikipedia is perceived by the wider world. Regular editors spend many an hour laboring at prominent articles read by thousands of people every day, but find that outside Wikipedia their work is viewed as unusual. Some are even ridiculed by their peers, who perceive Wikipedia to be unreliable and poorly written. What are these editors to do? They return to their desks vowing to do better, and become protective of article quality. In discussions, some of these editors express the view that one good article is better than several rather poor ones. After all, we humans are social creatures; we seek to improve ourselves by improving our standing, and the standing of our work, in the view of other people. By writing better we hope to improve the public, outside perception of our work.

Third, it's very easy to increase standards incrementally because the repercussions for doing so take a while to appear. If you increase the quality requirements for a particular process, drama fails to occur. The standard becomes a little harder to reach, but the process generates better results. Examine any process following a major standards discussion and you'll see that the numbers will shift little in the short term. Short-term thinking, driven by idealization and natural growth, is at play here, as everyone accepts the current standards and interprets a bump in the upwards direction as a strictly positive thing. New editors have neither the credence nor the awareness to contribute to these kinds of discussions, thus involved parties tend to be veteran editors already familiar and comfortable with the standard.

Now let's look at the graph above, which shows that the active editing population hasn't grown, but has slowly dropped since 2007! Even more telling of this decline is the lack of activity in one of the most vital and most fluctuation-prone areas of Wikipedia: RfA. In November 2011 only two promotions took place; compare this with November 2006, for instance, when 33 candidates were promoted.

It's easy to miss, but every bump in quality we make is damaging to the new editor population. I like to think of Wikipedia as a tree, and editing as a ladder. You climb the ladder to reach different fruits ("articles"). Each time you make a process a tiny bit harder, you move the fruit a step higher up and add one more step to that ladder. That may not seem like such a big deal, but if you apply quality creep and repeat this process 10 times, you get one very daunting ladder, a ladder of such height that a new editor might not even bother to climb it. The "low-hanging fruit" that should, in theory, account for this does not exist. The lowest prestigious piece of work a writer can achieve is a good article, and that too is daunting for a new editor inexperienced with Wikipedia's style and formatting. This, in a nutshell, is why Wikipedia is looking ill.

The Wikimedia Foundation, however, has been targeting usability as the core of our troubles. To this effect, they've redesigned the editing layout, softened the Wikipedia logo, introduced WikiLove, and done a host of other things to make Wikipedia a more comfortable place. I agree that the Wikipedia interface should be friendlier, and in key ways it's in a far better state today (I never liked Monobook, and a new WYSIWYG interface would be much easier to use). In my mind, though, the initiative has missed the point: thus far the Foundation has failed to address why people have been leaving, only giving them a more comfortable place to sit in during their stay.

But how do we fix it?

So you've read my rant and found yourself nodding at every sentence. Or you're completely opposed to everything I said and are already formulating an equally long rant on the talk page about why I'm completely and utterly wrong. Regardless of your take, we need to think about this: how can we kick Wikipedia back into the era of good feeling that my magical "five years ago" represents? Well, here's the kicker: we don't.

We've had a lot of time to develop and mature, and you may notice that in discussing why editor retention is falling, I never once explained why it is a bad thing—it really isn't. Now don't get me wrong, a growing population of active editors is always a good thing; but there's a limit to how far we can go, and in my mind, we've already passed it. The reason Wikipedia had such a rapidly expanding population in the first place was because we had so many content holes, we needed every hand available to keep our leaky boat afloat, and once our basis was established, there were plenty of idle hands eager to help. With the HMS Wikipedia now seaworthy, there is more quality content to write. With a limited pool of people with enough ability, interest, drive, and spare time to contribute such writing, a saturation limit develops, past which contributors are harder to find. And as standards continue climbing, this pool continues to shrink.

The Wikimedia Foundation should accept that there's a limit to how active a community can be, and that we have already passed that limit and moved well beyond it. Openness and quality are a very real dichotomy, and one that has been around longer than the Foundation has, starting with the bitter split between Jimbo Wales and Larry Sanger. Although we can try various gimmicks to increase our credence among potential contributors, nothing short of creating a culture of forgiveness would put contributions back on the rise; and although Sue Gardner has often stressed the importance of not biting the newcomers, in a public system that sees a good deal of vandalism alongside legitimate contributions, a hard line is needed to keep out the trolls. A Wikipedia in which poor edits are met with a shower of encouragement is an unmaintainable system. If editors have the will needed to maintain a presence in the system, they will appreciate real feedback more than petty encouragement.

The simplest thing you can do to reverse editor loss is this: whenever you come across a discussion on increasing the standards for a particular process, remember what it could mean for new editors, and pitch in by suggesting what it could mean for potential editors in the future. This would serve to remind people about the possible long-term consequences of their actions, regardless of immediate justifications.


Psychiatrists: Wikipedia better than Britannica; spell-checking Wikipedia; Wikipedians smart but fun; structured biological data

Mental health information on Wikipedia more accurate than Britannica and Kaplan & Sadock psychiatry textbook

The study focused on ten mental health topics (e.g. "antidepressants and suicide in young people" or "side-effects of antipsychotics"), five each in the areas of depression and schizophrenia. "Using the topic terms (or synonyms) as key words for the searches or through manual browsing, content relating to these topics was extracted from [Wikipedia and 13 other websites selected for prominent Google results for depression and schizophrenia] and from the most recent edition of Kaplan & Sadock’s Comprehensive Textbook of Psychiatry ... and the online version of Encyclopaedia Britannica" by two reviewers. For both depression and schizophrenia, three psychologists with clinical and research expertise in that area evaluated these extracts on accuracy, up-to-dateness, breadth of coverage, referencing and readability, on a scale from 1 to 5 ("e.g. Accuracy: 1 = many errors of fact or unsubstantiated opinions, 3=some errors of fact or unsubstantiated opinions, 5 = all information factually accurate"). As in an earlier study of the quality of health information on Wikipedia (Signpost coverage: "Wikipedia's cancer coverage is reliable and thorough, but not very readable"), readability was also measured using a Flesch–Kincaid readability test, which is calculated from word and sentence lengths.

For both depression and schizophrenia, Wikipedia scored highest in the accuracy, up-to-dateness, and references categories – surpassing all other resources, including WebMD, NIMH, the Mayo Clinic and Britannica online. In breadth of coverage, it was behind Kaplan & Sadock and others for both areas. And "of the online resources, Wikipedia was rated the least readable [by the human reviewers], although some of its topics received an average rating." Likewise, the Wikipedia content had relatively high Flesch–Kincaid Grade Level indices (around 16 for schizophrenia and 15 for depression – indicating that a tertiary level of education is necessary to understand the content), similar to that of Britannica but higher than most other resources examined.
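
For readers unfamiliar with the measure, the Flesch–Kincaid Grade Level combines average sentence length with average syllables per word. The sketch below uses the standard published coefficients; the syllable counter is a crude vowel-group heuristic assumed purely for illustration, and none of this is the tool the study's authors actually used.

```python
import re

def count_syllables(word: str) -> int:
    """Rough heuristic (an assumption, not a dictionary lookup): count vowel groups."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))

def flesch_kincaid_grade(text: str) -> float:
    """Grade level = 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return 0.39 * len(words) / len(sentences) + 11.8 * syllables / len(words) - 15.59
```

Short sentences built from one-syllable words score very low (even below zero), while long sentences full of polysyllabic clinical terms push the index towards the mid-teens reported above.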

The authors note that their "findings largely parallel those of other recent studies of the quality of health information on Wikipedia" (citing eight such studies published between 2007 and 2010):

- "Despite variability in the methodologies and conclusions of these studies, the overall implication is that Wikipedia articles on health topics typically contain relatively few factual errors, although they may lack breadth of coverage. ... Given the number of patients, would-be patients and concerned others using the internet to search for information on health issues, it seems that Wikipedia is an appropriate recommendation as an information source."

Psychologists gauge impact of Wikipedia's Rorschach test coverage

A paper in the Journal of Personality Assessment [2] tried to assess the impact of the Wikipedia article Rorschach test on psychologists' use of that test. As summarized by the authors, "In the summer of 2009, an emergency room physician [User:Jmh649 – James Heilman, MD] posted images of all 10 Rorschach inkblots on ... Wikipedia. The images were accompanied by descriptions of “common responses” to each blot. ... a fierce debate ensued between some psychologists who claimed that posting the inkblots is a threat to test security and other individuals, including some psychologists and other mental health professionals, who argued that all information should be freely available, including full details of the Rorschach". (In fact, the debates on whether to display versions of the inkblots in the article go back to at least 2005, at first accompanied by rather spurious copyright claims – Rorschach died in 1922.) The authors note that the inkblots had already been revealed to the general public in a 1980s book and cite an earlier study [3] that had found "particularly damaging information" about personality assessment tests on the Internet as early as 2000, "including examples of test stimuli from... the Rorschach" (presumably including this site). Still, "Internet coverage of the Rorschach appeared to grow exponentially during" the 2009 debate about the Wikipedia article, which made it to the front page of the New York Times (Signpost coverage: "Rorschach test dispute reported").

The first part of the study examined the top 50 Google search results for "Rorschach" (excluding "watchmen" to filter out results about a comic book and film) and "inkblot test", coding them into four levels representing the "threat each site presents to test security and the extent to which the content of the site might aid an individual in dissimulating on the Rorschach". 44% of the sites were classified as Level 0 ("no threat"), e.g. the home pages of bands with "Rorschach" in their name, and 15% as Level 1 ("minimal threat"). The 22% of sites at Level 2 ("indirect threat"), which "tended to discuss test procedures more explicitly", apparently included "several 'official' Rorschach Web sites, where one is able to register for Continuing Education Rorschach workshops, [and which] also allow visitors to purchase materials that contain sensitive test information. For example, certain training Web sites allow individuals to purchase training texts and instructional media without requiring a license or other professional credentials". The authors find it "disturbing" that many sites in this threat category "were authored by psychologists". 19% of the sites were classified as the highest level, "direct threat", e.g. many that contained depictions of one or more Rorschach inkblots, or specific information about how responses are interpreted. Together with results about the high percentage of Internet users consulting Wikipedia for health information (36% in the US in 2007 according to Pew research), the authors conclude that "we can no longer presume that examinees have not been exposed to this information prior to an assessment".

The second part of the study likewise starts out with a Google News search for "Rorschach" and "Wikipedia", noting that "of the 25 news stories reviewed, 13 included one or more of the Rorschach inkblots, with Card I as the most frequently displayed", and eventually arriving at five media stories about the controversy which allowed readers' comments ([1], [2], [3], [4], [5]). The 520 comments posted on these stories in total were "coded according to the opinion expressed by the writer regarding each of the following categories: (a) the field of psychology, (b) psychologists, and (c) the Rorschach." While the vast majority did not state a clear opinion on the first two categories, the authors note that "Of those comments that did express an opinion toward psychologists [ca. 16%] most were overwhelmingly negative." Many more of the commenters on the Wikipedia/Rorschach news stories expressed an opinion about the test itself: "In total, 182 (35%) of comments were classified as unfavorable toward the Rorschach, whereas only 55 (11%) were coded as favorable toward the Rorschach. The remaining 283 (54%) of comments were categorized as neutral or not mentioned." Among those who identified as mental health professionals, 61% expressed a favorable opinion about the test and 15% a negative one.

Asked for his comment on the paper, Heilman said: "My main criticism of their paper is that they seem to take as axiomatic that exposure to these images hurts test reliability without any real evidence to back it up. Otherwise it is an interesting piece." (The paper includes a section reviewing literature on "the impact of 'coaching' on psychological tests"; however, it does not mention results pertaining specifically to the Rorschach test, and mostly concerns subjects who deliberately try to "cheat" on such tests, rather than those who have accidentally been exposed to a test's material before.)

Spell-checking the English Wikipedia

University of Nebraska-Lincoln MBA candidate Jon Stacey reports on the results of a proof-of-concept tool to measure the rate of misspelled words in the English Wikipedia over time. [4] [5] A text parser (code available for download) was applied to a random sample of 2,400 articles. Instead of considering the latest revision, a random revision from the history of each article was used. The final corpus was obtained by stripping markup and non-ASCII characters as well as article sections such as the references and table of contents. Words were matched against a dictionary obtained by manually combining 12dicts and SCOWL (source) with Wiktionary.

The results show that the percentage of misspellings has been growing steadily, reaching 6.23% for revisions created in 2011. Several weaknesses with the method are discussed, including the lack of Unicode support, the high rate of false positives, and the possibility that the rising rate might be associated with a rise in the complexity of content. The concluding remarks speculate on how semi-automated spell-checking may support editorial work at a large scale. (Wikipedians have used lists of common misspellings for many years, also integrated in semi-automatic editing tools such as AutoWikiBrowser.)
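
The general shape of such a measurement can be sketched in a few lines. This is not Stacey's parser: the regular expressions, the function names and the tiny dictionary in the usage note are all assumptions made for illustration, and real wikitext would need a proper parser.

```python
import re

def strip_markup(wikitext: str) -> str:
    """Very rough wikitext cleanup (illustrative only)."""
    text = re.sub(r"<ref[^>]*>.*?</ref>", " ", wikitext, flags=re.DOTALL)  # inline references
    text = re.sub(r"\{\{[^{}]*\}\}", " ", text)                      # simple, non-nested templates
    text = re.sub(r"\[\[(?:[^|\]]*\|)?([^\]]*)\]\]", r"\1", text)    # [[target|label]] -> label
    text = re.sub(r"'{2,}", "", text)                                # bold/italic quote marks
    return text.encode("ascii", "ignore").decode("ascii")            # drop non-ASCII characters

def misspelling_rate(wikitext: str, dictionary: set[str]) -> float:
    """Share of words in the cleaned text that are absent from the dictionary."""
    words = re.findall(r"[a-z]+", strip_markup(wikitext).lower())
    if not words:
        return 0.0
    unknown = [w for w in words if w not in dictionary]
    return len(unknown) / len(words)
```

With a hypothetical dictionary `{"the", "cat", "sat", "on", "mat"}`, the text `The '''cat''' sat on teh [[Rug|mat]]{{citation needed}}.` yields one unknown word out of six. The sketch also reproduces the weaknesses noted above: dropping non-ASCII characters mangles words such as "café", and any proper noun missing from the dictionary counts as a misspelling.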

In related news, the developers of an open-source multilingual proofreading application called LanguageTool released a beta application for proofreading Wikipedia articles. wikiCheck proofreads articles from the English and German Wikipedias based on a set of customizable syntax and grammar rules. A bookmarklet is available to access the application from a browser.

Wikipedians are "smart but fun", and have expertise in topics they edit

Three researchers from Stanford University and Yahoo! Research used a novel method to construct "a data-driven portrait of Wikipedia editors", as described in a preprint currently undergoing review for publication. [6] While earlier studies relied on Wikipedians participating in surveys (and identifying themselves as such), the authors mined data from users of the Yahoo! Toolbar for Wikipedia URLs containing an &action=submit parameter, thereby arriving at a sample of 1900 editors of the English Wikipedia.
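
To illustrate the idea of spotting edits in browsing logs, the following sketch checks whether a visited URL looks like an edit submission to the English Wikipedia. The function name and the exact host check are assumptions; the preprint's actual filtering rules are not described here.

```python
from urllib.parse import urlparse, parse_qs

def is_wikipedia_edit(url: str) -> bool:
    """True if the URL targets en.wikipedia.org and carries action=submit (hypothetical rule)."""
    parsed = urlparse(url)
    host = parsed.hostname or ""
    params = parse_qs(parsed.query)  # e.g. {"title": ["Foo"], "action": ["submit"]}
    return host == "en.wikipedia.org" and "submit" in params.get("action", [])
```

Parsing the query string, rather than searching for the literal text "&action=submit", also catches URLs in which `action` happens to be the first parameter.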

Their first main finding is that "on broad average, Wikipedia editors seem, on the one hand, more sophisticated than usual Web users, reading more news, doing more Web searches, and looking up more things in dictionaries and other reference works; on the other hand, they are also deeply immersed in pop culture, spending much online time on music- and movie-related websites." However, these "entertainment lovers ... form only a highly specialized subgroup that contributes many edits".

Based on the toolbar data, the paper also tries to answer the question "Do Wikipedia editors know their domain?" and related questions, positively: "across all topical domains Wikipedia editors show significant expertise. ... We also show that more substantial edits tend to come from experts", and that logged-in editors show more expertise than IP address editors. A final result is that "About half of the click chains culminating in an edit start with a Web search, with the other half originating on Wikipedia’s main page."

Wikipedia as a database for structured biological data

A special issue of Nucleic Acids Research features 11 articles describing how wikis and collaborative technology can be used to enhance biological databases. A commentary by Robert Finn, Paul Gardner and Alex Bateman [7] discusses in particular how to leverage Wikipedia, its collaborative infrastructure and large editor community to better integrate articles and biological data entries: the authors argue that the project offers an opportunity for crowdsourcing the curation and annotation of biological data, but faces major challenges for expert engagement, i.e. "how to get scientists en masse to edit articles" and "how to allow editors to receive credit for their work on an article".

Another article in the same issue [8] presents the Gene Wiki, an open-access and openly editable collection of Wikipedia articles about human genes. The article describes how structured data available on Gene Wiki articles is kept in sync with the data from primary databases via an automated system and how to automatically compute the quality of articles in the project at word or sentence-level using WikiTrust.

Individual and social drivers of participation in Wikipedia

A thesis entitled Individual and social motivations to contribute to Commons-based peer production was submitted by University of Minnesota student Yoshikazu Suzuki for an MA in mass communication. The thesis presents and discusses the results from a small series of interviews as well as a survey exploring individual and social motivations of Wikipedia contributors, drawing on social identity theory, volunteerism and uses and gratifications theory. The survey, run in July 2011 with support from the Wikimedia Research Committee, collected 208 responses from a random sample of 950 among the top English Wikipedia editors. The results, obtained by applying principal components analysis to the responses, reveal eight distinct motivational factors: providing information, the seeking of creative stimulation, concern for others’ well-being, the need to be entertained, the avoidance of negative self-affect, cognitive group membership, career benefits, and social desirability. An analysis of the relative strength of each factor indicates that providing information, the seeking of creative stimulation, and concerns for others’ well-being were the three strongest motivational dimensions. Grouping the eight factors into two macro-categories according to self- and other-focused motivations, the other-focused motivations were found to be significantly stronger than the self-focused motivations. The thesis reviews the implications of these results for the design of incentives for participation and editor retention. The full text of the thesis [9] and an executive summary are available under open access.

Mining article revision histories for insights into open collaboration

A paper in this month's edition of First Monday, ambitiously titled "Understanding collaboration in Wikipedia", [10] reports on a statistical analysis of a complete dump of the English Wikipedia (225 million article edits) with regard to several quantities, starting with two that were introduced in a 2004 paper by Andrew Lih "as a simple measure for the reputation of [an] article within the Wikipedia": the total number of edits an article has received ("rigor") and the number of (logged-in and anonymous) users who have edited the article ("diversity"). The First Monday paper cites a 2007 study from the same journal, which found that featured articles tend to have more edits and contributors (while controlling for a few other variables) [11] as a justification for using "rigor" and "diversity" as proxies for article quality, but includes other quantities such as the article size change for an edit. The paper cites earlier work on evaluating Wikipedia article quality (e.g. dismissing the well-known 2005 Nature study based on the mistaken assumption that it had "only focused on featured articles"), but does not discuss existing attempts at more sophisticated quantitative quality heuristics.

The First Monday paper highlighted that if consecutive edits by the same user are counted as one, the overall number of article revisions drops by more than 33%, "revealing that one in three revisions in Wikipedia consist of users responding to their own edits or continuing an ongoing edit begun by themselves". "Article diversity" ranged up to 12,437 contributors per article, with a median of 12 and an average of 32. One of the main conclusions is that "rather than reflecting the contributions and expertise of a large group of people, the typical article in Wikipedia reflects the efforts of a relatively small group of users (median of 12) who make a relatively small number of edits (median of 21)."
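
The quantities discussed here are simple to compute from a revision history. The sketch below assumes the history is available as a chronological list of editor names (a hypothetical input format, not the paper's code) and derives "rigor", "diversity", and the revision count after merging consecutive edits by the same user.

```python
from itertools import groupby

def article_stats(editors: list[str]) -> dict[str, int]:
    """editors: the username (or IP address) behind each revision, oldest first."""
    collapsed = [user for user, _ in groupby(editors)]  # merge runs by the same user
    return {
        "rigor": len(editors),                  # total number of edits
        "diversity": len(set(editors)),         # number of distinct contributors
        "collapsed_revisions": len(collapsed),  # consecutive same-user edits counted once
    }
```

For a made-up history `["Alice", "Alice", "Bob", "Alice", "Carol", "Carol", "Carol"]`, this gives a rigor of 7, a diversity of 3, and 4 collapsed revisions, since Alice's return after Bob starts a new run.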

Supporting the assumption that most edits do not result in significant changes in content, the study finds that 31% of all revisions cause a size change of fewer than 10 characters, and 51% a change of fewer than 30 characters, with an apparently significant peak at a four-character difference, presumably related to the insertion or removal of the four brackets ("[[ ... ]]") that generate a wikilink.

The author notes the slight decrease in the overall number of edits since 2008, but tentatively explains it by the increasingly complete coverage of encyclopedic topics, and doesn't share the widespread concerns about declining or stagnating editor activity: "participation in Wikipedia seems to remain as healthy as ever as revisions made per article created each year has annually increased since 2001 without exception".

A different paper [12] from last year's "Collaborative Innovation Networks Conference" similarly promises far-reaching insights from "Deconstructing Wikipedia" solely based on revision history statistics without analyzing the actual content changes, using a much smaller sample – 30 featured articles from the English Wikipedia, but also including timestamps. The data did not confirm the hypothesis that "the editor who initiated an article would have a high level of involvement in the article’s creation": for only five of the 30 articles, the initial author was the most frequent contributor.

A second conclusion is that for all of the articles in the sample, "there is a single Wikipedian whose contributions far exceed all others", ranging from 8% to 82% of the articles with an average of 39% (but the analysis does not seem to have sought to quantify the extent to which this exceeds the contributions of the second most frequent contributor). The author indicates that this supports Jaron Lanier's "oracle illusion" criticism of a supposed presentation of Wikipedia as a product of "the crowd". Somewhat tautologically, the author observes "that the control of an individual editor seemed to be reduced as more editors joined the process", and points to the need to analyze "a significantly larger number of articles" to answer the question whether "too many cooks spoil the stew" (apparently unaware of the significant body of earlier literature on this subject, starting with a 2005 paper that presented an answer in its title: " Too many cooks don't spoil the broth", and including the 2007 study which the above reviewed First Monday paper relied on).

A third result of the paper, which likewise might not surprise those already familiar with Wikipedia's editing processes, is that "the creation process is continuous and can go on for a very long time", with even articles about historic events from the distant past continuing to receive edits.

The author, an assistant professor in management and marketing at Virginia State University, concludes the paper by urging his readers to start "thinking about how the wiki platform, itself, is influencing the creation process".

Briefly

- The Wikimedia Research Committee launched a public consultation on the future data/research infrastructure for Wikimedia, in an effort to understand how to best serve the research and developer community with open data from our projects. The consultation will remain open through January 2012 and the full set of responses will be shared under a CC0 license.

- Semantic enhancements: In "Enhancing Wikipedia with semantic technologies", [13] Lee et al. review existing interfaces for semantic search and present their own platform for enhancements. Based on small-scale user tests, they find that one of their three enhancements – range-based queries – is strongly preferred by users, who would find it desirable not only in Wikipedia but on the wider web. A longer summary is available on AcaWiki.

- English and Finnish Wikipedias egalitarian, Japanese hierarchical: A paper titled "Analyzing cultural differences in collaborative innovation networks by analyzing editing behavior in different-language Wikipedias" [14] (from the 2010 Collaborative Innovation Networks Conference, as was the revision statistics paper reviewed above) applied social network analysis to collaboration on featured articles on the English, German, Japanese, Korean, and Finnish Wikipedias. It "found notable differences in the communication behavior among egalitarian cultures such as the Finnish, and quite hierarchical ones such as the Japanese. While the English language Wikipedia shows a distinctive pattern, most likely because it is by far the largest and frequently exploring new concepts copied by others, it seems to follow more the Finnish egalitarian, than the Japanese hierarchical style".

- User:Emijrp shared a link on wiki-research-l listing 2,596 scholarly references on wikis, obtained by scraping Google Scholar results (on December 22, 2011), as part of a project to build a comprehensive bibliography about wikis – a challenging task that has seen various earlier attempts and was the subject of a workshop at this year's WikiSym (see the October and April editions of this research report).

- Should doctors use and edit Wikipedia?: An editorial in the Journal of the Royal Society of Medicine [15] asks whether doctors should reject the use of Wikipedia. The two-page article (one-day access: US$30.00) cites results about the popularity of Wikipedia among medical students, young physicians and the general public, and for some reason highlights the malicious edits of British journalist Johann Hari as an example of the downsides of Wikipedia's free editability. It contains a review of the literature on the reliability of Wikipedia's medical information, which is less thorough than that of the Psychological Medicine article reviewed above, and comes to a less approving but still somewhat positive conclusion: "Although Wikipedia entries are often poorly structured and difficult to understand, they are comparable in accuracy to some online resources, such as health insurance websites." The authors seem to lean towards recommending against ignoring Wikipedia: "One risk of clinicians disengaging from Wikipedia is that only contributors motivated by personal experience (e.g. patient anecdote) or vested interests (e.g. individual clinicians, institutions or companies promoting their own ideas and products) will remain."

References

1. Reavley, N. J., Mackinnon, A. J., Morgan, A. J., Alvarez-Jimenez, M., Hetrick, S. E., Killackey, E., Nelson, B., et al. (2011). Quality of information sources about mental disorders: a comparison of Wikipedia with centrally controlled web and printed sources. Psychological Medicine, pp. 1–10. (DOI)

2. Schultz, D. S., & Loving, J. L. (2012). Challenges Since Wikipedia: The Availability of Rorschach Information Online and Internet Users’ Reactions to Online Media Coverage of the Rorschach–Wikipedia Debate. Journal of Personality Assessment, 94(1), 73–81. Routledge. (DOI)

3. Ruiz, M., Drake, E., Glass, A., Marcotte, D., & van Gorp, W. (2002). Trying to beat the system: Misuse of the Internet to assist in avoiding the detection of psychological symptom dissimulation. Professional Psychology: Research and Practice, 33, 294–299. (PDF)

4. Stacey, Jon (2011). Text mining Wikipedia for misspelled words. (HTML)

5. Fogarty, Kevin (December 23, 2011). "Wikipedia test showes Americans' ubility too spel is detereeorating". ITworld.

6. West, Robert, Ingmar Weber, and Carlos Castillo (2011). Smart but Fun: A Data-Driven Portrait of Wikipedia Editors. (PDF)

7. Finn, Robert D, Paul P Gardner, and Alex Bateman (2011). Making your database available through Wikipedia: the pros and cons. Nucleic Acids Research, 40(1). (DOI, HTML)

8. Good, Benjamin M, Erik L Clarke, Luca de Alfaro, and Andrew I Su (2011). The Gene Wiki in 2011: Community intelligence applied to human gene annotation. Nucleic Acids Research 40 (1): D1255-1261. (DOI, HTML)

9. Suzuki, Yoshikazu (2011). Individual and social motivations to contribute to Commons-based peer production. MA thesis, University of Minnesota. (PDF)

10. Kimmons, Royce (2011). "Understanding collaboration in Wikipedia". First Monday 16 (12). (HTML)

11. Wilkinson, D.M., and B.A. Huberman (2007). "Assessing the value of cooperation in Wikipedia". First Monday 12 (4). (PDF)

12. Feldstein, A. (2011). "Deconstructing Wikipedia: Collaborative Content Creation in an Open Process Platform". Procedia – Social and Behavioral Sciences, 26, 76–84. (DOI)

13. Lian Hoy Lee, Christof Lutteroth, and Gerald Weber (2011). Enhancing Wikipedia with Semantic Technologies. In iUBICOM'11: The 6th International Workshop on Ubiquitous and Collaborative Computing, 2011. (PDF)

14. Nemoto, Keiichi, and Peter A. Gloor (2011). Analyzing Cultural Differences in Collaborative Innovation Networks by Analyzing Editing Behavior in Different-Language Wikipedias. Procedia – Social and Behavioral Sciences 26 (January 2011): 180–190. (DOI)

15. Metcalfe, D., & Powell, J. (2011). Should doctors spurn Wikipedia? JRSM, 104 (12), 488–489. (PDF)

Fundraiser passes 2010 watermark, brief news

Update on Fundraiser 2011

The Wikimedia Foundation has posted an update on its 2011 annual fundraiser. The update featured images and short biographies of twelve faces that were selected for use during this year's fundraiser. As fundraising chief Megan Hernandez explained, "these past few weeks, we’ve rotated through a couple dozen appeals with people from different parts of the world with unique Wikipedia experiences and personal stories to tell. ... Right now and for the next few days, we have all the appeals up live together." As of the time of writing, all twelve appeals that made it through the selection process are still in active rotation, along with Jimmy Wales' own personal appeal.

The annual fundraiser is the Wikimedia Foundation's biggest single source of income, and has been growing with the project since early efforts from 2004. As with last year's drive, this year's event kicked off with Jimbo Wales' "personal appeal", which consistently received the highest feedback in previous drives and has again this year (see previous Signpost coverage), with a change to a green banner curiously gathering increased contributions. The appeals featured then shifted their focus to the community, turning the spotlight on appeals from individual Wikimedians. As of 26 December, according to the fundraiser statistics, a total of $16.9 million has been raised, just surpassing last year's goal of $16 million.

In brief

- Grand Prix Brasil: Wikimedia Brasil is holding an editing "Grand Prix" to prepare and develop an offline version of the Portuguese Wikipedia. The Grand Prix is a race to develop 5,000 core articles, to be packaged with computers manufactured by Brazilian company Grupo Positivo. According to the Wikimedia Brasil community, this would mean this small part of the Portuguese Wikipedia would be installed on "approximately 13% of the national market of personal computers and with a greater penetration lower-income strata." The event starts in January 2012 with a registration deadline of 7 January; the goal is to have 100 participants in 15 teams, and potential contributors are encouraged to sign up. Prizes are available for contributors, including "buttons, stickers, notebooks and t-shirts".

- New community fellow: The Wikimedia Foundation has announced Sarah Stierch as the first recipient of the Wikimedia Community Fellowship for 2012. According to the Foundation's official blog, Stierch's fellowship "is intended to support her commitment to encouraging women’s participation in Wikimedia projects." Stierch, a graduate student in Museum Studies at George Washington University, was a 2011 Wikipedian in Residence at the Archives of American Art in Washington D.C. (Signpost coverage), and conducted the Women and Wikimedia Survey 2011 on the gender gap on the male-dominated Wikipedia (see previous Signpost coverage). The Wikimedia Fellowships program is currently accepting applications through to the 15 January deadline.

- Wikimedia Israel targets unfulfilled government promise: On 25 December, Wikimedia Israel used a post on its blog (automatic translation) to publish a letter addressed to several figures within the Israeli government. The letter, headed "Re: failure to implement the government decision regarding the release of Government Press Office photographs to the public", noted that although "on 8 May, Independence Day, the government decided to make the אלבום התמונות הלאומי ['national photo album'] and GPO-published images accessible to the public free of charge" (an "important" decision "accompanied by interviews and many articles in the media"), "more than half a year has passed and the government's decision has not yet been implemented". In unrelated news, Wikimedia France posted a summary of their December 9 ceremony at the Musée de Cluny for the winners of the Wiki Loves Monuments (WLM) competition. The event also featured a private tour of the museum.

- New mailing list created: Stuart West, the Foundation's treasurer, recently founded a new treasurers' mailing list, to disseminate financial and auditing best practice among those responsible for financial transparency within both the Foundation itself and its many affiliated chapters (West was keen to stress, however, that "the list is public and anyone interested in financial reporting and transparency is welcome"). Its members are currently "meeting and greeting"; West took the opportunity to write a detailed post describing the current governance structure of the Wikimedia Foundation.

- Office hours: Philippe Beaudette, the Wikimedia Foundation's "Head of Reader Relations", held an office hours session on 21 December along with Maggie Dennis (moonriddengirl). The discussion focussed on the team's response work, including work on emergency, BLP, legal and technical tickets. For example, Beaudette noted that "one of the things that we hear over and over again ... is that readers want a Share/Like button. Some of our experienced editors are opposed to it, but readers really want it". Beaudette held a similar office hours meeting on 22 December.

- Two temporary wikis closed: The Tenth Anniversary and ReaderFeedback wikis were both closed this week. The 'ten' Wikipedia was an organizational wiki to facilitate celebrations of Wikipedia's 10th anniversary; the ReaderFeedback wiki served as a testbed for the ReaderFeedback extension. The extension, which has similarities to the ArticleFeedback extension currently being used on the English Wikipedia, has not been in development for some time. In related news, the Indonesian Wiktionary has reached 60,000 entries and 100,000 total pages, the French Wikisource reached 150,000 text units, the Serbian Wikinews reached 70,000 articles, and the Occitan Wikipedia has reached 100,000 total pages.

- New administrators: The Signpost welcomes Slon02 as the English Wikipedia's newest administrator.

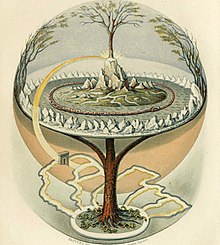

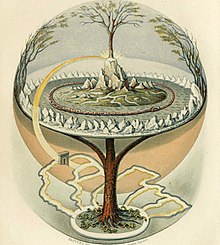

The Tree of Life

This week, we sat down with WikiProject Tree of Life, one of Wikipedia's oldest projects, predating the creation of the WikiProject concept. While WikiProject Tree of Life's current page was formulated in June 2002, the effort to organize Wikipedia's articles on all living organisms dates back to at least late 2001, when Manning Bartlett mentioned the existing Tree of Life project in his proposal for the establishment of WikiProjects. Today, the project's goals remain unchanged, despite the proliferation of descendant projects handling various groups of living things. The project's to-do list includes creating missing articles for animals and plants, responding to cleanup tags, identifying unknown images of plants on Commons, and monitoring the project's watchlist. We interviewed members Shyamal, Bob the Wikipedian, JoJan, EncycloPetey, and Kevmin.

What motivated you to join WikiProject Tree of Life? Do you have any degrees or experience in biology? Are you a member of any of the kingdom- or species-specific projects under the scope of WikiProject Tree of Life?

- Shyamal: I have always been interested in the living world around me and have a master's degree which included biology subjects. Having trained briefly in entomology before leaving it due mainly to reasons of economics, it has been a particular pleasure to be able to continue some occasional secondary research on entomological aspects via Wikipedia. My knowledge and experience with birds may perhaps be richer than in other groups.

- Bob the Wikipedian: I have half of a minor in biology, but I've been a long-term enthusiast for as long as I can remember! My first book was the color-plate global biome book Minelli and Borella World Atlas of Animals (my parents bought it so they could answer my queries about what gorillas eat and what noise hippos make). Zoobooks made for a great supplementary addition each month once I got old enough to read, and Encarta Encyclopedia fed my thirst for knowledge through grade school. Ever since college started, though, I've felt the need to seek further information and share it as well, and Wikipedia's Tree of Life has let me do just that. In my spare time, I conduct various informal research projects; last summer I collected local wild invertebrates all year and fed and tried to breed (unsuccessfully) hundreds of brown recluses. This year, I've been keeping an eastern fence lizard and several five-liners, which I recently put outside to see if I can have them brumate in captivity. On Wikipedia, I mostly work with code-related tasks, such as the {{automatic taxobox}} template family and the {{geological range}} template family, but also create stubs for newly discovered paleozoids.

- JoJan: I started WikiProject Gastropods in 2004 at the request of an administrator, who was an early member of Tree of Life. I had no degree in biology or particular experience. When I realized the enormous scope of this project (somewhere between 80,000 and 100,000 species), it got through my head that this was an almost impossible undertaking and would take a long time. I had to learn everything from scratch: the biology, the taxonomy (what a mess it was in those days when searching the Internet!), the scientific jargon etc.... This shows that one doesn't need to be an experienced biologist to participate in one of the projects under the umbrella of Tree of Life. All one needs is dedication and a willingness to learn. Even uploading of photos of species is much appreciated.

- EncycloPetey: I have a bachelor's degree in biology and did extensive graduate work. Currently, I participate in WP:PLANTS and WP:ALGAE, and also work in these areas on Wikispecies. My participation began when I discovered there were many major groups of plants, algae, and fossil plants with little or no coverage on Wikipedia. I wanted to make things better.

- Kevmin: I've been working with various subprojects of WP:ToL since I started contributing to Wikipedia. As someone slowly working towards a degree in paleontology almost all of my contributions are on extinct animals and plants.

As one of the oldest collaborations on Wikipedia, WikiProject Tree of Life was created with an unusually creative name. Please describe for our readers the project's long history and why the project is named after the tree of life. Have there been any attempts to change the name to something related to the project's scope, like "WikiProject Taxonomy"?

- Shyamal: I am not sure of the origin, but it is definitely a nice name that reflects the broadness of its scope (which goes beyond taxonomy). The image of evolution has evolved from that of a "ladder" to a "tree" and someday will perhaps be seen as a (tangled) "web of life".

- EncycloPetey: I rather suspect it was influenced by the name of the Tree of Life web project [6], which was the first serious effort to create an interactive phylogenetic diagram for all of life, with editing and maintenance done by experts in their respective fields.

- Bob the Wikipedian: You've inspired me to do a bit of research. It would seem the original proposal didn't have the words "Tree of Life" anywhere in it; the name actually was assigned upon the creation of the WikiProject on 22 June 2002. To quote the original scope presented when the project commenced, "This WikiProject aims to present the taxonomy of all living species (and maybe some extinct ones as well) in a tree structure. This is a particularly ambitious WikiProject, as there are millions of them." The project was listed as a daughter project of WP:WikiProject Biology (not created until three years later!) and a granddaughter of WP:WikiProject Science, and was to be modeled after the ITIS and NCBI systems (the naming conflict with the Tree of Life Web Project appears entirely coincidental).

How does the project's scope differ from other large umbrella projects? What relationship does WikiProject Tree of Life have with the numerous sub-projects?

- Shyamal: most discussions relating to the taxobox and general aspects like article structure and naming tend to be taken up in the umbrella project.

- Bob the Wikipedian: The Tree of Life serves as a sort of organization within which are formed the various projects such as WikiProject Animals, WikiProject Plants, etc., which all have their sub-organization-level projects as well. So when someone has a question or concern that affects part of the Tree of Life, they post it on the talk page of the most common denominator-- that is, if someone has a question about how to make an article about a dolphin have the correct information that would appear on any other mammal page, the topic gets posted at Wikipedia talk:WikiProject Mammals. If someone has an idea for how to improve the taxobox, this affects all branches of life, so a notice goes at Wikipedia talk:WikiProject Tree of Life.

- EncycloPetey: Aspects of style as well as questions of principles and policies are discussed here, rather than particular cases of content. Most content-specific questions are handled by sub-projects.

- Kevmin: As others have noted, the project acts as a focus for questions that are more general in nature and affect multiple subprojects. These discussions include taxobox functionality and improvement and general article structuring. The project is also a home for the organisms and articles that do not have a subproject.

Do you feel there are particular kingdoms that are better represented on Wikipedia? Do some living things elicit more enthusiasm and activity from editors?

- Shyamal: for sure, we have a bias towards the visible and more appealing taxa. We also have geographic differences and have a particularly limited coverage for Africa, parts of Southeast Asia and South America that are particularly rich.

- Bob the Wikipedian: Honestly, I don't dabble much in separate kingdoms (I stick with Animalia myself), but I can say with certainty there are phyla that don't get enough attention; for instance, Porifera. Speaking for paleozooids, however, there is a dramatic bias toward dinosaurs, even though this is one of the smallest known groups of paleozooids! To properly reflect the species distribution, Wikipedia would need perhaps at least a thousand articles on prehistoric shellfish per each individual article on a dinosaur!

- EncycloPetey: Most definitely. We have pretty good coverage of vertebrates and insects, and some seed plants. We have little to no coverage of echinoderms (seastars and urchins), bryophytes (mosses and liverworts), or algae. Articles about micro-organisms are few and poorly covered, with some notable exceptions.

- Kevmin: Mammals and vertebrates in general always attract more attention than other groups. But conversely the coverage of extinct mammals and extinct groups outside of Dinosaurs is very spotty at best.

WikiProject Tree of Life has provided resources for editors to create articles for the numerous living things included in various lists of missing articles. How close is Wikipedia to providing a stub about every known creature? What will be the next step once that goal is achieved?

- Shyamal: that goal will likely not be reached here or on The Encyclopedia of Life (EoL) project. The major differences between Wikipedia and other projects are (a) a holistic view - including links to biographies (b) cultural associations and the possibility of (c) reuse due to a better licensing scheme (See doi: 10.3897/zookeys.150.2189 on this issue). Things like semantic search (for example a friend was recently looking for literature on fishes preying on birds and almost all keyword based searches produce false positives - a semantic search would differentiate the object, subject and verb) will become more interesting in the future, and Wikipedia will surely form the best corpus available for that.

- Bob the Wikipedian: Ha! We certainly try, but to be quite honest, it will be MANY years down the road before we can even come close to cataloguing all known species. Even given that time, there's no official list of accepted species-- many species are rather ambiguous in that scientists don't agree on whether they are unique species, so the idea of Wikipedia eventually being the ultimate compendium of all known species is a bit misguided.

- JoJan: The WikiProject Gastropods has a working group of about 25 collaborators, some of whom are very active. We have a bot active at this moment, working at a slow pace (as we are required to check it each time), creating stubs (or even articles qualified at the level of “start”) for several tens of thousands of species. This is already saving us a lot of time, enabling us to add the necessary data without going to the trouble of first creating an article from scratch.

- EncycloPetey: We don't even have stubs for all known species, and we aren't likely to have that in the near future. Classification is still a dynamic field, with continual new discoveries made of species and new findings published regarding the relationships of known ones. Sometimes a fossil that was originally thought to be an animal turns out to be an alga, and then the whole classification will change accordingly. If we did have all the stubs started and up-to-date on their classification, we'd still want to add coverage of the organisms' distribution, habitat, appearance, interactions with other organisms, and so forth. There's also a continual search for new and better images to accompany articles, and an on-going effort to cross-link with Wikispecies, Commons, and Wikipedias in other languages.

- Kevmin: We are very far from that goal for the living groups, and we are still missing most of the higher level articles for extinct groups, going as far up as orders and classes!

What are the project's most pressing concerns? How can a new contributor help today?

- Shyamal: I think experts need to consider using Wikipedia to share their literature reviews. There is a level of expertise needed in order to follow WP:UNDUE and WP:RS, something that is denied more often than not. There is an important role for all others including that of looking around at the living world and asking questions. One would really hope that the projects and the reference desk actually converge in a meaningful way - for instance it would be great if there is a place where people can upload images and ask for identifications of living things (among others). Questions and images would encourage research oriented editors to create and develop articles.

- Bob the Wikipedian: For me, as a part of the TaxForce ( WP:TAXFORCE -- we help out with the scientific classification or "taxonomy" around here), maintaining an effective taxonomy display is important. I've been working closely with the other members of the TaxForce to set up and maintain a relational database structure so that taxonomies can be automated quickly and efficiently, allowing complex scientific revisions to be quickly reflected on Wikipedia as they happen and are accepted into scientific culture.

- EncycloPetey: There's a great need to improve the articles on the high-level and well-known groups. The articles on the kingdoms Archaea, Bacteria, and Fungi have all been awarded FA status, but Plant and Animal are far from achieving even GA. There are many articles of this stature that are close to reaching the next level, but much of the focus has been on improving the articles on smaller groups or species. As a result, we have great articles on the Florida strangler fig and the Marsh rice rat, but poor articles on Amphibians and Palms, each rating only a "C".

Anything else you'd like to add?

- Shyamal: In the Victorian era, colonial officers collected specimens and sent them back to museums in their home countries (e.g. the British Museum) and this led to a centralization of information in these places. We continue this process today without the colonization and in a far more decentralized form. Just a casual photograph that I added to Wikimedia Commons, of a fly (Teleopsis sykesii), helped an expert on that group in producing a paper that settled some long-standing taxonomic confusion resulting from a mixup of labels in a museum collection. Another friend contributed the first ever photograph of a burrowing snake (Melanophidium bilineatum), a species that had not been seen in the wild since it was described in 1870. So if ever the Wikimedia Foundation ends up with an excess of funds, please consider using it towards distributing digital cameras (even used ones) to people living in some of the last wilderness areas of the world and some means for getting their images onto Wikimedia Commons!

- JoJan: It would be a great help if the Museums of Natural History all over the world would share photos of their collections with us by uploading them to the Commons under an appropriate license (perhaps with the help of a bot). Many of these museums possess enormous collections of butterflies, beetles, shells etc. that, in most cases, stay out of sight of the public at large. Curators of these museums could, on the other hand, also send an invitation to us to come and photograph the collections or part of the collections that interest us. Such photos could then eventually end up in a taxobox or as an illustration in an article about that particular species and thus display an item of their collection for all to see. This is simply a win-win situation.

- EncycloPetey: I'm impressed and pleased to see coordinating efforts at Commons and Wikisource. Commons continually surprises me with images of plants I've never had the privilege to see before, even in a garden or book. As a taxonomist and bibliophile, I'm excited by the various Wikisource projects' efforts to amass collections of papers by early naturalists who first described and named the various organisms. This effort makes it much easier to research and cite those early sources which laid the foundation of modern taxonomy.

- Kevmin: The current collaboration between ZooKeys and Wikimedia has opened up a source for many high quality images and diagrams, making the articles here richer for it.

Next week we'll ring in the New Year by reflecting on the projects we saw in 2011. Get a head start by visiting the archive.

Going through the roster with Killervogel5 and a plethora of featured content

We interviewed Killervogel5, who this week has had the final list in the Philadelphia Phillies all-time roster promoted. Killervogel5, also known as KV5, has been editing Wikipedia since January 2006 and has contributed 57 featured lists, 4 featured topics, 8 good articles and 83 did you knows. KV5 gives us background information on the featured lists, his plans for the future, and suggestions for those who wish to write featured lists.

On the Philadelphia Phillies roster project: I began the Phillies roster project in May 2010, and it was completed in December 2011, so the project as a whole took me 19 months to finish. Originally, there were 21 articles in the topic, but during the course of the various and sundry FLC nominations, it was reduced to 18 through mergers. The most time-consuming part was definitely the table building. That was the first part of the project that I tackled and it turned out to be a smart move. If I hadn't had all the tables done, it would have been nearly impossible to write the ledes for each list and I probably would have quit somewhere about ... probably letter F or so.

The most difficult part of this project was adjusting to the changing FL standards. At the time that I nominated Philadelphia Phillies all-time roster (A) for featured list, a site-wide movement for better compliance with Wikipedia's policies on accessibility was gaining ground at FLC. I offered to make that first list a test case for successfully implementing the improved accessibility standards, but this occurred after the lists had already been completed in userspace and moved to the mainspace. So I had to go through and change the format of each list. (I have to give a shout-out to RexxS for his help on the ACCESS requirements.)

I'd be remiss if I didn't mention the most fun part: all of these lists were moved to the mainspace at the same time, and they became a gigantic DYK. I had a lot of good times developing a good hook for that.

I chose to improve the Phillies' roster lists because it is an intersection of my two big loves on Wikipedia: the Philadelphia Phillies, a team I've followed since I was a young child; and writing featured lists, which gives me great satisfaction. I really do enjoy taking on large list-related projects. Some previous baseball-related list projects of mine include the series of Rawlings Gold Glove Award and Silver Slugger Award lists and several lists of Major League Baseball managers.

On KV5's plans for the future: I haven't quite decided on my next project yet, but rest assured, I'll try to make it something monumental! I have started improving the List of Philadelphia Phillies owners and executives, using a book I received as a gift last Christmas (yes, I asked for a reference book for Christmas just for Wikipedia), but I've gotten away from it so maybe I'll tackle that next.

On writing featured lists: Featured lists require significantly less prose, but there is a lot of specialized mark-up that writers need to know to comply with accessibility standards. For new writers trying their hands at FLC, I always suggest peer review before an FLC nomination. There are so many little things here and there that can hang up an FLC nomination. Always check and double-check the reliability of your sources. We have the reliable source noticeboard or the FLC nomination talk page if you have questions on those, or you can seek out help at an appropriate WikiProject talk page. Finally, always review the appropriate criteria and make sure that, in the case of featured lists, your list meets the standards for prose, the lead, comprehensiveness, structure, style, and stability.

Featured articles

Eleven featured articles were promoted this week:

- Mark Hanna ( nom) by Wehwalt. Mark Hanna (b. 1837) was a businessman and the political manager of American president William McKinley. After being expelled from college, Hanna served briefly in the American Civil War before finding employment with his new wife's father. Soon made a partner in the firm, after his 40th birthday Hanna turned to politics, becoming a political manager (he is often credited with originating the modern presidential campaign) and a US Senator. Hanna died of typhoid fever in February 1904.

- Crescent Honeyeater ( nom) by Casliber and Mdk572. The Crescent Honeyeater (right) is a songbird from south-east Australia. The species has dark grey plumage and paler underparts, highlighted by yellow wing patches, with the females being slightly duller in colour than the males. The Crescent Honeyeater forms long term pairs, with the female building the nest and caring for young. The current environmental classification of the bird is least concern.

- " Single Ladies (Put a Ring on It)" ( nom) by Jivesh boodhun. "Single Ladies", a song by Beyoncé Knowles from her album I Am... Sasha Fierce, was inspired by Knowles' secret marriage to Jay-Z. The lyrics address men being unwilling to commit in relationships and are put to dance-pop and R&B tunes with dancehall and bounce influences. The song, which won three Grammy awards and was named one of the best songs of 2008, peaked at number one in the United States and has been certified quadruple-platinum.

- U.S. Route 2 in Michigan ( nom) by Imzadi1979. US Route 2, which connects Everett, Washington, to the Upper Peninsula of Michigan, runs 305.151 miles (491.093 km) and was designated on 11 November 1926. The route includes several memorial highway designations and historic bridges, and passes through two national and two state forests.

- "Rehab" (Rihanna song) ( nom) by 1111tomica. "Rehab", from Rihanna's album Good Girl Gone Bad, was written by Justin Timberlake. The lyrics, with vocals provided by Rihanna and Timberlake, discuss the singer's former lover as if he were a disease. Although critical opinion was divided on the song, "Rehab" went on to reach the Top 20 in five countries.

- Diffuse panbronchiolitis ( nom) by Rcej. Also known as DPB, this inflammatory lung disease is of unknown cause and is found predominantly in East Asian males. Usually striking around the age of 40, the disease causes lesions in the lungs and inflammation; it can lead to bronchiectasis, an irreversible lung condition that involves the enlargement of the bronchioles and the pooling of mucus in the bronchiolar passages.

- Kenneth R. Shadrick ( nom) by Ed!. Often incorrectly called the first American killed in the Korean War, Shadrick (b. 4 August 1931) joined the US military after dropping out of high school. He was killed by machine gun fire from a North Korean T-34 tank on 5 July 1950; his death was reported widely and hundreds attended his funeral.

- " One Tree Hill" ( nom) by Melicans. "One Tree Hill", a song from Irish rock band U2's 1987 album The Joshua Tree, was written to honor Bono's friend Greg Carroll, who had recently died in a motorcycle accident. The song, written to reflect Bono's thoughts on Carroll's funeral, was further developed through a jam session with the rest of the band. Critically acclaimed, "One Tree Hill" was initially not performed in concert as Bono feared for his emotional state. Today the song is performed sporadically.

- American Livestock Breeds Conservancy ( nom) by Dana boomer. The ALBC is a non-profit organization which promotes and preserves rare breeds of livestock. Founded in 1977, the organization currently has 3,000 members, 9 staff members, 19 members on its board, and an operating budget of almost half a million dollars. The organization has seen several successes, saving breeds from extinction and (for a time) keeping a gene bank of rare breeds.

- Robert de Chesney ( nom) by Ealdgyth. Robert de Chesney, Bishop of Lincoln during the middle of the 12th century, was an early patron of Thomas Becket; however, his nephew Gilbert Foliot was one of Becket's "implacable foes". Active in his diocese, de Chesney wrote at least 240 documents and disputed with St Albans Abbey over his right as bishop to supervise the abbey. He died in December 1166 and was buried in Lincoln Cathedral.

- Les pêcheurs de perles ( nom) by Brianboulton. Les pêcheurs de perles, meaning The Pearl Fishers, is an opera in three acts by the French composer Georges Bizet (pictured at right), to a libretto by Eugène Cormon and Michel Carré. First launched in a season of 18 performances beginning 30 September 1863, the opera received scathing reviews from the press and was not revived until 1886, after Bizet's death. In these later performances, the score was modified; however, since the 1970s attempts have been made to perform the opera as Bizet intended.

Featured lists

Seven featured lists were promoted this week:

- List of Brighton & Hove Albion F.C. seasons ( nom) by Struway2. Brighton & Hove Albion Football Club, an English association football club based in the city of Brighton and Hove, East Sussex, was founded in 1901. The team have spent 7 seasons in the fourth tier of the English football league system (with two divisional titles), 55 in the third (with 3 divisional titles), 18 in the second, and 4 in the top tier.

- Warren Spahn Award ( nom) by Muboshgu. The Warren Spahn Award is presented annually by the Oklahoma Sports Museum to the best left-handed pitcher in Major League Baseball (MLB). Created in 1999 and named after famous left-handed pitcher Warren Spahn, the award is not recognized by MLB.

- Twenty-five Year Award ( nom) by Found5dollar. The Award is given yearly by the American Institute of Architects to buildings that have "stood the test of time for 25 to 35 years" and "[exemplify] design of enduring significance". The first building awarded, in 1969, was the Rockefeller Center in New York City; the most recent winner is the John Hancock Tower in Boston.

- Radiohead discography ( nom) by GreatOrangePumpkin. British alternative rock band Radiohead have released eight studio albums, twenty-four singles, seven extended plays, thirty music videos, seven video albums, and two compilations since their debut album, Pablo Honey, was released in 1993. Their most successful album, OK Computer, was released in 1997 and went on to be certified triple platinum; their most recent, The King of Limbs, was released in February 2011.

- Philadelphia Phillies all-time roster ( nom) by Killervogel5. The Philadelphia Phillies, an American baseball team, have had 1,892 players since their founding in 1883. Based on family names, the most represented letter is M, with 202 players; the least represented is X, with 0. The most successful is R, with 4 out of 97 players holding records (Wall of Fame pictured above).

- List of colleges and universities in Minnesota ( nom) by Ruby2010. Of the nearly 200 colleges and universities in the US state of Minnesota, Hamline University in St. Paul, founded in 1854, is the oldest. The largest is the University of Minnesota, while the largest private one is the University of St. Thomas. Six tertiary education facilities that were once located in Minnesota have since closed.

- List of best-selling singles of the 1960s (UK) ( nom) by A Thousand Doors. This list of best-selling singles (defined as releases having fewer than four tracks and not lasting longer than 25 minutes) was originally compiled by the Official Charts Company and covers the period from 1960 to 1969. The most represented musical act was The Beatles (pictured at right), with 18 singles (including five of the top ten). The highest ranking single by a non-British act was "The Carnival Is Over" by the Australian band The Seekers, which peaked at number 6.

Featured topics

One featured topic was promoted this week:

- 1997 Atlantic hurricane season ( nom) by 12george1, Hurricanehink, and Juliancolton. The new featured topic, containing one good article and two featured articles, covers the 1997 Atlantic hurricane season and its two notable storms, Hurricane Danny and Hurricane Erika. The season saw seven named storms (including one major hurricane), a tropical depression, and an unnamed subtropical storm.

Featured pictures

Two featured pictures were promoted this week:

- Liberty Leading the People ( nom; related article), created by Eugène Delacroix and nominated by Sven Manguard. Liberty Leading the People (right), also known by its French title La Liberté guidant le peuple, is an 1830 painting commemorating the July Revolution. It has been described as Delacroix's most famous painting, even having featured on the 100 franc banknote in 1993. After a nomination in 2010 failed for a lack of support, this nomination passed 5 to 1.

- Destruction of the USS Arizona during the attack on Pearl Harbor ( nom; related article), created by an unknown author and nominated by Dusty777. This new featured picture dates from the 7 December 1941 Japanese attack on the US naval base at Pearl Harbor. A total of 353 Japanese fighters, bombers and torpedo planes sank or damaged eight US battleships, three cruisers, three destroyers, an anti-aircraft training ship, and a minelayer, killing 2,402 and wounding 1,282. The Japanese themselves suffered losses of 29 aircraft, five midget submarines, and 65 servicemen. Although six battleships were salvaged, the Arizona could not be raised; 1,177 servicemen died on the ship (below).


Three open cases, one set for acceptance, arbitrators formally appointed by Jimmy Wales

This week saw the opening of the Muhammad images case to address which depictions of the prophet Muhammad, if any, are appropriate to display in the respective articles, as community discussion had not produced a consensus on the question. Multiple users have submitted evidence, and some workshop proposals have been tabled.

The case regarding TimidGuy's ban appeal proceeded into its second week. The case was opened by TimidGuy to appeal the site ban imposed off-wiki by Jimmy Wales. Part of the case is being conducted off-wiki due to privacy matters. It is one of the most active arbitration cases at present, with substantial activity in both the evidence and workshop pages.

Betacommand 3 proceeded to its ninth week. There has been no activity on the evidence pages this week, though several proposals were made at the workshop, including by drafting arbitrator SirFozzie.

Case requests

Two new cases were requested this week. The first related to actress Demi Moore and conflicting information in reliable sources and tweets by the actress regarding her birth-name. It was declined due to a lack of prior dispute resolution, with an RFC or mediation suggested as alternatives by the committee.

The other request this week concerned the perceived uncivil conduct by Malleus Fatuorum, and his blocking, unblocking and reblocking by administrators Thumperward, John, and Hawkeye7, respectively. The request aimed to address whether Malleus's conduct was uncivil and warranted blocking, and whether the subsequent unblock and re-block constituted a wheel-war. At the time of writing, more than 100 users had commented on the request, and the case trended towards acceptance by the committee.

The two open requests for clarification regarding the Eastern European mailing list case and the Abortion motion had no activity this week.

Jimmy appoints 2012 Arbitration Committee

Jimmy Wales ceremonially appointed the eight recently elected arbitrators to the committee this week. In his statement, Courcelles, Risker, Kirill Lokshin, Roger Davies, Hersfold, SilkTork, and AGK were appointed to two-year terms, and Jclemens to a one-year term, as determined by both a community RfC last month and a more recent decision by the election coordinators on the matter of the one-year term. Jimmy encouraged the committee to review its history and to strive to find the right balance in its judgments: to be neither too lenient nor too strict, neither too quick nor too slow, and neither inconsistent nor arbitrary in its decisions.

He announced his intention to give up some of his traditional powers, and that to do this in an organised fashion he would form a council of editors to discuss various aspects of the history and state of the wiki, including its governance processes, to come up with ways to delegate these powers to the community. Details as to how such a council will be selected or how it will operate are yet to be announced.


Wikimedia in Go Daddy boycott, and why you should 'Join the Swarm'

Wikimedia in domain name hosting move

Wikimedia's domain names (including wikipedia.org) will no longer be managed by U.S.-based registrar Go Daddy, it was decided this week following concerns over the registrar's political activities.

The process that led to the decision to ditch the company that has managed Wikimedia's domains since at least 2007 seems to have begun with a December 23 post on the social news website reddit. The post, which has since received 35,000 votes and hundreds of responses, was a simple request directed at Jimmy Wales to "transfer Wikimedia domains away from Go Daddy to show you're serious about opposing SOPA". It refers to the registrar's then open support of the Stop Online Piracy Act, to which many Wikimedians and redditors are emphatically opposed. Many reddit commenters pledged donations if Wales committed to moving Wikimedia domains away from Go Daddy, part of a wider reddit campaign to get organisations to leave Go Daddy.

The response to the post was swift. The same day, Wales committed on his Twitter page to a move away from Go Daddy, although an orderly transition is likely to take some time. (Wales also announced the transfer of Wikia domains as part of the same process.) A twist came shortly after the announcement, when Go Daddy issued a press release stating that it was withdrawing its support for SOPA. The statement, a world away from the company's earlier description of opposition to SOPA as "myopic", does not yet seem to have prompted any change of course by Wales or the Foundation.

In brief

Not all fixes may have gone live to WMF sites at the time of writing; some may not be scheduled to go live for many weeks.

- How you can help: Join the Swarm: As reported on the wikitech-l mailing list, the WMF "TestSwarm" interface is back up and running on integration.mediawiki.org. The interface allows users to contribute to the all-important JavaScript testing of MediaWiki simply by specifying a username and pressing a button. The browser window, left open, will then check at 30-second intervals whether there are any tests it can run.

- MediaWiki core group explored: Director of Platform Engineering Robert Lanphier used a post on the Wikimedia blog to highlight the "core" grouping within the WMF platform engineering team. Lanphier writes that the group "is responsible for our sites' stability, security, performance and architectural cleanliness. This ends up translating into a lot of code review, along with infrastructure projects like disk-backed object cache, heterogeneous deployment, continuous integration, and performance-related work." The group is also notable for the fact that its members all started as volunteers. It has an open position (Software Security Engineer), and Lanphier appealed for applications from interested developers.

- 84 hours of pageview statistics missing: Approximately 3.5 days' worth of page view statistics, covering the period 23 December to 26 December, appear to have been lost after a change that contained an error was made to the code responsible for their creation in the early hours of 23 December (UTC). The alarm was raised on the English Wikipedia technical village pump; unfortunately, it seems likely that stats.grok.se and other page view counters will continue to report reduced viewer figures for 23 and 26 December, and zero counts for 24 and 25 December.

- LocalisationUpdates resume: On a more positive note, the WMF installation of the LocalisationUpdate extension has now been fixed. The extension, which copies across up-to-date translations daily from translatewiki.net, had been experiencing problems since early November.

- WMF features office hours: The WMF features team, which drives big projects such as the Visual Editor, will hold an "office hours" IRC talk on 4 January, Community Organizer Steven Walling clarified this week (foundation-l mailing list).

- Module experts acknowledged?: A discussion on the wikitech-l mailing list pointed to the definition and use of developer specialisations. The system would label developers (either paid or volunteer) as being the standard first point of call in an area, and there would be the potential for reviewing tie-ins, similar to how Mozilla developers handle patch review. The suggestion is one of many made in recent months to combat the problem of lengthy code review times.

- Internal programming languages: The issue of providing a safe programming language to template coders was once again discussed this week (wikitech-l mailing list). The often-mooted topic centres on what an ideal "template language" would look like: one that is safe, allows new templates to be created, and simplifies the coding of existing templates.

