| This is an archive of past discussions. Do not edit the contents of this page. If you wish to start a new discussion or revive an old one, please do so on the current talk page. |


Thanks for adding wayback links. One appears to go to an irrelevant page, and I was not sure what to do about it, so I marked it as a dead link. Dudley Miles ( talk) 10:40, 26 February 2016 (UTC)

- It would be a better idea to replace the link with something that actually works.— cyberpower Chat:Limited Access 03:51, 27 February 2016 (UTC)

Talk page check true/failed

The talk page message instructs:

- When you have finished reviewing my changes, please set the checked parameter below to true to let others know.

Shouldn't it say "..to true or failed"? Or there could be an inline comment next to the sourcecheck line giving more detail on the options. -- Green C 18:36, 26 February 2016 (UTC)

- I would prefer it to just leave the checkmark and say that "Archived sources have been checked", quite frankly. If they fail, they should be removed, and even though checked, the outcome isn't always "to be working". Additionally, on one such edit, one of the added archives was broken but correctable, and the other was useless. Rather than "working" or "failed", "checked" is quite sufficient.

- Please use a notifier such as {{U|D'Ranged 1}} to reply to me—I'm not watching your talk page due to its high traffic! Thanks!— D'Ranged 1 | VTalk : 18:54, 26 February 2016 (UTC)

- That's not how it's set up. You are supposed to set it to "failed"; see the {{sourcecheck}} template for how it works and the talk page for further discussion. The reason for this, I assume, is so the bot (and bot maker) can learn from mistakes. I agree there is confusion when some work and some fail, but that's fixable with correct wording. -- Green C 19:18, 26 February 2016 (UTC)

- Actually I don't really do anything with them. It was put there at the request of some editors, so they can commit to checking the bot's edits. It serves to benefit other editors by letting them know that someone has already checked the newest archives. The bot's methods for retrieving a working archive are about as optimized as they're going to get at this point, and while its accuracy for working archives is not 100%, which is impossible I might add, it's pretty high, roughly 95% based on random samples I surveyed. I'll leave this discussion open to let you guys decide how to proceed. Cyberbot's wording can be edited on wiki, on its configuration page.— cyberpower Chat:Offline 04:03, 27 February 2016 (UTC)

- I think the information on failed links would be useful to accumulate into a database. The bot could check whether a link is there before adding it. Thus instead of editors having to mark "failed" in multiple articles (assuming the same link is used in multiple articles), it would only need to be checked once, saving labor and improving accuracy. The database could also form the nucleus of a new bot in the future, such as one checking other archives besides Internet Archive, or of some manual process. None of this has to be done right now, but at least we can ask editors to keep the information updated. I'll look into the wiki and sourcecheck template for wording. -- Green C 13:23, 27 February 2016 (UTC)

- An example of a mix of true/failed: Talk:2006_Lebanon_War#External_links_modified. I also updated the talk page message but I guess the bot has to be restarted for it to be loaded. -- Green C 14:07, 27 February 2016 (UTC)

- Actually Cyberbot is already accumulating an extensive DB with details about the links, including a reviewed field. The aim is to build an interface on that so users can review what Cyberbot has done, fix, and call Cyberbot on selected articles.— cyberpower Chat:Limited Access 16:52, 27 February 2016 (UTC)
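The idea discussed in this thread, recording each reviewed archive once so the verdict can be reused across articles, could be sketched roughly as follows. This is a minimal Python illustration and not Cyberbot's actual database: the table name `reviewed_archives`, its columns, and all three function names are hypothetical.

```python
import sqlite3

def open_registry(path=":memory:"):
    """Open (and if needed create) a table of reviewed archive URLs.

    The schema is hypothetical; it only mirrors the true/failed values
    used by the checked parameter discussed above.
    """
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS reviewed_archives ("
        "archive_url TEXT PRIMARY KEY, status TEXT NOT NULL)"
    )
    return conn

def record_review(conn, archive_url, status):
    """Store a reviewer's verdict ('true' or 'failed') for one archive URL."""
    if status not in ("true", "failed"):
        raise ValueError("status must be 'true' or 'failed'")
    conn.execute(
        "INSERT OR REPLACE INTO reviewed_archives VALUES (?, ?)",
        (archive_url, status),
    )
    conn.commit()

def is_known_failed(conn, archive_url):
    """Return True if this archive URL has already been marked as failed."""
    row = conn.execute(
        "SELECT status FROM reviewed_archives WHERE archive_url = ?",
        (archive_url,),
    ).fetchone()
    return row is not None and row[0] == "failed"
```

With something like this in place, a bot could call `is_known_failed` before attaching an archive, so that a link marked as failed in one article is not re-added in another.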

A barnstar for you!

|

The Original Barnstar |

| Thank you for editing the "nurburgring lap times" page. i think i submitted a contribution there, but it seems that nobody has approved it. could you do that please? sorry, i am new to this. Gixxbit ( talk) 15:20, 27 February 2016 (UTC) |

- I'm sorry, I haven't edited that page.— cyberpower Chat:Limited Access 17:01, 27 February 2016 (UTC)

Way to get the bot to run on an article?

What are the ways to get this bot to run on an article? Thanks! — Lentower ( talk) 02:14, 25 February 2016 (UTC)

- Not at current.— cyberpower Chat:Limited Access 02:15, 25 February 2016 (UTC)

- Thanks for replying. — Lentower ( talk) 17:28, 27 February 2016 (UTC)

Additional option(s) would be helpful

I think I mentioned before, but worth mentioning again, how happy I am to see this bot running. It is salvaging a lot of dead links, and that's a great thing.

I have noticed one nit, not a big deal, but I don't have a good solution, and hope you can consider implementing something. The short statement of the problem is that the template left on the talk page has two options for the “checked parameter” and there are other options.

At present, the template is posted with the parameter set to false, which generates the following text string:

Archived sources still need to be checked

That's fine, but if you check, the only other option is to change it to true which generates:

Archived sources have been checked to be working

What do I do if I check and they are not OK? Neither option works. As an additional complication, the template often covers more than one reference, and the answers may be different.

There are other cases:

- I recently checked one archived link, identified in Talk:Charli_Turner_Thorne, concluded that the archive was done correctly, but the archive existed because USA basketball changed their naming schema, and I would prefer to use the corrected live link, rather than the Internet Archive link. I could technically say true, as I did check, and it was fine, but that doesn't convey the fact that I changed the link.

- There were two links, I checked one, it was fine, but did not check the other.

- There were two links, I checked both, one was fine, and replaced the other one with a different source

- There was one link, it was not fine, so I tracked down a working link.

In each case, I am setting the parameter to true, and adding an explanation, but I don't really like the fact that it says in big bold type that the checked links are working.

I think working out all the possible cases is overkill, but I would like three cases:

- Not yet checked

- Checked and all are fine

- Some or all checked, but some or all not fine, read on for full explanation:

Option three could use some word-smithing.-- S Philbrick (Talk) 21:04, 31 January 2016 (UTC)

- See Template:Sourcecheck— cyberpower Chat:Online 21:07, 31 January 2016 (UTC)

- Thanks, that helps.-- S Philbrick (Talk) 21:55, 31 January 2016 (UTC)

Would it be possible to have the option of adding "|checked=failed" added to the instructions that are left on talk pages? I had no idea this was possible before reading this (I was coming to post something similar) and it's a fairly common outcome when I check the IABot links. Thanks for all of your work - Antepenultimate ( talk) 21:11, 27 February 2016 (UTC)

- See a few sections down.— cyberpower Chat:Offline 04:38, 28 February 2016 (UTC)

- Thanks for adding the additional guidance to the talk page instructions! Antepenultimate ( talk) 05:42, 28 February 2016 (UTC)

The external link which I modified (as a bad link), and which has since been restored, points to a general (advertising) holding page that hosts a variety of links to other sites relating to aircraft services, but which is too general to be of any use to someone searching for the specific type of aircraft the article is about. That is why I made my edit. I suspect that the linked site was initially appropriate and relevant but has since become unmaintained; the site name has been retained, but is now being used to host advertising links. When you have had an opportunity to examine that destination site, your observations on the matter would be welcome. -- Observer6 ( talk) 19:42, 25 February 2016 (UTC)

- Use your own discretion.— cyberpower Chat:Limited Access 03:25, 27 February 2016 (UTC)

I did use my own discretion when deleting the inappropriate (bad) link. Now that the inappropriate link has been restored from the archives by CyberbotII, I suspect that CyberbotII will repeat its former (automated?) 'restore' action if I delete the inappropriate link for a second time.

In the absence of third party recognition that my edit was appropriate, I will leave the article as it stands, for others to discover its inadequacy. Regards -- Observer6 ( talk) 20:02, 27 February 2016 (UTC)

- Cyberbot doesn't add links to articles. It attaches respective archives to existing links. If you are referring to the addition of a bad archive, you have two options: find a better archive, or revert Cyberbot's edit to that link and add the {{cbignore}} tag to it instead. That will instruct Cyberbot to quit messing with the link.— cyberpower Chat:Limited Access 20:15, 27 February 2016 (UTC)

I appreciate your help. There are several external 'Reference' links at the end of the article in question. The first link is useless. I removed it. CyberbotII reversed my action by restoring the bad link from archive. I do not know of a suitable alternative link. If I now just add {{cbignore}}, Cyberbot will cease messing with the link, but that unsuitable link will remain. Having checked the poor reference several times, I am still convinced that it is totally unsuitable. Maybe I should change the bot's feedback message from false to true, and delete the link again. Would this be the correct procedure? Or will this create havoc or start a never-ending cycle of my removals and Cyberbot's automatic restorations?

I've tried reverting Cyberbot's edit, removing the bad ref, and adding {{cbignore}}. But the {{cbignore}} tag now wants to appear on the page! I will suspend action until it is clear to me what I should do. -- Observer6 ( talk) 00:44, 28 February 2016 (UTC)

- I've looked and looked again. I see nothing of the sort here and I am utterly confused now. Cyberbot is operating within its scope of intended operation, I see no edit warring of any kind, nor is the bot arbitrarily adding links to the page. There's not much more I can do here.— cyberpower Chat:Offline 04:36, 28 February 2016 (UTC)

Thank you. Matter has been resolved by Green Cardamom-- Observer6 ( talk) 16:42, 28 February 2016 (UTC)

Please note that the tag was removed because the discussion was completed. I put the tag for deletion. Regards, -- Prof TPMS ( talk) 02:21, 29 February 2016 (UTC)

Sourcecheck's scope is running unchecked

I keep adding {{cbignore}} and failed to your {{Sourcecheck}} additions.

While the goal is laudable, the failure rate seems very high. Can you limit the scope of your bot please? I don't think there's much point even attempting to provide archive links for {{Cite book}}, as it will rarely be useful. For instance:

- Revision 707318941 as of 04:16, 28 February 2016 by User:Cyberbot II on the 1981 South Africa rugby union tour page.

I am almost at the stage of placing {{nobots|deny=InternetArchiveBot}} on all pages I have created or made major editing contributions to, in order to be rid of these unhelpful edits. -- Ham105 ( talk) 03:40, 29 February 2016 (UTC)

- Is it just for cite book that it's failing a lot on?— cyberpower Chat:Limited Access 03:45, 29 February 2016 (UTC)

Cyberbot edit: ICalendar

Thanks for adding an archive-url! I set the deadurl tag to "no" again, because the original URL is still reachable. Also, is there a way for Cyberbot to choose the "most current" archived version for the archive-url? -- Evilninja ( talk) 02:56, 29 February 2016 (UTC)

- It chooses one that's closest to the given access date to ensure the archive is a valid one.— cyberpower Chat:Limited Access 03:53, 29 February 2016 (UTC)
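As a rough illustration of the "closest to the access date" rule described in the reply above, the selection could look like the following Python sketch. It is not Cyberbot's code: the function name `closest_snapshot` is hypothetical, and it assumes the candidate snapshots are already known as 14-digit Wayback timestamps (YYYYMMDDhhmmss).

```python
from datetime import datetime

def closest_snapshot(timestamps, access_date):
    """Return the Wayback timestamp nearest to access_date, or None.

    timestamps: iterable of 14-digit strings such as "20110609000000".
    access_date: datetime taken from the citation's access date.
    """
    candidates = list(timestamps)
    if not candidates:
        return None

    def distance(ts):
        # Absolute gap between the capture time and the access date.
        return abs(datetime.strptime(ts, "%Y%m%d%H%M%S") - access_date)

    return min(candidates, key=distance)
```

Picking the nearest capture rather than the newest one trades freshness for a better chance that the archived page still shows the content the editor actually cited.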

Unarchivable links

Hello, I don't think Cyberbot II should be doing this sort of thing, especially with a misleading edit summary. Graham 87 15:17, 29 February 2016 (UTC)

- You're right, it shouldn't. It's not programmed to do that, and that's clearly a bug.— cyberpower Chat:Online 15:47, 29 February 2016 (UTC)

Cyberbot feedback

Hi, I'm not sure from all the banners above about not being Cyberbot, being busy in RL, etc. whether this is the place to leave some Cyberbot feedback, but the "talk to my owner" link sent me here, so I'll leave it here.

I occasionally review Cyberbot links and usually they are OK. However, I just reviewed the two on the article Greenwich Village and both of them basically just added archived copies of 404 pages. It might be good if there was some sort of compare or go back in time feature for the bot to select an archive, so this doesn't happen, as obviously an archived version of a page saying "404" or "Oops we can't find the page" or whatever is not helpful here. Cheers, TheBlinkster ( talk) 15:22, 27 February 2016 (UTC)

- Cyberbot already does that.— cyberpower Chat:Limited Access 17:05, 27 February 2016 (UTC)

- If it should be, it doesn't seem to be working. A couple from this morning...

- In Herefordshire: "Constitution: Part 2" (PDF). Herefordshire Council. Archived from the original (PDF) on 9 June 2011. Retrieved 5 May 2010.

- In Paul Keetch: Staff writer (10 July 2007). "MP still recovering in hospital". BBC News Online. Archived from the original on 11 November 2012. Retrieved 10 July 2007.

- Regards, -- Cavrdg ( talk) 10:26, 28 February 2016 (UTC)

Came here to say the same thing. An archive copy of a 404 is no better than a live 404. Actually it's worse as the end user doesn't get any visual clue the link will not be there. Palosirkka ( talk) 12:24, 28 February 2016 (UTC)

Is Cyberbot using Internet Archive's "Available" API? If so it would catch these. API results for the above examples return no page available:

- https://archive.org/wayback/available?url=http://news.bbc.co.uk/2/hi/uk_news/england/hereford/worcs/6287440.stmdate

- https://archive.org/wayback/available?url=http://www.herefordshire.gov.uk/docs/002_Part_2_Articles__Dec09.pdf

"This API is useful for providing a 404 or other error handler which checks Wayback to see if it has an archived copy ready to display. "

-- Green C 15:15, 28 February 2016 (UTC)
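For readers unfamiliar with the "Available" API mentioned above, a defensive way to interpret its JSON response might look like this Python sketch. It assumes the documented response shape, in which `archived_snapshots` holds a `closest` object with `available`, `status` and `url` fields; the function name `usable_snapshot` is hypothetical and this is not how Cyberbot itself is written.

```python
import json

def usable_snapshot(response_text):
    """Return the archive URL from an Available API response, or None.

    A blank body, malformed JSON, an empty "archived_snapshots" object,
    or a closest capture whose status is not 200 all count as "no archive".
    """
    try:
        data = json.loads(response_text)
    except (TypeError, ValueError):
        return None  # timeout placeholder, blank or malformed body
    if not isinstance(data, dict):
        return None
    snapshots = data.get("archived_snapshots")
    closest = snapshots.get("closest") if isinstance(snapshots, dict) else None
    if not isinstance(closest, dict):
        return None
    if closest.get("available") is True and str(closest.get("status")) == "200":
        return closest.get("url")
    return None
```

Treating every unexpected shape as "no archive" means the worst case is a link left untouched, rather than an archive of an error page being attached.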

- Funny enough it is, and it gets its result from that. I have no idea why Cyberbot is getting a result now.— cyberpower Chat:Online 17:57, 28 February 2016 (UTC)

- Would Cyberbot see an API timeout or otherwise unexpected result (blank JSON) as a positive result? -- Green C 18:39, 28 February 2016 (UTC)

- No chance. If it gets no data, it couldn't know what URL to use. It wouldn't be able to update the ref and Cyberbot will ignore that ref.

- ... and 302s? This, at 19:56:36 UTC, at Central Devon (UK Parliament constituency): "Final recommendations for Parliamentary constituencies in the counties of Devon, Plymouth and Torbay". Boundary Commission for England. 24 November 2004. Archived from the original on 21 July 2011. Retrieved 25 April 2010. The archive link redirects to a page for which the archive reports "Page cannot be crawled or displayed due to robots.txt". The API https://archive.org/wayback/available?url=http://www.boundarycommissionforengland.org.uk/review_areas/downloads/FR_NR_Devon_Plymouth_Torbay.doc gives {"archived_snapshots":{}}

- Regards -- Cavrdg ( talk) 20:28, 29 February 2016 (UTC)

- Cyberbot is using a newer version of the API, built for Cyberbot, compliments of the dev team of IA. It could be that there is a major bug in commanding which status codes to retrieve. Instead of retrieving the 200s it's retrieving all. I'll have to contact IA, and double check Cyberbot's commands to the API to make sure it's not a bug in Cyberbot.— cyberpower Chat:Online 20:59, 29 February 2016 (UTC)

This is the actual API command issued by Cyberbot in function isArchived() in API.php:

It returns two characters, "[]" (an empty JSON array, meaning no captures), and I verified it's correctly interpreted and sets $this->db->dbValues[$id]['archived'] = 0. In case it falls through to another function, similar commands in retrieveArchive():

- http://web.archive.org/cdx/search/cdx?url=http://www.boundarycommissionforengland.org.uk/review_areas/downloads/FR_NR_Devon_Plymouth_Torbay.doc&to=201010&output=json&limit=-2&matchType=exact&filter=statuscode:(200%7C203%7C206)

- http://web.archive.org/cdx/search/cdx?url=http://www.boundarycommissionforengland.org.uk/review_areas/downloads/FR_NR_Devon_Plymouth_Torbay.doc&from=201010&output=json&limit=2&matchType=exact&filter=statuscode:(200%7C203%7C206)

Same results. I can't find anywhere else in the code that downloads data from archive.org and if it's not getting the archive URL from here, then from where? Must be the API. -- Green C 21:08, 29 February 2016 (UTC)
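The two CDX queries quoted above differ only in the direction of the search around a pivot date. A small Python sketch of how such queries could be assembled and their JSON output read is shown below. The helper names `cdx_query` and `parse_cdx` are hypothetical, the parameters are copied from the URLs in this thread, and the parser assumes the CDX server's JSON form in which the first row is a header of field names.

```python
import json
from urllib.parse import urlencode

CDX_ENDPOINT = "http://web.archive.org/cdx/search/cdx"

def cdx_query(url, pivot, direction):
    """Build a CDX query for captures before or after a pivot date (YYYYMM).

    'before' mirrors to=<pivot>&limit=-2 and 'after' mirrors
    from=<pivot>&limit=2, as in the two URLs quoted in the thread.
    """
    if direction == "before":
        window = [("to", pivot), ("limit", "-2")]
    elif direction == "after":
        window = [("from", pivot), ("limit", "2")]
    else:
        raise ValueError("direction must be 'before' or 'after'")
    params = [("url", url)] + window + [
        ("output", "json"),
        ("matchType", "exact"),
        ("filter", "statuscode:(200|203|206)"),
    ]
    return CDX_ENDPOINT + "?" + urlencode(params)

def parse_cdx(body):
    """Turn CDX JSON output into a list of dicts; "[]" yields no captures."""
    rows = json.loads(body)
    if not rows:
        return []
    header, *captures = rows
    return [dict(zip(header, row)) for row in captures]
```

Note that `urlencode` percent-encodes the target URL and the filter expression, so the generated query string looks different from the hand-written URLs above while carrying the same parameters.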

- That code you looked at is outdated. You may benefit from this, which is the current deployment of Cyberbot. It had been using the CDX server for some time, but a fairly recent update, encouraged by IA to enhance performance and reduce server stress on their end, changed the API back to a newer version of the availability API. Have a look.— cyberpower Chat:Online 21:14, 29 February 2016 (UTC)

- That helps. Very different. I'll study that. -- Green C 21:29, 29 February 2016 (UTC)

Not helping here

I don't know what the expected result should be, but https://en.wikipedia.org/?title=Sidemount_diving&oldid=707596914 does not seem to have been a useful edit. • • • Peter (Southwood) (talk): 06:44, 1 March 2016 (UTC)

- I've contacted IA, as this no longer sounds like a Cyberbot bug. Cyberbot cannot possibly insert any archive URLs unless it gets them from IA or they are cached in its DB, and even that requires an initial response from IA.— cyberpower Chat:Online 20:23, 1 March 2016 (UTC)

IABot feature

Hi Cyberpower678. I have noticed that IABot does not preserve section marks (#) in URLs, e.g. in this edit the "#before", "#f1" and "#after" fragments present in the original URLs were not carried over into the archive URLs. Not sure if this is intentional or not. Regards. DH85868993 ( talk) 06:02, 2 March 2016 (UTC)

- Cyberbot uses the data that is returned by IA's API, so the section anchors are lost in the API. I'm hesitant to have the bot manually format them back in, as that can introduce broken links, and I'm already working on fixing these problems with the current setup.— cyberpower Chat:Offline 06:06, 2 March 2016 (UTC)

- No worries. Thanks. DH85868993 ( talk) 06:10, 2 March 2016 (UTC)
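For illustration only, carrying a section anchor over from the original URL to the archive URL could be done as in the Python sketch below. The reply above explains why the bot deliberately does not do this, so this is a hypothetical helper (`carry_over_fragment`), not a description of IABot's behaviour, and it cannot verify that the anchor still exists in the archived copy.

```python
from urllib.parse import urldefrag

def carry_over_fragment(original_url, archive_url):
    """Append the original URL's #fragment to the archive URL.

    The archive URL is returned unchanged if the original has no fragment
    or if the archive URL already carries one of its own.
    """
    fragment = urldefrag(original_url).fragment
    base, existing = urldefrag(archive_url)
    if not fragment or existing:
        return archive_url
    return base + "#" + fragment
```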

chapter-url confusion?

I don't know that this was due to the use of "chapter-url", but this edit doesn't seem helpful: the entire citation was reduced to "{{cite}}". Nitpicking polish ( talk) 14:56, 2 March 2016 (UTC)

- Well...that's a new one.— cyberpower Chat:Online 15:34, 2 March 2016 (UTC)

Soft 404s are making your web archiving useless

Cyber, there are currently over 90,000 pages in Category:Articles with unchecked bot-modified external links and I'm finding that in more than half the cases, you are linking to a webarchive of a soft 404. Because of this, I don't think this bot task is helping, and it's probably best to turn it off until you can find some way to detect this. Oiyarbepsy ( talk) 05:38, 3 March 2016 (UTC)

I just did a random check of 10 pages and found Cyberbot correctly archived in 7 cases, and an error with the bot or IA API in 3 cases. The IA API may be at fault here. It's often returning a valid URL when it shouldn't be ( case1, case2, case3). I tend to agree that 90,000 pages will take many years, if ever, to clean up manually (these are probably articles no one is actively watching/maintaining). (BTW I disagree that the bot is "useless": link rot is a semi-manual process to fix, the problem is fiendishly difficult and pressing, and there is wide consensus for a solution.) -- Green C 15:04, 3 March 2016 (UTC)
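A soft 404 is a page that is served with a success status but whose content is an error message, so status codes alone cannot catch it. One crude heuristic, sketched here in Python, is to inspect the archived page's title for error wording. The phrase list and the function name `looks_like_soft_404` are assumptions made for illustration; a real checker would need far more than this and would still produce false positives and negatives.

```python
import re

# Hypothetical phrase list; real error pages vary widely.
ERROR_PHRASES = ("404", "not found", "can't find the page", "page cannot be")

def looks_like_soft_404(html):
    """Heuristically flag a page whose <title> reads like an error page."""
    match = re.search(r"<title[^>]*>(.*?)</title>", html, re.IGNORECASE | re.DOTALL)
    if match is None:
        return False  # no title to judge by; leave the decision to a human
    title = match.group(1).lower()
    return any(phrase in title for phrase in ERROR_PHRASES)
```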

What happened here?

[1] ~ Kvng ( talk) 05:03, 3 March 2016 (UTC)

- Probably related to 2 sections above.— cyberpower Chat:Limited Access 17:32, 3 March 2016 (UTC)

I tagged 10 dead links on Whoopi Goldberg and Cyberbot II fixed 7 of them

Hi Cyberpower678. Using Checklinks I uncovered 11 dead links on the Whoopi Goldberg article. I was able to find an archive version for 1 of them and "fixed" it. I tagged 10 as being dead (not fixable by me). Cyberbot II fixed 7 that I tagged as dead and unfixable. That leaves 3 still broken (reference #30, #72, and #73). I checked them by hand. I also checked them again with Checklinks and Checklinks reported the same 3 as being dead. How did Cyberbot find those seven archived versions? Ping me back. Cheers! {{u|Checkingfax}} {Talk} 06:22, 4 March 2016 (UTC)

- It makes advanced calls to the API. I'm not sure what check links does, but I do know Cyberbot has become more advanced than check links in some areas, and can at some point replace check links when I have Cyberbot's control interface up and running.— cyberpower Chat:Limited Access 18:20, 4 March 2016 (UTC)

Cyberbot II will not stop "rescuing" a source

Hello, on the article MythBusters (2013 season), Cyberbot II keeps on trying to "rescue" a source with an archived URL. The archived URL actually is broken (it seems to be an empty page), and does not contain the material that is referenced. I have tried reverting this change, but Cyberbot II keeps on adding the same broken archived URL back. Could you please stop the bot from adding back the archived URL which I keep on trying to revert? Thanks. Secret Agent Julio ( talk) 13:34, 6 March 2016 (UTC) — Preceding unsigned comment added by Secret Agent Julio (alt) ( talk • contribs)

404 Checker

http://404checker.com/404-checker

I ran a test with this URL recently added to Talk:JeemTV by Cyberbot - the 404 checker correctly determines it's a 404. Running IA API results through this might cut down on the number of false positives. I have not done in depth testing. Likely there are other similar tools available. -- Green C 18:03, 4 March 2016 (UTC)

- We are working on Cyberbot's own checker; however, the API results should already be filtering this out. As you said, there's most likely an API error that needs to be fixed, or a hidden Cyberbot bug I have not yet found. I'm not going to fix problems by masking the symptoms, but by fixing the problem at the source. Doing that will keep the bot running smoothly, and the code maintainable and readable.— cyberpower Chat:Limited Access 18:15, 4 March 2016 (UTC)

- Glad to hear. I agree ideally it should be fixed at IA. They have a long todo list, new database Elasticsearch to wrangle, new website to roll out (March 15), recent outages etc. A safeguard secondary check would provide peace of mind IA is giving good data. Is there a plan to revisit Cyberbot's existing work, once the bugs are identified? Thanks. -- Green C 18:27, 4 March 2016 (UTC)

- Unfortunately no. There is no plan. Also, secondary checks cost time and resources, which is something that doesn't need to be spent if the 404 bug is fixed, but Cyberbot cannot tell what it edited already. In the long run, when I have the interface set up, I could possibly compile and feed Cyberbot a list of articles to look at and tell it to touch all links, even those with an archive. That will forcibly change broken archives into either a dead tag or a new working archive, but that's a bit away from now. The priority is to get the current code base stable, reliable, and modular, so Cyberbot can run on multiple wikis.— cyberpower Chat:Online 21:22, 4 March 2016 (UTC)

- You have the right priority of software development, looking forward. Perhaps I could make it my concern to fix known incorrect edits made to articles, looking backwards. If there is a list of known problems introduced (like the 404, blank archiveurl argument, etc), I might be able to code something that addresses some of these, sooner rather than later. I would process on a local server and post updates via AWB. Finding CB's past edits should be possible using the API. Let me know what you think. -- Green C 02:06, 5 March 2016 (UTC)

- Started this project and have made good progress; in a day or two I will begin manual test runs with AWB. It will find and fix (some) response code errors, blank archiveurl's, etc. I have established a list of unique articles modified by IABot up to March 4 (~90k). -- Green C 13:51, 6 March 2016 (UTC)

Question

Is there any way to direct your bot to a set of articles, like Final Fantasy ones, or the ones of the Wikiproject: Square Enix? It seems like an amazing tool, and would save so much time checking and archiving links for the articles we watch. Let me know if there is a way to request its attention. :) Judgesurreal777 ( talk) 05:49, 6 March 2016 (UTC)

- Not at the moment. It's something that will be added in the foreseeable future.— cyberpower Chat:Online 16:39, 6 March 2016 (UTC)

Hey!

Cyberpower678! I haven't heard from you in awhile, man! What have you been up to? You going MIA on me? :-) ~Oshwah~ (talk) (contribs) 08:56, 6 March 2016 (UTC)

- Not going MIA, I've been focused on college and bot work in the last few weeks.— cyberpower Chat:Online 16:39, 6 March 2016 (UTC)

Dead link

Hi cyberpower678, I have a problem which you may be able to help me with. I have created hundreds of (mostly stub) articles about the minor populated places in Turkey. My main source is the Statistical Institute, a reliable governmental institute. However, each year they change their link address and I am forced to change the link accordingly. Finally I gave up, and most of the articles are now pointing to dead links. After seeing your (or rather Cyberbot II's) contribution to Karacakılavuz, I realized that even dead links can be retrieved from archives. But I don't know how. Can you please help me? Thanks Nedim Ardoğa ( talk) 18:45, 6 March 2016 (UTC)

- Why not create a template instead that generates the URL when the template is given parameters? That way, when they update the addresses, all you have to do is update the template.— cyberpower Chat:Online 18:49, 6 March 2016 (UTC)

Love Cyberbot II

After I spent a day updating a gazillion references on List of Falcon 9 and Falcon Heavy launches, your bot found an archive version of an important reference. Wonderful assistance, many thanks for creating it! — JFG talk 08:33, 7 March 2016 (UTC)

Bug report

- There are different results between these two URLs:

- The Wayback Machine may require a "web" prefix. See this edit. -- Green C 17:54, 7 March 2016 (UTC)

Sources removed

here - there were four, the bot left it with just one. -- Redrose64 ( talk) 23:34, 25 February 2016 (UTC)

- There shouldn't be more than one cite in a ref though. Fixing this would demand a major structural change of the code.— cyberpower Chat:Online 23:37, 25 February 2016 (UTC)

- It would practically be a rewrite.— cyberpower Chat:Online 23:39, 25 February 2016 (UTC)

- WP:CITEBUNDLE permits more than one inside a single <ref>...</ref> pair. -- Redrose64 ( talk) 10:53, 26 February 2016 (UTC)

- I cleaned this up by hand and am just wondering if the bot could ignore <ref> calls and just look for uses of {{Cite}}? That would eliminate limiting the number of uses of {{Cite}} within a <ref> ... </ref> pair. Just a suggestion, and maybe won't require a rewrite!

- Please use a notifier such as {{U|D'Ranged 1}} to reply to me—I'm not watching your talk page due to its high traffic! Thanks!— D'Ranged 1 | VTalk : 18:55, 26 February 2016 (UTC)

- Not a good compromise. Many dead refs have the tag right outside of the </ref> tag. Your suggestion will end up having the bot ignore many references. The majority of dead sources are references. This will ultimately defeat the purpose of this bot.

- Well shit, this will be one pain in the ass to fix. This will take some time to get a proper fix in.—

cyberpower

Chat:Limited Access

03:28, 27 February 2016 (UTC)

- Alright, I think I'm ready do a test of the new code. I've made various improvements including not mangling miscellaneous text inside the reference.—

cyberpower

Chat:Limited Access

17:18, 7 March 2016 (UTC)

-

Fixed per

[2].—

cyberpower

Chat:Limited Access

18:55, 7 March 2016 (UTC)

Farallon Islands

This edit didn't go so well.

— Trappist the monk ( talk) 01:57, 29 February 2016 (UTC)

- What's wrong with the edit?— cyberpower Chat:Limited Access 02:36, 29 February 2016 (UTC)

- The original URL has "|" symbols which are not URL encoded. It worked as a bare URL but when imported into cite web it breaks the template which requires special characters to be encoded. I think this might do it in enwiki.php line 131:

- $link['newdata']['link_template']['parameters']['url'] = urlencode( $link[$link['link_type']]['url'] );

-- Green C 02:59, 29 February 2016 (UTC)

- Ah I see. Green Cardamom, I didn't know you understood Cyberbot's code so well. Nice one. :D— cyberpower Chat:Limited Access 03:29, 29 February 2016 (UTC)

- Thanks :) I tried to figure out the one two posts up with no luck, but this one was easier. BTW I don't think IA can archive books.google.* as Google has software to prevent capture for copyright reasons. It's unusual for books.google to be dead (the one below is not actually dead). And Google has a robots.txt, so more recent captures won't exist. It might be safe for CB to ignore books.google.* .. there is a separate project to migrate Google PD books to archive.org called BUB, so eventually many links to Google Books can be saved/replaced that way. -- Green C 04:47, 29 February 2016 (UTC)

- If that's the case, the {{ cite book}} template can be removed from the list of citation templates to handle. I simply added all the templates that have a URL parameter. But I may have added some where the sites used are simply impossible to archive and use.— cyberpower Chat:Offline 05:27, 29 February 2016 (UTC)

- I would say so. It will not always be Google Books. If possible, blacklist Google during a cite book, otherwise yes, the whole template. -- Green C 15:31, 29 February 2016 (UTC)

- I would advise against actually blacklisting any sites. That makes for an impossible-to-maintain list. I'd say remove the common cause of the problem, which means you can go ahead and remove cite book from the list if you feel it is appropriate to do so. Cyberbot will apply changes after it completes its batch of 5000 articles.— cyberpower Chat:Online 15:45, 29 February 2016 (UTC)

- Done. -- Green C 15:54, 29 February 2016 (UTC)

- I have now implemented Green's coding suggestion to fix this bug.— cyberpower Chat:Online 19:39, 7 March 2016 (UTC)
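The encoding issue discussed in this thread can be sketched as follows. This is a minimal illustration in Python rather than the bot's PHP, and the character table and function name are assumptions made for the example, not Cyberbot's actual code. It encodes only the characters that would break a template parameter, because PHP's urlencode() applied to a whole URL also encodes ":" and "/".

```python
# Characters with special meaning inside a wiki template call.
# This table is an assumption for illustration, not the bot's actual list.
TEMPLATE_UNSAFE = {
    "|": "%7C",
    "{": "%7B",
    "}": "%7D",
    "[": "%5B",
    "]": "%5D",
}


def encode_for_template(url: str) -> str:
    """Percent-encode only the characters that would break a template parameter."""
    return "".join(TEMPLATE_UNSAFE.get(ch, ch) for ch in url)


if __name__ == "__main__":
    # Hypothetical URL containing unencoded pipes, as in the report above.
    print(encode_for_template("http://example.org/search?q=a|b|c"))
```

Leaving the scheme and path separators untouched keeps the value usable as a link once it is placed in the url parameter of a cite template.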

Deleting references

[3]. When there is more than one citation template in a ref, it deletes all but one of the cites. As a quick fix, due to problems with these cases, can it avoid/skip these cases for the moment? Such as, if there is more than one "http" inside a reference, skip it - it can always come back later when the code is updated to handle multiple refs. Otherwise it seems to be doing some damage. -- Green C 17:52, 1 March 2016 (UTC)

- Another related problem. [4] In the second edit, it removed two {{ dead}} but only fixed one due to multiple cite web templates inside a single ref. -- Green C 17:56, 1 March 2016 (UTC)

- I'm already working on an update for that. I'm restructuring some of the code to handle multiple sources in a ref, as well as enhance string generation.— cyberpower Chat:Online 19:47, 1 March 2016 (UTC)

- Ok good luck. Seems like a hard problem. Wide variety of ways multiple cites can be included/delineated. -- Green C 20:16, 1 March 2016 (UTC)

- I know, practically a rewrite in some areas. I've been focused on this specific issue for the past 3 days.— cyberpower Chat:Online 20:21, 1 March 2016 (UTC)

- I bet. I wouldn't know how to go about it. There could be multiple URLs inside a single cite, with multiple cites inside a single ref, with unknown delineations between them! Some might use "," or <br> or "*" or .. it's nearly impossible to guess ahead of time. Maybe it's possible to regex a database dump to extract all bundled cites into a work file, then see if there is a way to unpack them all algorithmically into individual cites, and for those that fail, refine the algorithm until they all pass. Then use that algorithm in the bot. That way the bot can be sure it won't run into anything unexpected in the wild. But maybe you have this part solved already. -- Green C 21:39, 1 March 2016 (UTC)

- There are definitely no multiple URLs in a single cite, because that would break the cite template. For detecting the contents of the reference and all citations and bare links, I have this problem solved already. I'm more or less 80% done with the update.— cyberpower Chat:Online 21:41, 1 March 2016 (UTC)

- Is there a testcases page where we can devise possible refs to see how the bot might respond? That could also help to ensure new code doesn't break formerly working testcases. -- Green C 02:01, 2 March 2016 (UTC)

- There is, but I can definitely guarantee that existing test cases won't break.— cyberpower Chat:Limited Access 02:04, 2 March 2016 (UTC)

- Fixed per [5]— cyberpower Chat:Online 20:52, 7 March 2016 (UTC)
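The stop-gap proposed at the top of this thread (skip any reference that contains more than one "http") can be sketched roughly as below. It is written in Python for illustration only; the regular expressions and the function name are assumptions, not what the bot does.

```python
import re

# Match an opening <ref ...> that is not self-closing, up to its </ref>.
REF_RE = re.compile(r"<ref(?:\s[^>]*)?(?<!/)>(.*?)</ref>", re.DOTALL | re.IGNORECASE)
URL_RE = re.compile(r"https?://", re.IGNORECASE)


def refs_to_skip(wikitext: str) -> list:
    """Return the bodies of <ref> pairs that contain more than one URL."""
    return [
        body
        for body in REF_RE.findall(wikitext)
        if len(URL_RE.findall(body)) > 1
    ]


if __name__ == "__main__":
    sample = (
        "A<ref>{{cite web|url=http://a.example/1}}</ref> "
        "B<ref>{{cite web|url=http://a.example/2}}; "
        "{{cite web|url=https://b.example/3}}</ref>"
    )
    for body in refs_to_skip(sample):
        print("would skip:", body)
```

Note that a reference whose single cite already carries an archive URL also counts as two URLs under this rule, so the heuristic errs on the side of skipping.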

I'M SORRY!!!

In case anyone noticed this, I am truly sorry. I was doing some controlled tests and clicked the run button without realizing it. Next thing you know Cyberbot was making a mess. You may trout me if you wish. But I am sorry.— cyberpower Chat:Online 00:34, 8 March 2016 (UTC)

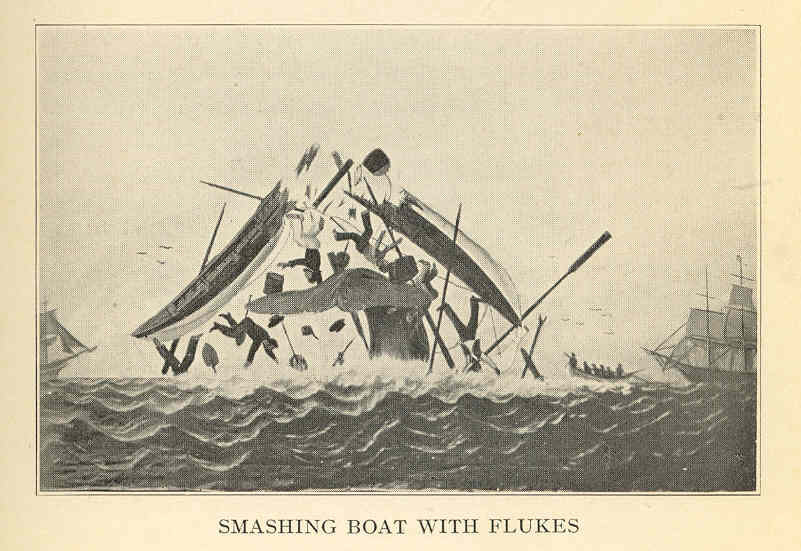

- Oh, alright. Smash! You've been squished by a whale! Don't take this too seriously. Someone just wants to let you know you did something really silly.

- There, hopefully a lesson has been learned. tutterMouse ( talk) 07:39, 8 March 2016 (UTC)

- It was a fluke, you say?

Other templates removed upon archive link addition

As an FYI, other templates that are located between the citation template and the {{ dead link}} get removed when Cyberbot adds the archive link to a reference. See this for example, the {{ self-published source}} template between the cited dead link and the {{ dead link}} was removed; I wouldn't be surprised if other templates get removed upon the bot edit. Thanks — Mr. Matté ( Talk/ Contrib) 17:45, 3 March 2016 (UTC)

- Fixed per [6]— cyberpower Chat:Limited Access 16:20, 8 March 2016 (UTC)

Cyberbot II edit on Grabby Awards

|archiveurl=archive.org/web/20050603234403/http://www.grabbys.com/a1.grab.04.5.winners.html should have been |archiveurl=https://web.archive.org/web/20050603234403/http://www.grabbys.com/a1.grab.04.5.winners.html

The bot left off the https://web. at the front of the URL; probably something worth checking / testing.

Thanks for your hard work in cleaning up! Much appreciated!

Please use a notifier such as {{U|D'Ranged 1}} to reply to me—I'm not watching your talk page due to its high traffic!

Thanks!— D'Ranged 1 | VTalk : 18:22, 26 February 2016 (UTC)

- /facedesk I thought I fixed this several times several whiles ago. :|— cyberpower Chat:Limited Access 03:54, 27 February 2016 (UTC)

- I wrote some redundancy checks into the link analyzer to fix mangled URLs. I'll have to do a DB purge for it to work, however, as the DB is riddled with bad links. In short, this is Fixed— cyberpower Chat:Online 14:08, 9 March 2016 (UTC)
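A redundancy check of the kind described here might look like the sketch below. It is a Python illustration under stated assumptions: the pattern, the accepted input variants (missing scheme, missing "web." host prefix, missing "web/" path segment) and the function name are all hypothetical, not the link analyzer's real logic.

```python
import re
from typing import Optional

# Accepts Wayback-style URLs with or without scheme, "web." host prefix,
# or "web/" path segment. The set of tolerated variants is an assumption.
_WAYBACK_RE = re.compile(
    r"^(?:https?://)?(?:web\.|www\.)?archive\.org/(?:web/)?(\d{4,14})/(.+)$",
    re.IGNORECASE,
)


def normalize_wayback_url(url: str) -> Optional[str]:
    """Return the canonical https://web.archive.org/web/... form, or None."""
    match = _WAYBACK_RE.match(url.strip())
    if match is None:
        return None  # not recognisable as a Wayback snapshot URL
    timestamp, original = match.groups()
    return f"https://web.archive.org/web/{timestamp}/{original}"


if __name__ == "__main__":
    mangled = "archive.org/web/20050603234403/http://www.grabbys.com/a1.grab.04.5.winners.html"
    print(normalize_wayback_url(mangled))
```

Returning None for anything unrecognised lets the caller leave such links alone instead of guessing.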

Robots and dead dead links

I'm seeing 404 problems in archive URLs that were not created by Cyberbot; they are older, added by other bots or manually. The problem is this: Wayback will archive any web site that doesn't have a robots.txt disallowing it. However, if a website retroactively adds a robots.txt, then Wayback will delete all its archives(!). This means, yes, dead links to archive.org. My bot will pick some of these up, remove them, and add cbignore, but it's not designed to look at every case nor to run continuously like Cyberbot. Something to consider for the future: we will need another bot that verifies the archive URLs are still active (though it only needs to check occasionally, once a year or something). -- Green C 17:14, 9 March 2016 (UTC)

- How do you know this?— cyberpower Chat:Limited Access 17:15, 9 March 2016 (UTC)

- It was documented on their website (it's been a while since I looked so can't point to it right now). You can ask IA to verify but all evidence points to it. And how else would they handle cases where owners want their archives removed from IA such as copyright content (news sites etc). -- Green C 17:21, 9 March 2016 (UTC)
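The occasional verification pass suggested above could be sketched along these lines. This is a Python illustration only: the function names are hypothetical, and treating 403 and 404 as "archive gone" is an assumption drawn from the status codes mentioned in these threads.

```python
import urllib.error
import urllib.request
from typing import Callable, Iterable, List


def http_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status for a HEAD request (network errors propagate)."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code  # 4xx and 5xx arrive as exceptions


def find_dead_archives(
    archive_urls: Iterable[str],
    fetch_status: Callable[[str], int] = http_status,
) -> List[str]:
    """List archive URLs that no longer resolve to a snapshot."""
    dead = []
    for url in archive_urls:
        # Assumption: 403 (blocked by robots) and 404 both mean the
        # snapshot is no longer served.
        if fetch_status(url) in (403, 404):
            dead.append(url)
    return dead
```

Passing the status function in as a parameter keeps the check testable without network access; a yearly run would call find_dead_archives with the default fetcher.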

Cyberbot deleted ref content

In this edit, Cyberbot II mangled a ref while fixing a dead link on the ref's cite. Search for Charles Lu. The ref consisted of some intro text followed by a cite like this:

- <ref>Some introductory text. {{cite ...}}</ref>

and the bot edit deleted the intro text from the cite. While this style of ref may be unusual I believe it is perfectly acceptable and should not have been mangled. IMO when fixing a dead link contained in a cite template the bot has no business touching anything outside the bounds of the {{cite ...}}.

TuxLibNit ( talk) 21:18, 29 February 2016 (UTC)

- I'm already working on an update to fix this as well as other issues related to formatting edits. Also Cyberbot needs to remove the dead tag, which is outside of the cite template, and sometimes outside of the ref itself.— cyberpower Chat:Online 21:25, 29 February 2016 (UTC)

- Fixed per [7]— cyberpower Chat:Limited Access 18:14, 8 March 2016 (UTC)

- Thanks for the fix. TuxLibNit ( talk) 23:18, 9 March 2016 (UTC)
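The principle raised in this thread, that an edit should stay within the bounds of the {{cite ...}} template, can be sketched as below. This is a Python illustration with hypothetical function names; it is not the fix that was actually committed, and it only handles the first cite template in a reference.

```python
def find_template_end(text: str, start: int) -> int:
    """Given the index of an opening '{{', return the index just past its
    matching '}}', allowing for nested templates. Returns -1 if unbalanced."""
    depth = 0
    i = start
    while i < len(text) - 1:
        pair = text[i:i + 2]
        if pair == "{{":
            depth += 1
            i += 2
        elif pair == "}}":
            depth -= 1
            i += 2
            if depth == 0:
                return i
        else:
            i += 1
    return -1


def add_archive_param(ref: str, archive_url: str) -> str:
    """Append |archiveurl= inside the first cite template; leave all text
    outside the template byte-for-byte unchanged."""
    start = ref.lower().find("{{cite")
    if start == -1:
        return ref
    end = find_template_end(ref, start)
    if end == -1:
        return ref  # unbalanced braces: safer not to touch the ref
    template = ref[start:end]
    updated = template[:-2].rstrip() + " |archiveurl=" + archive_url + "}}"
    return ref[:start] + updated + ref[end:]
```

Because the result is assembled from the untouched prefix, the rewritten template and the untouched suffix, introductory text such as "Some introductory text." survives the edit.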


404 waybacks

Isn't the bot supposed to check that the wayback page is valid? All five of these were bad: [8] Kendall-K1 ( talk) 22:08, 9 March 2016 (UTC)

- Hello, I'm not the author of Cyberbot but FYI I'm working on another bot to fix these cases. It will hopefully correct them soon. Going forward Cyberbot should be doing a better job, as it was caused by a bug at IA, we believe. -- Green C 22:50, 9 March 2016 (UTC)

- As of yesterday, that is a stale bug. IA has fixed a bug that caused the bot to report bad archives. Also please do not use the nobots tag so hastily. That tag is a last resort, if Cyberbot is repeatedly proving to be useless for the page.— cyberpower Chat:Online 23:58, 9 March 2016 (UTC)

- Could you please fix the wording on the talk page template? Right now it just says "If necessary, add {{ cbignore}} after the link to keep me from modifying it. Alternatively, you can add {{ nobots|deny=InternetArchiveBot}} to keep me off the page altogether." Thanks. Kendall-K1 ( talk) 00:26, 10 March 2016 (UTC)

Wayback bug?

The API is sometimes returning pages blocked by robots (code 403) when using &timestamp. Example from Environmental threats to the Great Barrier Reef:

- Without timestamp: https://archive.org/wayback/available?url=http://www.amsa.gov.au/shipping%5Fsafety/great%5Fbarrier%5Freef%5Freview/gbr%5Freview%5Freport/contents%5Fhtml%5Fversion.asp

-- Green C 03:46, 10 March 2016 (UTC)
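A defensive way to consume the availability endpoint quoted above is sketched here in Python. The helper names are hypothetical, and the response shape (an "archived_snapshots" object with a "closest" entry carrying "available", "status" and "url") is assumed from the API's published examples; the sketch rejects any snapshot whose recorded status is not "200", which would filter out the 403 case reported in this thread.

```python
import json
from typing import Optional
from urllib.parse import urlencode

API = "https://archive.org/wayback/available"


def build_query(url: str, timestamp: Optional[str] = None) -> str:
    """Build an availability query; timestamp is YYYYMMDD[hhmmss] if given."""
    params = {"url": url}
    if timestamp:
        params["timestamp"] = timestamp
    return API + "?" + urlencode(params)


def usable_snapshot(response_text: str) -> Optional[str]:
    """Return the snapshot URL only if it is available with status 200."""
    data = json.loads(response_text)
    closest = data.get("archived_snapshots", {}).get("closest")
    if not closest:
        return None
    if not closest.get("available") or closest.get("status") != "200":
        return None  # e.g. a capture recorded as 403 or 404
    return closest.get("url")
```

Checking the status field on the caller's side means a misbehaving API response degrades to "no archive found" rather than to a bad archive link.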

Oh look, a kitteh for Cybepower678!!!

thank you for the protection on my proposed article Brandun DeShay ^_^

Yleonmgnt ( talk) 06:48, 10 March 2016 (UTC)

Cyberbot tried to fix a dead url within comment tags

Hello. With this edit, Cyberbot picked up a dead link from within <!-- --> tags and proceeded to try and fix the associated url. Why it was like that is, the ref went dead, I found an alternative that covered most but not all of the cited content, so added that ref and left the original one commented out together with a note about what wasn't covered by the replacement. I'm wondering whether the bot should in general respect comment tags, or whether that situation's just too unusual to bother about? cheers, Struway2 ( talk) 09:50, 10 March 2016 (UTC)

- It used to respect comment tags by filtering them out of the page text before processing the page. However, because comments are also found inside references themselves, Cyberbot would take the filtered reference (a reference with the comments filtered out), process it, and then try to replace the filtered reference with an updated filtered reference. The problem is that, in a simple string search and replace, the filtered reference doesn't exist in the page text, only the original with comments in it, so the replace function fails, leaving several sources unaltered. I found that handling the page with comments in place benefits articles more than filtering the comments out. As such, Cyberbot has now made many more changes to links with comments in them. Comments in general don't have anything the bot would normally want to touch, so I would classify this as a rare enough occurrence that it can be safely ignored. It shouldn't wreck a comment in general to begin with.— cyberpower Chat:Limited Access 16:02, 10 March 2016 (UTC)

- Thank you for a thoughtful reply. cheers, Struway2 ( talk) 16:27, 10 March 2016 (UTC)
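The search-and-replace failure described in the reply above can be reproduced with a small Python sketch. The sample page text, the edit function and the helper names are invented for illustration and are not taken from the bot.

```python
import re

COMMENT_RE = re.compile(r"<!--.*?-->", re.DOTALL)


def strip_comments(text: str) -> str:
    """Remove HTML comments from a piece of wikitext."""
    return COMMENT_RE.sub("", text)


def add_archive(ref: str) -> str:
    """Stand-in for the bot's edit: add an archive parameter to the cite."""
    return ref.replace(
        "}}", " |archiveurl=https://web.archive.org/web/2016/http://example.org/a}}", 1
    )


def replace_via_filtered(page: str, original_ref: str) -> str:
    """Old approach: search the page for the comment-free reference."""
    filtered = strip_comments(original_ref)
    return page.replace(filtered, add_archive(filtered))


def replace_via_original(page: str, original_ref: str) -> str:
    """Newer approach: work on the reference exactly as it appears."""
    return page.replace(original_ref, add_archive(original_ref))


ORIGINAL_REF = "<ref>{{cite web|url=http://example.org/a}}<!-- checked 2015 --></ref>"
PAGE = "Intro." + ORIGINAL_REF + " More text."
```

With these inputs, replace_via_filtered returns the page unchanged because the comment-free string never occurs in it, while replace_via_original applies the edit and keeps the comment. The trade-off noted in the reply is that the second approach can also touch links that sit inside comments.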

Thanks for adding wayback links. One appears to go to an irrelevant page, and I was not sure what to do about it, so I marked it as a dead link. Dudley Miles ( talk) 10:40, 26 February 2016 (UTC)

- It would be a better idea to replace the link with something that actually works.— cyberpower Chat:Limited Access 03:51, 27 February 2016 (UTC)

Talk page check true/failed

The talk page message instructs:

- When you have finished reviewing my changes, please set the checked parameter below to true to let others know.

Wouldn't it say "..to true or failed"? Or an inline comment next to the sourcecheck line giving more detail on options. -- Green C 18:36, 26 February 2016 (UTC)

- I would prefer it to just leave the checkmark and say that "Archived sources have been checked", quite frankly. If they fail, they should be removed, and even though checked, the outcome isn't always "to be working". Additionally, on one such edit, one of the added archives was broken but correctable, the other was useless. Rather than "working" or "failed", "checked" is quite sufficient.

- Please use a notifier such as {{U|D'Ranged 1}} to reply to me—I'm not watching your talk page due to its high traffic! Thanks!— D'Ranged 1 | VTalk : 18:54, 26 February 2016 (UTC)

- That's not how it's set up. You are supposed to set it to "failed"; see the {{ sourcecheck}} template for how it works and the talk page for further discussion. The reason for this, I assume, is so the bot (and bot maker) can learn from mistakes. I agree there is confusion when some work and some fail, but that's fixable with correct wording. -- Green C 19:18, 26 February 2016 (UTC)

- Actually I don't really do anything with them. It was put there at the request of some editors, so they can commit to checking the bot's edits. It serves to benefit other editors to let them know that someone checked the newest archives already. The bot's methods for retrieving a working archive are about as optimized as they're going to get at this point, and while its accuracy for working archives is not 100%, which is impossible I might add, it's pretty high, roughly 95% based on random samples I surveyed. I'll leave this discussion open to let you guys decide how to proceed. Cyberbot's wording can be edited on wiki, on its configuration page.— cyberpower Chat:Offline 04:03, 27 February 2016 (UTC)

- I think the information on failed links would be useful to accumulate into a database. The bot could check if a link is there before adding it. Thus instead of editors having to check "failed" in multiple articles (assuming the same link is used in multiple articles) it would only need to be checked once, thus saving labor and improving accuracy. The database could also form the nucleus of a new bot in the future, such as checking other archives besides Internet Archive or some manual process. None of this has to be done right now, but at least we can ask editors to keep the information updated. I'll look into the wiki and sourcecheck template for wording. -- Green C 13:23, 27 February 2016 (UTC)

- An example of a mix of true/failed: Talk:2006_Lebanon_War#External_links_modified. I also updated the talk page message but I guess the bot has to be restarted for it to be loaded. -- Green C 14:07, 27 February 2016 (UTC)

- Actually Cyberbot is already accumulating an extensive DB with details about the links, including a reviewed field. The aim is to build an interface on that so users can review what Cyberbot has done, fix, and call Cyberbot on selected articles.— cyberpower Chat:Limited Access 16:52, 27 February 2016 (UTC)
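The shared record of reviewed archives discussed in this thread could be sketched as below, using Python's sqlite3 module. The table name, column names and status values are assumptions made for the example; the schema of Cyberbot's actual DB is not described here.

```python
import sqlite3


def open_db(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a small store of reviewed archive URLs."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS reviewed_archives ("
        "archive_url TEXT PRIMARY KEY, status TEXT NOT NULL)"
    )
    return conn


def record_review(conn: sqlite3.Connection, archive_url: str, status: str) -> None:
    """Store an editor's verdict, e.g. 'true' or 'failed'."""
    conn.execute(
        "INSERT OR REPLACE INTO reviewed_archives (archive_url, status) VALUES (?, ?)",
        (archive_url, status),
    )
    conn.commit()


def is_known_failure(conn: sqlite3.Connection, archive_url: str) -> bool:
    """True if some editor has already marked this archive as failed."""
    row = conn.execute(
        "SELECT status FROM reviewed_archives WHERE archive_url = ?",
        (archive_url,),
    ).fetchone()
    return row is not None and row[0] == "failed"
```

A bot consulting is_known_failure before adding an archive would need each bad archive to be flagged only once, however many articles use the same link.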

A barnstar for you!

| The Original Barnstar |

| Thank you for editing the "nurburgring lap times" page. i think i submitted a contribution there, but it seems that nobody has approved it. could you do that please? sorry, i am new to this. Gixxbit ( talk) 15:20, 27 February 2016 (UTC) |

- I'm sorry, I haven't edited that page.— cyberpower Chat:Limited Access 17:01, 27 February 2016 (UTC)

Way to get the bot to run on an article?

What are the ways to get this bot to run on an article? Thanks! — Lentower ( talk) 02:14, 25 February 2016 (UTC)

- Not at current.— cyberpower Chat:Limited Access 02:15, 25 February 2016 (UTC)

- Thanks for replying. — Lentower ( talk) 17:28, 27 February 2016 (UTC)

Additional option(s) would be helpful

I think I mentioned before, but worth mentioning again, how happy I am to see this bot running. It is salvaging a lot of dead links, and that's a great thing.

I have noticed one nit, not a big deal, but I don't have a good solution, and hope you can consider implementing something. The short statement of the problem is that the template left on the talk page has two options for the “checked parameter” and there are other options.

At present, the template is posted with the parameter set to false, which generates the following text string:

Archived sources still need to be checked

That's fine, but if you check, the only other option is to change it to true which generates:

Archived sources have been checked to be working

What do I do if I check and they are not OK? Neither option works. As an additional complication, the template often covers more than one reference, and the answers may be different.

There are other cases:

- I recently checked one archived link, identified in Talk:Charli_Turner_Thorne, concluded that the archive was done correctly, but the archive existed because USA basketball changed their naming schema, and I would prefer to use the corrected live link, rather than the Internet Archive link. I could technically say true, as I did check, and it was fine, but that doesn't convey the fact that I changed the link.

- There were two links, I checked one, it was fine, but did not check the other.

- There were two links, I checked both, one was fine, and replaced the other one with a different source

- There was one link, it was not fine, so I tracked down a working link.

In each case, I am setting the parameter to true, and adding an explanation, but I don't really like the fact that it says in big bold type that the checked links are working.

I think working out all the possible cases is overkill, but I would like three cases:

- Not yet checked

- Checked and all are fine

- Some or all checked, but some or all not fine, read on for full explanation:

Option three could use some word-smithing.-- S Philbrick (Talk) 21:04, 31 January 2016 (UTC)

- See Template:Sourcecheck— cyberpower Chat:Online 21:07, 31 January 2016 (UTC)

- Thanks, that helps.-- S Philbrick (Talk) 21:55, 31 January 2016 (UTC)

Would it be possible to have the option of adding "|checked=failed" added to the instructions that are left on talk pages? I had no idea this was possible before reading this (I was coming to post something similar) and it's a fairly common outcome when I check the IABot links. Thanks for all of your work - Antepenultimate ( talk) 21:11, 27 February 2016 (UTC)

- See a few sections down.— cyberpower Chat:Offline 04:38, 28 February 2016 (UTC)

- Thanks for adding the additional guidance to the talk page instructions! Antepenultimate ( talk) 05:42, 28 February 2016 (UTC)

The external link which I modified (as a bad link) but which has since been restored, points to a general (advertising) holding page which is being used to host a variety of links to other sites relating to aircraft services, but which is too general to be of any use to someone searching for the specific type of aircraft the article is about. Therefore I made my edit. I suspect that the linked site was initially appropriate and relevant, but which has since become unmaintained; the site name has been retained, but is now being used to host advertising links. When you have had opportunity to examine that destination site, your observations on the matter would be welcome. -- Observer6 ( talk) 19:42, 25 February 2016 (UTC)

- Use your own discretion.— cyberpower Chat:Limited Access 03:25, 27 February 2016 (UTC)

I did use my own discretion when deleting the inappropriate (bad) link. Now that the inappropriate link has been restored from the archives by CyberbotII, I suspect that CyberbotII will repeat its former (automated?) 'restore' action if I delete the inappropriate link for a second time.

In the absence of third party recognition that my edit was appropriate, I will leave the article as it stands, for others to discover its inadequacy. Regards -- Observer6 ( talk) 20:02, 27 February 2016 (UTC)

- Cyberbot doesn't add links to articles. It attaches respective archives to existing links. If you are referring to the addition of a bad archive, you have two options. Find a better archive, or revert Cyberbot's edit to that link and add the {{ cbignore}} tag to it instead. That will instruct Cyberbot to quit messing with the link.— cyberpower Chat:Limited Access 20:15, 27 February 2016 (UTC)

I appreciate your help. There are several external 'Reference' links at the end of the article in question. The first link is useless. I removed it. CyberbotII reversed my action by restoring the bad link from archive. I do not know of a suitable alternative link. If I now just add {{ cbignore}}, Cyberbot will cease messing with the link, but that unsuitable link will remain. Having checked the poor reference several times, I am still convinced that it is totally unsuitable. Maybe I should change the bot's feedback msg from false to true, and delete the link again. Would this be the correct procedure? Or will this create havoc or start a never-ending cycle of my removals and Cyberbot's automatic restorations?

I've tried reverting Cyberbot's edit, removing the bad ref, and adding {{ cbignore}}. But the {{ cbignore}} tag now wants to appear on the page! I will suspend action until it is clear to me what I should do.-- Observer6 ( talk) 00:44, 28 February 2016 (UTC)

- I've looked and looked again. I see nothing of the sort here and I am utterly confused now. Cyberbot is operating within its scope of intended operation, I see no edit warring of any kind, nor is the bot arbitrarily adding links to the page. There's not much more I can do here.— cyberpower Chat:Offline 04:36, 28 February 2016 (UTC)

Thank you. Matter has been resolved by Green Cardamom-- Observer6 ( talk) 16:42, 28 February 2016 (UTC)

Please note that the tag was removed because the discussion was completed. I put the tag for deletion. Regards, -- Prof TPMS ( talk) 02:21, 29 February 2016 (UTC)

Sourcecheck's scope is running unchecked

I keep adding {{ cbignore}} and failed to your {{ Sourcecheck}} additions.

While the goal is laudable, the failure rate seems very high. Can you limit the scope of your bot please? I don't think there's much point even attempting to provide archive links for {{ Cite book}}, as it will rarely be useful. For instance:

- Revision 707318941 as of 04:16, 28 February 2016 by User:Cyberbot II on the 1981 South Africa rugby union tour page.

I am almost at the stage of placing {{ nobots|deny=InternetArchiveBot}} on all pages I have created or made major editing contributions to in order to be rid of these unhelpful edits. -- Ham105 ( talk) 03:40, 29 February 2016 (UTC)

- Is it just for cite book that it's failing a lot on?— cyberpower Chat:Limited Access 03:45, 29 February 2016 (UTC)

Cyberbot edit: ICalendar

Thanks for adding an archive-url! I set the deadurl tag to "no" again, because the original URL is still reachable. Also, is there a way for Cyberbot to choose the "most current" archived version for the archive-url? -- Evilninja ( talk) 02:56, 29 February 2016 (UTC)

- It chooses one that's closest to the given access date to ensure the archive is a valid one.— cyberpower Chat:Limited Access 03:53, 29 February 2016 (UTC)
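The selection rule stated in this reply (pick the capture closest to the citation's access date) can be sketched in Python as follows. The function names are hypothetical, and the 14-digit YYYYMMDDhhmmss timestamp format is assumed from the Wayback URLs quoted elsewhere on this page.

```python
from datetime import datetime
from typing import Iterable


def parse_wayback_timestamp(timestamp: str) -> datetime:
    """Parse a 14-digit Wayback timestamp such as 20050603234403."""
    return datetime.strptime(timestamp, "%Y%m%d%H%M%S")


def closest_snapshot(timestamps: Iterable[str], access_date: datetime) -> str:
    """Return the timestamp whose capture time is nearest the access date.
    Raises ValueError if no timestamps are supplied."""
    return min(
        timestamps,
        key=lambda ts: abs(parse_wayback_timestamp(ts) - access_date),
    )


if __name__ == "__main__":
    captures = ["20050603234403", "20100101000000", "20160101000000"]
    print(closest_snapshot(captures, datetime(2010, 5, 5)))
```

Choosing the nearest capture rather than the newest favours a copy that resembles what the citing editor saw, which is the reason given above for not using the most current version.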

Unarchivable links

Hello, I don't think Cyberbot II should be doing this sort of thing, especially with a misleading edit summary. Graham 87 15:17, 29 February 2016 (UTC)

- You're right, it shouldn't. It's not programmed to do that, and that's clearly a bug.— cyberpower Chat:Online 15:47, 29 February 2016 (UTC)

Cyberbot feedback

Hi, I'm not sure from all the banners above about not being Cyberbot, being busy in RL, etc. whether this is the place to leave some Cyberbot feedback, but the "talk to my owner" link sent me here, so I'll leave it here.

I occasionally review Cyberbot links and usually they are OK. However, I just reviewed the two on the article Greenwich Village and both of them basically just added archived copies of 404 pages. It might be good if there was some sort of compare or go back in time feature for the bot to select an archive, so this doesn't happen, as obviously an archived version of a page saying "404" or "Oops we can't find the page" or whatever is not helpful here. Cheers, TheBlinkster ( talk) 15:22, 27 February 2016 (UTC)

- Cyberbot already does that.— cyberpower Chat:Limited Access 17:05, 27 February 2016 (UTC)

- If it should be, it doesn't seem to be working. A couple from this morning...

- In Herefordshire: "Constitution: Part 2" (PDF). Herefordshire Council. Archived from the original (PDF) on 9 June 2011. Retrieved 5 May 2010.

- In Paul Keetch: Staff writer (10 July 2007). "MP still recovering in hospital". BBC News Online. Archived from the original on 11 November 2012. Retrieved 10 July 2007.

- Regards, -- Cavrdg ( talk) 10:26, 28 February 2016 (UTC)

Came here to say the same thing. An archive copy of a 404 is no better than a live 404. Actually it's worse as the end user doesn't get any visual clue the link will not be there. Palosirkka ( talk) 12:24, 28 February 2016 (UTC)

Is Cyberbot using Internet Archive's "Available" API? If so it would catch these. API results for the above examples return no page available:

- https://archive.org/wayback/available?url=http://news.bbc.co.uk/2/hi/uk_news/england/hereford/worcs/6287440.stmdate

- https://archive.org/wayback/available?url=http://www.herefordshire.gov.uk/docs/002_Part_2_Articles__Dec09.pdf

"This API is useful for providing a 404 or other error handler which checks Wayback to see if it has an archived copy ready to display. "

-- Green C 15:15, 28 February 2016 (UTC)
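A minimal sketch of the kind of check discussed here, assuming the public Availability API and its documented response shape (`archived_snapshots` → `closest` with `available`, `status` and `url` fields). The helper names `availability_query` and `usable_snapshot` are hypothetical, and this is not the newer API variant that Cyberbot is said to use later in the thread. Anything other than an available snapshot with status 200, including an empty or unparseable response, is treated as "no archive".

```python
import json
from urllib.parse import urlencode

AVAILABILITY_ENDPOINT = "https://archive.org/wayback/available"


def availability_query(url, timestamp=None):
    """Build an Availability API request URL (optionally near a timestamp)."""
    params = {"url": url}
    if timestamp:
        params["timestamp"] = timestamp  # YYYYMMDD or longer, per the API docs
    return AVAILABILITY_ENDPOINT + "?" + urlencode(params)


def usable_snapshot(response_text):
    """Return the snapshot URL only if the response clearly reports a 200 capture."""
    try:
        data = json.loads(response_text)
    except (TypeError, ValueError):
        return None  # timeout placeholder, blank body, or broken JSON
    if not isinstance(data, dict):
        return None
    snapshots = data.get("archived_snapshots")
    if not isinstance(snapshots, dict):
        return None
    closest = snapshots.get("closest")
    if not isinstance(closest, dict):
        return None  # e.g. {"archived_snapshots":{}}
    if closest.get("available") is not True or str(closest.get("status")) != "200":
        return None
    return closest.get("url")


print(usable_snapshot('{"archived_snapshots":{}}'))
```

The point of the design is that the default answer is "nothing found": a reference is only rewritten when every field needed to trust the snapshot is present.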

- Funny enough it is, and it gets its result from that. I have no idea why Cyberbot is getting a result now.— cyberpower Chat:Online 17:57, 28 February 2016 (UTC)

- Would Cyberbot see an API timeout or otherwise unexpected result (blank JSON) as a positive result? -- Green C 18:39, 28 February 2016 (UTC)

- No chance. If it gets no data, it couldn't know what URL to use. It wouldn't be able to update the ref and Cyberbot will ignore that ref.


- ... and 302s? This, at 19:56:36 UTC, at Central Devon (UK Parliament constituency): "Final recommendations for Parliamentary constituencies in the counties of Devon, Plymouth and Torbay". Boundary Commission for England. 24 November 2004. Archived from the original on 21 July 2011. Retrieved 25 April 2010. This redirects to a page on which the archive reports 'Page cannot be crawled or displayed due to robots.txt'. The API https://archive.org/wayback/available?url=http://www.boundarycommissionforengland.org.uk/review_areas/downloads/FR_NR_Devon_Plymouth_Torbay.doc gives {"archived_snapshots":{}}


- Regards -- Cavrdg ( talk) 20:28, 29 February 2016 (UTC)

- Cyberbot is using a newer version of the API, built for Cyberbot, compliments of the dev team of IA. It could be that there is a major bug in commanding which status codes to retrieve. Instead of retrieving the 200s it's retrieving all. I'll have to contact IA, and double check Cyberbot's commands to the API to make sure it's not a bug in Cyberbot.— cyberpower Chat:Online 20:59, 29 February 2016 (UTC)


This is the actual API command issued by Cyberbot in function isArchived() in API.php:

It returns two characters "[]" which is not valid JSON, but I verified it's correctly interpreted and sets $this->db->dbValues[$id]['archived'] = 0. In case it falls through to another function, similar commands in retrieveArchive():

- http://web.archive.org/cdx/search/cdx?url=http://www.boundarycommissionforengland.org.uk/review_areas/downloads/FR_NR_Devon_Plymouth_Torbay.doc&to=201010&output=json&limit=-2&matchType=exact&filter=statuscode:(200%7C203%7C206)

- http://web.archive.org/cdx/search/cdx?url=http://www.boundarycommissionforengland.org.uk/review_areas/downloads/FR_NR_Devon_Plymouth_Torbay.doc&from=201010&output=json&limit=2&matchType=exact&filter=statuscode:(200%7C203%7C206)

Same results. I can't find anywhere else in the code that downloads data from archive.org and if it's not getting the archive URL from here, then from where? Must be the API. -- Green C 21:08, 29 February 2016 (UTC)
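For readers following along, the two CDX queries quoted above can be rebuilt and their output interpreted roughly like this. It is a sketch only: the function names `cdx_query` and `usable_snapshots` are hypothetical, the parameters are the ones visible in the quoted URLs, and the parsing assumes the CDX server's `output=json` layout in which the first row holds the field names. A client-side status check is added as a second line of defence in case the server-side filter does not behave as expected. An empty `[]` response parses as an empty JSON array and yields no snapshots.

```python
import json
from urllib.parse import urlencode

CDX_ENDPOINT = "http://web.archive.org/cdx/search/cdx"
OK_STATUS = {"200", "203", "206"}  # same codes as the filter in the quoted URLs


def cdx_query(url, to=None, from_=None, limit=2):
    """Build a CDX request like the ones quoted above (negative limit = last N)."""
    params = {"url": url}
    if to:
        params["to"] = to
    if from_:
        params["from"] = from_
    params.update({
        "output": "json",
        "limit": limit,
        "matchType": "exact",
        "filter": "statuscode:(200|203|206)",
    })
    return CDX_ENDPOINT + "?" + urlencode(params)


def usable_snapshots(response_text):
    """Return Wayback URLs for captures whose recorded status code is acceptable."""
    rows = json.loads(response_text)
    if not rows:
        return []  # "[]" means no matching captures
    header, *records = rows
    urls = []
    for record in records:
        fields = dict(zip(header, record))
        if fields.get("statuscode") in OK_STATUS:  # re-check on the client side
            urls.append(
                f"http://web.archive.org/web/{fields['timestamp']}/{fields['original']}"
            )
    return urls


print(usable_snapshots("[]"))
```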

- That code you looked at is outdated. You may benefit from this, which is the current deployment of Cyberbot. It was using the CDX server for some time, but a fairly recent update encouraged by IA, to enhance performance and reduce server stress on their end, was put in that changed the API back to a newer version of the availability API. Have a look.— cyberpower Chat:Online 21:14, 29 February 2016 (UTC)

- That helps. Very different. I'll study that. -- Green C 21:29, 29 February 2016 (UTC)

Not helping here

I don't know what the expected result should be, but https://en.wikipedia.org/?title=Sidemount_diving&oldid=707596914 does not seem to have been a useful edit. • • • Peter (Southwood) (talk): 06:44, 1 March 2016 (UTC)

- I've contacted IA, as this no longer sounds like a Cyberbot bug. Cyberbot cannot possibly insert any archive URLs unless it gets them from the IA, or they are cached in its DB, and even that requires an initial response from IA.— cyberpower Chat:Online 20:23, 1 March 2016 (UTC)

IABot feature

Hi Cyberpower678. I have noticed that IABot does not preserve section marks (#) in URLs, e.g. in this edit it did not preserve the "#before", "#f1" and "#after" which are present in the original URLs in the archive URLs. Not sure if this is intentional or not. Regards. DH85868993 ( talk) 06:02, 2 March 2016 (UTC)

- Cyberbot uses the data that is returned by IA's API, so the section anchors are lost in the API. I'm hesitant to have the bot manually format them back in as that can introduce broken links, and I'm already working on fixing these problems with the current setup.— cyberpower Chat:Offline 06:06, 2 March 2016 (UTC)

- No worries. Thanks. DH85868993 ( talk) 06:10, 2 March 2016 (UTC)
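For illustration only, carrying a section anchor over from the original URL to an archive URL could look like the sketch below. The function name `carry_fragment` is hypothetical and this is not something the bot does; as noted in the reply above, re-adding anchors by hand risks producing links that point at an anchor the archived copy does not contain.

```python
from urllib.parse import urldefrag


def carry_fragment(original_url, archive_url):
    """Append the original URL's #fragment to the archive URL if it has none."""
    fragment = urldefrag(original_url).fragment
    if not fragment or urldefrag(archive_url).fragment:
        return archive_url  # nothing to carry over, or the archive URL already has one
    return archive_url + "#" + fragment


print(carry_fragment(
    "http://example.com/page#f1",
    "http://web.archive.org/web/20110721000000/http://example.com/page",
))
```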

chapter-url confusion?

I don't know that this was due to the use of "chapter-url", but this edit doesn't seem helpful: the entire citation was reduced to "{{cite}}". Nitpicking polish ( talk) 14:56, 2 March 2016 (UTC)

- Well...that's a new one.— cyberpower Chat:Online 15:34, 2 March 2016 (UTC)

Soft 404s are making your web archiving useless

Cyber, there are currently over 90,000 pages in Category:Articles with unchecked bot-modified external links and I'm finding that in more than half the cases, you are linking to a webarchive of a soft 404. Because of this, I don't think this bot task is helping, and it's probably best to turn it off until you can find some way to detect this. Oiyarbepsy ( talk) 05:38, 3 March 2016 (UTC)

I just did a random check of 10 pages and found Cyberbot correctly archived in 7 cases, and an error with the bot or IA API in 3 cases. The IA API may be at fault here. It's often returning a valid URL when it shouldn't be ( case1, case2, case3). I tend to agree that 90,000 pages will take many years, if ever, to clean up manually (these are probably articles no one is actively watching/maintaining). (BTW I disagree the bot is "useless", link rot is a semi-manual process to fix and the problem is fiendishly difficult and pressing, there is wide consensus for a solution). -- Green C 15:04, 3 March 2016 (UTC)
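A soft 404 is a page that returns status 200 but whose content says the page is missing, so status-code filtering alone cannot catch it. One rough heuristic, sketched below, is to look at the archived page's title for tell-tale phrases. Everything here is an assumption for illustration: the phrase list and the function name `looks_like_soft_404` are hypothetical, and nothing in this discussion says Cyberbot or IA works this way.

```python
import re

# Hypothetical phrase list; real error pages vary widely.
SOFT_404_PHRASES = (
    "404",
    "page not found",
    "can't find the page",
    "cannot be found",
    "no longer available",
)

TITLE_PATTERN = re.compile(r"<title[^>]*>(.*?)</title>", re.IGNORECASE | re.DOTALL)


def looks_like_soft_404(html):
    """Guess whether an archived page is an error page served with status 200."""
    match = TITLE_PATTERN.search(html)
    title = match.group(1).strip().lower() if match else ""
    return any(phrase in title for phrase in SOFT_404_PHRASES)


print(looks_like_soft_404("<html><head><title>Oops, we can't find the page</title></head></html>"))
```

A title-only check is deliberately conservative, but it still produces false positives (an article legitimately titled with "404", for instance), so a match is better treated as a reason for human review than as grounds for automatic removal.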

What happened here?

[1] ~ Kvng ( talk) 05:03, 3 March 2016 (UTC)

- Probably related to 2 sections above.— cyberpower Chat:Limited Access 17:32, 3 March 2016 (UTC)

I tagged 10 dead links on Whoopi Goldberg and Cyberbot II fixed 7 of them

Hi Cyberpower678. Using Checklinks I uncovered 11 dead links on the Whoopi Goldberg article. I was able to find archive versions for 1 of them and "fixed" it. I tagged 10 as being dead (not fixable by me). Cyberbot II fixed 7 that I tagged as dead and unfixable. That leaves 3 still broken (reference #30, #72, and #73). I checked them by hand. I also checked them again with Checklinks and Checklinks reported the same 3 as being dead. How did Cyberbot find those seven archived versions? Ping me back. Cheers! {{u|Checkingfax}} { Talk} 06:22, 4 March 2016 (UTC)

- It makes advanced calls to the API. I'm not sure what check links does, but I do know Cyberbot has become more advanced than check links in some areas, and can at some point replace check links when I have Cyberbot's control interface up and running.— cyberpower Chat:Limited Access 18:20, 4 March 2016 (UTC)

Cyberbot II will not stop "rescuing" a source

Hello, on the article MythBusters (2013 season), Cyberbot II keeps on trying to "rescue" a source with an archived URL. The archived URL actually is broken (it seems to be an empty page), and does not contain the material that is referenced. I have tried reverting this change, but Cyberbot II keeps on adding the same broken archived URL back. Could you please stop the bot from adding back the archived URL which I keep on trying to revert? Thanks. Secret Agent Julio ( talk) 13:34, 6 March 2016 (UTC) — Preceding unsigned comment added by Secret Agent Julio (alt) ( talk • contribs)

404 Checker

http://404checker.com/404-checker

I ran a test with this URL recently added to Talk:JeemTV by Cyberbot - the 404 checker correctly determines it's a 404. Running IA API results through this might cut down on the number of false positives. I have not done in depth testing. Likely there are other similar tools available. -- Green C 18:03, 4 March 2016 (UTC)

- We are working on Cyberbot's own checker, however, the API results should already be filtering this out. As you said, there's most likely an API error that needs to be fixed, or a hidden Cyberbot bug I have not yet found. I'm not going to fix problems by masking the symptoms, but by fixing the problem at the source. Doing that will keep the bot running smoothly, and the code maintainable and readable.— cyberpower Chat:Limited Access 18:15, 4 March 2016 (UTC)

- Glad to hear. I agree ideally it should be fixed at IA. They have a long todo list, new database Elasticsearch to wrangle, new website to roll out (March 15), recent outages etc. A safeguard secondary check would provide peace of mind IA is giving good data. Is there a plan to revisit Cyberbot's existing work, once the bugs are identified? Thanks. -- Green C 18:27, 4 March 2016 (UTC)

- Unfortunately no. There is no plan. Also secondary checks cost time and resources, something that doesn't need to be done if the 404 bug is fixed, but Cyberbot cannot tell what it edited already. In the long run when I have the interface setup, I could possibly compile and feed Cyberbot a list of articles to look at and tell it to touch all links even with an archive. That will forcibly change broken archives into either a dead tag or a new working archive, but that's a bit away from now. The priority is to get the current code base stable, reliable, and modular, so Cyberbot can run on multiple wikis.— cyberpower Chat:Online 21:22, 4 March 2016 (UTC)