| This is the

talk page for discussing improvements to the

Convex optimization article. This is not a forum for general discussion of the article's subject. |

Article policies

|

| Find sources: Google ( books · news · scholar · free images · WP refs) · FENS · JSTOR · TWL |

|

| This article is rated C-class on Wikipedia's

content assessment scale. It is of interest to the following WikiProjects: | ||||||||||

| |||||||||||

This article may be too technical for most readers to understand. |

The layout and presentation could be much better. I know it's a stub, but what little there is could at least be readable. 163.1.159.21 16:48, 14 May 2005 (UTC)

I see "convex optimization" applied to nonlinear functions with multiple minima. In that context are people really talking just about some convex portion of the domain around a local minimum? Similarly, is it still convex optimization if you are looking for the minimum of a Y-shaped valley where the minimum is at the bottom of the Y? What if it wasn't Y-shaped but more V-shaped so that you could draw an ellipse over either valley in which the function is convex? —Ben FrantzDale 19:50, 31 January 2007 (UTC)

- For convex optimization the function to be optimized has to be convex. But then any local minimum is global minimum, so local optimization is the same as global optimization. At least that's how I know it. Oleg Alexandrov ( talk) 02:38, 1 February 2007 (UTC)

- Perhaps some edge-case examples would be helpful. For example, is Rosenbrock's banana function a convex function? I tend to think not (since the valley curves). —Ben FrantzDale 20:56, 5 February 2007 (UTC)

- Well, if a function is not convex, then you can't do convex optimization. But you can still do optimization however, it is just you are not guaranteed a global minimum. I fail to see what your point is, with the above. Sorry. :) Oleg Alexandrov ( talk) 04:42, 6 February 2007 (UTC)

- My point is I think I understand what it means for a function to be convex, but I'm not 100% sure. For that reason I'd like to see some edge-cases explained. Is a curving valley such as the banana function convex? (I think it is not.) My sense is that a convex function is one that has an n-dimensional valley that doesn't curve too much so that if you keep heading down hill you'll be moving closer to the minimum. (Whereas in a curved valley you might be heading downhill but away from the actual minimum.) Is that right? —Ben FrantzDale 13:37, 6 February 2007 (UTC)

There is no graph on the "banana function" page, so I can't tell. Here is a convex function, Image:Convex-function-graph-1.png, and here is a function which is not convex, Image:Quasiconvex function.png. A greater help would be to read the convex function article rather than this one. Oleg Alexandrov ( talk) 02:15, 7 February 2007 (UTC)

- I've read up on convex function. The thing I want to understand is what makes convex optimization special. It feels like it's because, with a convex function, moving by ε downhill always moves you closer to the minimum. (Intuitively it seems like that property would make an optimization problem much more tractable.) Alternately, I'd like to understand what goes wrong in optimizing a function that isn't quite convex. —Ben FrantzDale 04:28, 7 February 2007 (UTC)

- Not much goes wrong if the function to optimize is not convex. You may just have more than one local minimum. Try to see how your "ε downhill" intuition would work for

Image:Nonquasiconvex function.png which is not convex.

Oleg Alexandrov (

talk)

04:47, 7 February 2007 (UTC)

- Actually that picture is not a very good example since the function in question is not convex in general, but it is convex around the local minima. See again

Image:Quasiconvex function.png which is not convex at all but for which your "ε downhill" argument seems to still work.

Oleg Alexandrov (

talk)

04:55, 7 February 2007 (UTC)

- Both of those pictures obviously satisfy my "ε downhill" argument around their minima. I folloed the external links to a full-length PDF textbook. On page 16 of that (p. 30 of the PDF) it says

- In this context Rockafellar stated, in his 1993 SIAM Review survey paper [Roc93],

- In fact the great watershed in optimization isn’t between linearity and nonlinearity, but convexity and nonconvexity.

- In this context Rockafellar stated, in his 1993 SIAM Review survey paper [Roc93],

- Presumably there is something special about convex functions that I am missing. (Or else, the analisys doesn't work for non-convex functions but actual algorithms might work reasonably well for "almost convex" functions.) Thanks. —Ben FrantzDale 05:39, 7 February 2007 (UTC)

- Both of those pictures obviously satisfy my "ε downhill" argument around their minima. I folloed the external links to a full-length PDF textbook. On page 16 of that (p. 30 of the PDF) it says

- Actually that picture is not a very good example since the function in question is not convex in general, but it is convex around the local minima. See again

Image:Quasiconvex function.png which is not convex at all but for which your "ε downhill" argument seems to still work.

Oleg Alexandrov (

talk)

04:55, 7 February 2007 (UTC)

- Not much goes wrong if the function to optimize is not convex. You may just have more than one local minimum. Try to see how your "ε downhill" intuition would work for

Image:Nonquasiconvex function.png which is not convex.

Oleg Alexandrov (

talk)

04:47, 7 February 2007 (UTC)

- OK, I don't know convex optimization so well. What I know is that convexity is profoundly important in the sense that if the function is convex, you are guaranteed to have a global minimum, and if it is strictly convex, that minimum is unique. This is immensely important, since then you will arrive at the minimum starting from anywhere. If your function is not convex, you always need to worry, especially in problems involving millions of variables, that the solution you found is only a lousy local minimum, and there are many other solutions in the search space which are much more optimal but which can't be easily found. There are many algorithms which can help in that case, which search for a solution randomly, etc, but the problem of finding the global minimum of a non-convex function is (I think!)

NP-hard, meaning that it is impossible to do in practice.

Oleg Alexandrov (

talk)

16:30, 7 February 2007 (UTC)

- Thanks. Perhaps someone with more background on the subject can comment. What you said sounds right, although as I understand convexity, there are plenty of non-convex functions for which there is exactly one minimum and that is the only point with a zero derivative, which seems naively like it would be just as easy to minimize. Like I said, I'm trying to grock what makes convexity special, hence the "what goes wrong if..." questions. Thanks agian.

—Ben FrantzDale

16:50, 7 February 2007 (UTC)

- It is just very hard to prove in general that a given non-convex function has a unique minimum. And most non-convex functions have many local minima. So practically speaking, non-convex function == no unique minimum, then what I said above applies. Oleg Alexandrov ( talk) 04:17, 8 February 2007 (UTC)

- Thanks. Perhaps someone with more background on the subject can comment. What you said sounds right, although as I understand convexity, there are plenty of non-convex functions for which there is exactly one minimum and that is the only point with a zero derivative, which seems naively like it would be just as easy to minimize. Like I said, I'm trying to grock what makes convexity special, hence the "what goes wrong if..." questions. Thanks agian.

—Ben FrantzDale

16:50, 7 February 2007 (UTC)

- OK, I don't know convex optimization so well. What I know is that convexity is profoundly important in the sense that if the function is convex, you are guaranteed to have a global minimum, and if it is strictly convex, that minimum is unique. This is immensely important, since then you will arrive at the minimum starting from anywhere. If your function is not convex, you always need to worry, especially in problems involving millions of variables, that the solution you found is only a lousy local minimum, and there are many other solutions in the search space which are much more optimal but which can't be easily found. There are many algorithms which can help in that case, which search for a solution randomly, etc, but the problem of finding the global minimum of a non-convex function is (I think!)

NP-hard, meaning that it is impossible to do in practice.

Oleg Alexandrov (

talk)

16:30, 7 February 2007 (UTC)

- After thinking about it more, it looks like gradient descent solvers can work on nonconvex functions, but e.g., the simplex method does not because the real minimum could "leak" out of the simplex as the simplex shrinks. —Ben FrantzDale 20:43, 10 April 2007 (UTC)

- Gradient descent works for non-convex problems, but one end up only with local minima only. Now, the simplex method works only for linear problems as far as I know, so even more particular than convex problems. Oleg Alexandrov ( talk) 03:03, 11 April 2007 (UTC)

- Hmm. There are non-convex nonlinear functions with a single global minimum (e.g., a bowl with an extra dent at the bottom). Basically I'm trying to figure out why convexity is important. If it is important rather than just "functions with a single global minimum, then there has to be a case for which nonconvex functions that may still have a single global minimum cause an algorithm to fail. It could be that I don't understand the simplex method exactly. —Ben FrantzDale 04:42, 11 April 2007 (UTC)

The theory of convex optimization pertains to convex minimization, not maximization (apart from a maximum boundary principle). Maximizing (even quadratic QP) convex functions (on convex subsets) is NP hard. Shouldn't the title of the article be clarified to "Convex Minimization"? Kiefer.Wolfowitz ( talk) 14:32, 7 June 2009 (UTC) I updated the discussion to specific "convex minimization" where appropriate. Kiefer.Wolfowitz ( talk) 15:49, 7 June 2009 (UTC)

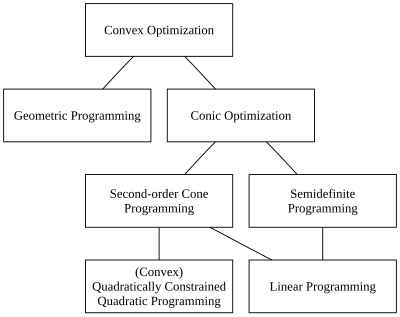

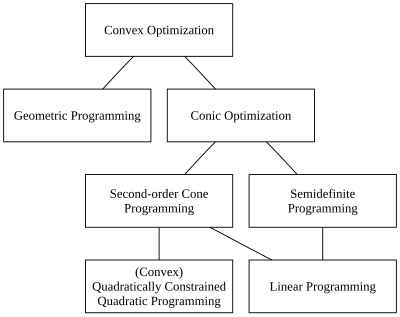

Would somebody improve the illustration of the "problem hierarchy", please?

Convex quadratic programming (QP) (with linear constraints) is more general than linear programming.

I would not object to somebody changing the QP with Q constraints to simply QP (with linear constraints). Thanks. Kiefer.Wolfowitz ( talk) 19:47, 7 January 2010 (UTC)

- I removed the still wrong diagram. Kiefer.Wolfowitz ( talk) 16:39, 21 July 2010 (UTC)

The first sentence is gramatically wrong: "is focuses" -> "focuses" —Preceding unsigned comment added by 139.179.161.17 ( talk) 12:36, 28 February 2010 (UTC)

Does anyone know where I can find some information about "abstract convex programming"? By this I mean minimising a convex function over a convex feasible set. That is, there are no explicit constraints, all you know is that the feasible set is convex. —Preceding unsigned comment added by 203.167.251.186 ( talk) 04:48, 19 July 2010 (UTC)

- The best theoretical and computational work is very challenging. Start with Dimitri Bertsekas's books or his on-line lecture notes. Some current research is described in Claude Lemaréchal's lectures on "Lagrangian Relaxation" at a summer school, which was published in Springer's lecture notes in computer science (Combinatorial Optimization).

- (Such questions are better reserved for your professor or for on-line lists: Look at Optimization Online.)

- Avoid the term "Abstract convex programming", which is established for a very mathematical approach to more general problems (Rolewicz & Pallaschke, Ivan Singer, the late Rubinov, etc.). Thanks! Best regards, Kiefer.Wolfowitz ( talk) 16:37, 21 July 2010 (UTC)

- Thanks. Much appreciated. —Preceding unsigned comment added by 203.167.251.186 ( talk) 21:35, 26 July 2010 (UTC)

In the introductory section it is mentioned that

With recent improvements in computing and in optimization theory, convex minimization is nearly as straightforward as linear programming.

While that is certainly true in theory (existence of a rich duality theory and polynomial-time algorithms), I am not so sure to which extent this is true in practice. For linear programs, simplex and interior-point methods can solve problems with input size (nonzero entries on constraint matrix) on the order of millions on a desktop computer. But even for a well studied and highly structured class of convex optimization problems, namely, semidefinite optimization, current solvers do not fare very well even for problems with input size on the order of a few thousand nonzeroes. See the benchmarks at http://plato.asu.edu/bench.html.

It is obvious that this is not a fair comparison, given that the codes for LPs are obviously much more mature. However, semidefinite optimization solvers (as well as general convex optimization software) faces an apparently intrinsic problem of numerical stability.

If anybody else can confirm that I am not completely outdated about this, could you please add these remarks to the page, or let me know so that I can write the remarks myself? Thanks.

-- Marcelkcs ( talk) 15:20, 4 June 2011 (UTC)

This article would benefit from a section on applications of convex optimization, which might include concrete examples. Akshayka ( talk) 20:11, 14 January 2019 (UTC)

I would like to raise concerns about the recent edits to the article. Specifically, I find the claim that "the field of convex optimization also considers the far more difficult problem of maximizing convex functions" questionable. According to Boyd/Vandenberghe, which is considered a standard reference, a convex optimization problem has three additional requirements as compared to a general optimization problem, namely 1) the objective function must be convex (in the case of minimization), 2) the inequality constraint functions must be convex, and 3) the equality constraint functions must be affine (Section 4.2.1, pp. 136-). In a concave maximization problem, requirements 2) and 3) are fulfilled but the negative of the objective function (which is concave) is maximized. Since they are equivalent, both these problems are referred to as convex optimization problems (p. 137). A problem that does not fulfil these requirements, for example the problem of minimizing a convex function subject to an equality constraint that is not affine, is referred to as a nonlinear optimization problem and is treated with global optimization methods. The term "convex minimization" seems to focus only on requirement 1) above, therefore a reference would be needed to show that this is standard terminology. Isheden ( talk) 19:36, 26 October 2011 (UTC)

- Convex "optimization" is vague. Convex minimization is preferred for minimization problems. Advanced research, following Rockafellar, uses indicator functions or penalty representations of constraints. There is no need to set up a convex subset in the definition (although it has some pedagogical value, and is used by Polyak and Bertsekas's books).

- The Boyd/Vandenberghe book is good, especially for pedagogy and for its engineering applications. It also considers quasi-convex problems, etc. (Don't they mention quasi-convex constraint functions, also?)

- There is a theory of convex maximization, which should be covered in an article on convex optimization. I gave a reference to a theoretical result in Rockafellar, and I mentioned classic articles by Sahni and by Vavasis, et alia.

- Problems with non-convex constraints are not always solved by global optimization methods. Often they are approximately locally minimized with local methods or by global methods. Kiefer. Wolfowitz 19:53, 26 October 2011 (UTC)

I beg to differ. In Rockafellar (1997), Section 28 (p. 273), the term "ordinary convex program" is defined in a very similar way to Boyd's definition of a "convex optimization problem". Rockafellar even suggests a more rigorous definition on the same page as a tuple of functions satisfying the conditions given. Indicator functions and penalty representation are discussed in Boyd as well, see 11.2 for the use of the indicator function to make inequality constraints implicit in the objective, and 5.4.4 for the price interpretation of violating an inequality constraint. Perhaps what you mean is that the Lagrangian (see 5.1.1), which includes the constraints, is a convex function, and that "convex minimization" is about finding the minimum of this function (called the Lagrange dual function, see 5.1.2)? I think that what you call the theory of convex maximization would fit better in the convex analysis article, since this is a basic property of a convex function. The section "Generalized convexity" as it is formulated now would fit better in the article convex function. And you're right that local methods are often used when finding the global optimum is difficult, but the point of convex optimization is that it is in a certain sense the broadest class of nonlinear optimization problems for which any local optimum is the global optimum. Isheden ( talk) 21:51, 26 October 2011 (UTC)

- The article should focus on convex minimization. I suggested moving the article to convex minimization long ago.

- Convex minimization is important for non-convex problems (and their convexifications). See Chapter 14 of Lemaréchal & Hiriart-Urruty or Bertsekas, for example.

- An article entitled "convex optimization" should include "convex maximization" and should mention the NP-hardness of mildly non-convex quadratic problems. It should also include the discussion of unimodal and non-convex problems from c. 1973, which is often mis-attributed to Judin & Nemirovksy: I think the author was Ivanov, who explained why non-convex unimodal problems are worst-case intractible (information complexity). Kiefer. Wolfowitz 22:13, 26 October 2011 (UTC)

I think different interpretations of the word "convex" might cause confusion here. In the term convex optimization, "convex" refers to the optimization problem, not to the objective function. A convex optimization problem or convex program may be either a minimization or a maximization problem. As cited from the article nonlinear optimization: "If the objective function is concave (maximization problem), or convex (minimization problem) and the constraint set is convex, then the program is called convex and general methods from convex optimization can be used in most cases." For this reason, I am against moving the article to convex minimization. Moreover, "convex optimization" and "convex optimization problem" are much more commonly used than "convex minimization" and "convex minimization problem", respectively, as determined by searches on Google Books. I think the article name should be based on the more common use in the literature. I noted also that the term "convex programming" is even more commonly used. On the other hand, I agree that the article should discuss the importance of convex optimization for non-convex optimization problems. I also think it is a good idea to mention that even mildly non-convex problems are often NP-hard. Isheden ( talk) 10:46, 27 October 2011 (UTC)

- Although Isheden asked me to weigh in on this, it's clear to me that he's more on top of the subject than I am. When I have any questions about convex optimization I walk across the road to bug Stephen Boyd. As long as Stephen himself doesn't weigh in on this discussion I'm happy to serve as a go-between here. (I'm just the customer in this case, I use locally convex optimization myself in my work on modern climate change.) -- Vaughan Pratt ( talk) 05:52, 30 October 2011 (UTC)

- Hi Professor Pratt

- It's an honor that you write here. We are very happy that you have joined Wikipedia. :)

- Isheden has rightly clarified an established use of the "CO problem"; it would be worthwhile looking in Nemirovskii et alia, in Nesterov's book (also), in Lemaréchal et alia, and in Bertsekas also, to see how widespread be "CO problem". Also, a look in Papadimitriou & Steiglitz, in Smale/Blum/Shub et alia, and in Vavasis would be prudent, so you don't endorse something that will cause you trouble in the coffee lounge. ;)

- However, the article title is convex optimization, not CO problem. I think it should have a summary style, with sections providing an overview and links to the specialty articles. These sections would cover

- convex minimization (including the "CO problem"),

- duality formulations and methods (for convex and convex problems, following e.g. Lemaréchal or Bertsekas---and not your distinguished colleagues Gill, Murry and Wright!),

- extensions of convex analysis and optimization (especially quasi-convex a la Singer and Rubinov, or the theoretically interesting results on subgradient methods of Nesterov/Robinson/Murty/Kiwiel etc.)

- convex maximization,

- Sorry for sketching a plan for a lot of work. (It's late here.) Best regards, Kiefer. Wolfowitz 06:24, 30 October 2011 (UTC)

- However, the article title is convex optimization, not CO problem. I think it should have a summary style, with sections providing an overview and links to the specialty articles. These sections would cover

- There are many points to discuss and a lot of work ahead of us. However, let me start with the question about the article name. I have tried to search through the works by the authors you mentioned to find out what the most common term is. I limited the search to textbooks that have some kind of reference to convex optimization in the title, and are searchable using Google books, namely the books by Ben-Tal/Nemirovskiĭ, Nesterov/Nemirovskii, Nesterov, Hiriart-Urruty/Lemaréchal, Boyd/Vandenberghe and Rockafellar. I counted the number of occurrences of the phrases "convex programming", "convex optimization", "convex minimization", "concave maximization", "convex maximization", and "concave minimization", excluding hits with the next word being "problem" or "problems". The total number of hits turned out as 64, 156, 6, 0, 0, 0 (in the same order). In each book, "convex programming" and/or "convex optimization" clearly outnumbered "convex minimization". Therefore it seems to me that "convex optimization" or "convex programming" are more accepted terms than "convex minimization". Isheden ( talk) 23:58, 30 October 2011 (UTC)

What's the question here? Convex optimization is certainly a subject, as borne out by Isheden's numbers. How long has "convex minimization" been in Wikipedia? Could that account by itself for the 6 hits it got, vs the 156 hits for "convex optimization?"

Usually optimization minimizes something, such as your cost. It can always be turned into maximizing something, such as what you have left after subtracting your cost.

I'm having difficulty understanding these arguments for an article on something other than convex optimization. -- Vaughan Pratt ( talk) 07:45, 2 November 2011 (UTC)

- The search was carried out exclusively in some of the references suggested by User:Kiefer.Wolfowitz. The term "convex minimization" is sometimes used in the literature, although infrequently. As a next step, I intend to check the references for their definition of convex optimization. My understanding is that convex optimization is about solving convex optimization problems (as opposed to non-convex problems), which can be put in terms of either minimization or maximization (to be verified). Isheden ( talk) 13:42, 2 November 2011 (UTC)

- Look at the title of the book of HU & Claude Lemarechal, which I suggest is at least as authoritative as the California EE profs.

- Look at Variational analysis by Rockafellar and Wets, or the 2-volume monographs by Morkukhovich. The whole terminology of variational analysis, particularly epigraphical/cosmic convergence, relies on minimization being the standard.

-

Kiefer.

Wolfowitz

09:12, 3 November 2011 (UTC)

- No one is questioning that the standard form of a convex optimization problem is the one described in the article, based on minimization. What I do question is your claim that convex optimization includes maximization of convex functions.

- Also, the subject of this article is applied mathematics according to the classification. The theory from the other fields you mention, some of which I must admit I've never heard of, should be kept to a minimum in this article. The article must be accessible for people like me or User:Vaughan Pratt, who want to use the convex optimization framework in other fields. Detailed discussions of theoretical concepts might fit better in the convex analysis article or in an article on variational analysis. Isheden ( talk) 09:53, 3 November 2011 (UTC)

- Isheden,

- You are making demands that have no basis in Wikipedia policy, and in particular your argument that this article is classified as applied mathematics violates the policy that WP is not a reliable source (if I understood your suggestion).

- I don't know what Wikipedia policy says, but it seems natural to me that convex analysis is more theoretical in nature and convex optimization more applied.

- Convex optimization embraces more than the contents of the book by the California EE profs. You should examine Ekeland & Teman and also Ioffe & Tikhomirov, for starters, or Jon Borwein's & Mordukhovich's books for more recent treatments of convex minimization.

-

Kiefer.

Wolfowitz

11:25, 3 February 2012 (UTC)

- I don't know what books you are referring to here. What are the titles? Most references I've found do not specify what the scope of convex optimization is other than the solving convex optimization problems, which in turn are characterized as problems that are tractable to solve computationally. This does not apply to maximization of convex functions in general, unless all points on the boundary can be checked. Or have I missed something here? Isheden ( talk) 23:38, 10 February 2012 (UTC)

- Isheden, this has been going on for months, and I'm growing tired. Let me state bluntly that you don't know what you are talking about. You are stating a "characterization" of tractable problems that is just simply false. You should look at the papers of Nesterov and Kiwiel. I've told you this before.

Kiefer.

Wolfowitz

04:44, 11 February 2012 (UTC)

- Since this discussion has ceased to be constructive, I think it's better to make a cut here and concentrate on expanding the article. Isheden ( talk) 09:40, 11 February 2012 (UTC)

- Isheden, this has been going on for months, and I'm growing tired. Let me state bluntly that you don't know what you are talking about. You are stating a "characterization" of tractable problems that is just simply false. You should look at the papers of Nesterov and Kiwiel. I've told you this before.

Kiefer.

Wolfowitz

04:44, 11 February 2012 (UTC)

- Faigle, Kern, and another author treat convex maximization in the section on convex optimization. (I believe both Faigle and Kern have been elected to the governing board of MPS/MOS.)

- Boyd and Vandenberghe's Convex Optimization treats convex maximization in problem (8.16).

I trust the "factual accuracy" bs is over. Kiefer. Wolfowitz 12:04, 3 February 2012 (UTC)

- No, unfortunately that does not settle the discussion (although it is more about terminology than factual accuracy). I don't understand what Boyd/Vandenberghe problem 8.16 has to do with this, and while the result on convex maximization can be mentioned further down in the article, I'm not convinced that referring to it as convex optimization represents the majority view. In case you are interested in Wikipedia policies, please have a look at

WP:Wikipedia is not about winning and

WP:UNDUE. I believe additional opinions on this from other people would be helpful.

Isheden (

talk)

21:15, 3 February 2012 (UTC)

- Let me know when you find a defined population from which a survey sample was taken, so I might try to understand what you're possibly thinking about with "majority view", an irrelevant concept on WP. I provided a reliable high quality source, which settles the matter of the sentence to which you have been objecting, according to WP policy. Kiefer. Wolfowitz 04:40, 11 February 2012 (UTC)

I'm confused. The theory section states:

- if a local minimum exists, then it is a global minimum.

- the set of all (global) minima is convex.

- for each strictly convex function, if the function has a minimum, then the minimum is unique.

Technically you can have a set of one, but the middle line above loosely implies there can be multiple global minima, which is contradicted by the final statement. Can whatever is meant be better expressed please? Aarghdvaark ( talk) 06:58, 30 August 2012 (UTC)

- The third bullet point applies only if it's a strictly convex function. — Arthur Rubin (talk) 07:58, 30 August 2012 (UTC)

The page is confusing because it seems to be using "minimum" to sometimes refer to the "minimizer". The minimum is a scalar value in the range of the objective function. The minimizers are the points in the domain at which the minima are reached. The global minimum for any optimization problem, or function, or set, is (assuming it exists) unique by definition. Also discussion the set of all local minima, while perhaps ok (though is it much use to talk of its convexity?) is not as interesting as the set of minimizers where those local minima are reached. — Preceding unsigned comment added by 129.81.103.111 ( talk) 17:50, 17 July 2015 (UTC)

The applications section is quite poorly written. Almost all of its citations point to a single set of slides of Boyd et al., but those examples prove only that some problems can be modeled as convex optimization problems, not that convex optimization is actually used in practice to solve any of these problems. I've updated the phrasing appropriately in that section, but I would love it if someone could provide more concrete examples of convex optimization used in real world problems (not just in slides or academic papers). — Preceding unsigned comment added by J2kun ( talk • contribs) 19:35, 21 January 2024 (UTC)

| This is the

talk page for discussing improvements to the

Convex optimization article. This is not a forum for general discussion of the article's subject. |

Article policies

|

| Find sources: Google ( books · news · scholar · free images · WP refs) · FENS · JSTOR · TWL |

|

| This article is rated C-class on Wikipedia's

content assessment scale. It is of interest to the following WikiProjects: | ||||||||||

| |||||||||||

This article may be too technical for most readers to understand. |

The layout and presentation could be much better. I know it's a stub, but what little there is could at least be readable. 163.1.159.21 16:48, 14 May 2005 (UTC)

I see "convex optimization" applied to nonlinear functions with multiple minima. In that context are people really talking just about some convex portion of the domain around a local minimum? Similarly, is it still convex optimization if you are looking for the minimum of a Y-shaped valley where the minimum is at the bottom of the Y? What if it wasn't Y-shaped but more V-shaped so that you could draw an ellipse over either valley in which the function is convex? —Ben FrantzDale 19:50, 31 January 2007 (UTC)

- For convex optimization the function to be optimized has to be convex. But then any local minimum is global minimum, so local optimization is the same as global optimization. At least that's how I know it. Oleg Alexandrov ( talk) 02:38, 1 February 2007 (UTC)

- Perhaps some edge-case examples would be helpful. For example, is Rosenbrock's banana function a convex function? I tend to think not (since the valley curves). —Ben FrantzDale 20:56, 5 February 2007 (UTC)

- Well, if a function is not convex, then you can't do convex optimization. But you can still do optimization however, it is just you are not guaranteed a global minimum. I fail to see what your point is, with the above. Sorry. :) Oleg Alexandrov ( talk) 04:42, 6 February 2007 (UTC)

- My point is I think I understand what it means for a function to be convex, but I'm not 100% sure. For that reason I'd like to see some edge-cases explained. Is a curving valley such as the banana function convex? (I think it is not.) My sense is that a convex function is one that has an n-dimensional valley that doesn't curve too much so that if you keep heading down hill you'll be moving closer to the minimum. (Whereas in a curved valley you might be heading downhill but away from the actual minimum.) Is that right? —Ben FrantzDale 13:37, 6 February 2007 (UTC)

There is no graph on the "banana function" page, so I can't tell. Here is a convex function, Image:Convex-function-graph-1.png, and here is a function which is not convex, Image:Quasiconvex function.png. A greater help would be to read the convex function article rather than this one. Oleg Alexandrov ( talk) 02:15, 7 February 2007 (UTC)

- I've read up on convex function. The thing I want to understand is what makes convex optimization special. It feels like it's because, with a convex function, moving by ε downhill always moves you closer to the minimum. (Intuitively it seems like that property would make an optimization problem much more tractable.) Alternately, I'd like to understand what goes wrong in optimizing a function that isn't quite convex. —Ben FrantzDale 04:28, 7 February 2007 (UTC)

- Not much goes wrong if the function to optimize is not convex. You may just have more than one local minimum. Try to see how your "ε downhill" intuition would work for

Image:Nonquasiconvex function.png which is not convex.

Oleg Alexandrov (

talk)

04:47, 7 February 2007 (UTC)

- Actually that picture is not a very good example since the function in question is not convex in general, but it is convex around the local minima. See again

Image:Quasiconvex function.png which is not convex at all but for which your "ε downhill" argument seems to still work.

Oleg Alexandrov (

talk)

04:55, 7 February 2007 (UTC)

- Both of those pictures obviously satisfy my "ε downhill" argument around their minima. I folloed the external links to a full-length PDF textbook. On page 16 of that (p. 30 of the PDF) it says

- In this context Rockafellar stated, in his 1993 SIAM Review survey paper [Roc93],

- In fact the great watershed in optimization isn’t between linearity and nonlinearity, but convexity and nonconvexity.

- In this context Rockafellar stated, in his 1993 SIAM Review survey paper [Roc93],

- Presumably there is something special about convex functions that I am missing. (Or else, the analisys doesn't work for non-convex functions but actual algorithms might work reasonably well for "almost convex" functions.) Thanks. —Ben FrantzDale 05:39, 7 February 2007 (UTC)

- Both of those pictures obviously satisfy my "ε downhill" argument around their minima. I folloed the external links to a full-length PDF textbook. On page 16 of that (p. 30 of the PDF) it says

- Actually that picture is not a very good example since the function in question is not convex in general, but it is convex around the local minima. See again

Image:Quasiconvex function.png which is not convex at all but for which your "ε downhill" argument seems to still work.

Oleg Alexandrov (

talk)

04:55, 7 February 2007 (UTC)

- Not much goes wrong if the function to optimize is not convex. You may just have more than one local minimum. Try to see how your "ε downhill" intuition would work for

Image:Nonquasiconvex function.png which is not convex.

Oleg Alexandrov (

talk)

04:47, 7 February 2007 (UTC)

- OK, I don't know convex optimization so well. What I know is that convexity is profoundly important in the sense that if the function is convex, you are guaranteed to have a global minimum, and if it is strictly convex, that minimum is unique. This is immensely important, since then you will arrive at the minimum starting from anywhere. If your function is not convex, you always need to worry, especially in problems involving millions of variables, that the solution you found is only a lousy local minimum, and there are many other solutions in the search space which are much more optimal but which can't be easily found. There are many algorithms which can help in that case, which search for a solution randomly, etc, but the problem of finding the global minimum of a non-convex function is (I think!)

NP-hard, meaning that it is impossible to do in practice.

Oleg Alexandrov (

talk)

16:30, 7 February 2007 (UTC)

- Thanks. Perhaps someone with more background on the subject can comment. What you said sounds right, although as I understand convexity, there are plenty of non-convex functions for which there is exactly one minimum and that is the only point with a zero derivative, which seems naively like it would be just as easy to minimize. Like I said, I'm trying to grock what makes convexity special, hence the "what goes wrong if..." questions. Thanks agian.

—Ben FrantzDale

16:50, 7 February 2007 (UTC)

- It is just very hard to prove in general that a given non-convex function has a unique minimum. And most non-convex functions have many local minima. So practically speaking, non-convex function == no unique minimum, then what I said above applies. Oleg Alexandrov ( talk) 04:17, 8 February 2007 (UTC)

- Thanks. Perhaps someone with more background on the subject can comment. What you said sounds right, although as I understand convexity, there are plenty of non-convex functions for which there is exactly one minimum and that is the only point with a zero derivative, which seems naively like it would be just as easy to minimize. Like I said, I'm trying to grock what makes convexity special, hence the "what goes wrong if..." questions. Thanks agian.

—Ben FrantzDale

16:50, 7 February 2007 (UTC)

- OK, I don't know convex optimization so well. What I know is that convexity is profoundly important in the sense that if the function is convex, you are guaranteed to have a global minimum, and if it is strictly convex, that minimum is unique. This is immensely important, since then you will arrive at the minimum starting from anywhere. If your function is not convex, you always need to worry, especially in problems involving millions of variables, that the solution you found is only a lousy local minimum, and there are many other solutions in the search space which are much more optimal but which can't be easily found. There are many algorithms which can help in that case, which search for a solution randomly, etc, but the problem of finding the global minimum of a non-convex function is (I think!)

NP-hard, meaning that it is impossible to do in practice.

Oleg Alexandrov (

talk)

16:30, 7 February 2007 (UTC)

- After thinking about it more, it looks like gradient descent solvers can work on nonconvex functions, but e.g., the simplex method does not because the real minimum could "leak" out of the simplex as the simplex shrinks. —Ben FrantzDale 20:43, 10 April 2007 (UTC)

- Gradient descent works for non-convex problems, but one end up only with local minima only. Now, the simplex method works only for linear problems as far as I know, so even more particular than convex problems. Oleg Alexandrov ( talk) 03:03, 11 April 2007 (UTC)

- Hmm. There are non-convex nonlinear functions with a single global minimum (e.g., a bowl with an extra dent at the bottom). Basically I'm trying to figure out why convexity is important. If it is important rather than just "functions with a single global minimum, then there has to be a case for which nonconvex functions that may still have a single global minimum cause an algorithm to fail. It could be that I don't understand the simplex method exactly. —Ben FrantzDale 04:42, 11 April 2007 (UTC)

The theory of convex optimization pertains to convex minimization, not maximization (apart from a maximum boundary principle). Maximizing (even quadratic QP) convex functions (on convex subsets) is NP hard. Shouldn't the title of the article be clarified to "Convex Minimization"? Kiefer.Wolfowitz ( talk) 14:32, 7 June 2009 (UTC) I updated the discussion to specific "convex minimization" where appropriate. Kiefer.Wolfowitz ( talk) 15:49, 7 June 2009 (UTC)

Would somebody improve the illustration of the "problem hierarchy", please?

Convex quadratic programming (QP) (with linear constraints) is more general than linear programming.

I would not object to somebody changing the QP with Q constraints to simply QP (with linear constraints). Thanks. Kiefer.Wolfowitz ( talk) 19:47, 7 January 2010 (UTC)

- I removed the still wrong diagram. Kiefer.Wolfowitz ( talk) 16:39, 21 July 2010 (UTC)

The first sentence is gramatically wrong: "is focuses" -> "focuses" —Preceding unsigned comment added by 139.179.161.17 ( talk) 12:36, 28 February 2010 (UTC)

Does anyone know where I can find some information about "abstract convex programming"? By this I mean minimising a convex function over a convex feasible set. That is, there are no explicit constraints, all you know is that the feasible set is convex. —Preceding unsigned comment added by 203.167.251.186 ( talk) 04:48, 19 July 2010 (UTC)

- The best theoretical and computational work is very challenging. Start with Dimitri Bertsekas's books or his on-line lecture notes. Some current research is described in Claude Lemaréchal's lectures on "Lagrangian Relaxation" at a summer school, which was published in Springer's lecture notes in computer science (Combinatorial Optimization).

- (Such questions are better reserved for your professor or for on-line lists: Look at Optimization Online.)

- Avoid the term "Abstract convex programming", which is established for a very mathematical approach to more general problems (Rolewicz & Pallaschke, Ivan Singer, the late Rubinov, etc.). Thanks! Best regards, Kiefer.Wolfowitz ( talk) 16:37, 21 July 2010 (UTC)

- Thanks. Much appreciated. —Preceding unsigned comment added by 203.167.251.186 ( talk) 21:35, 26 July 2010 (UTC)

In the introductory section it is mentioned that

With recent improvements in computing and in optimization theory, convex minimization is nearly as straightforward as linear programming.

While that is certainly true in theory (existence of a rich duality theory and polynomial-time algorithms), I am not so sure to which extent this is true in practice. For linear programs, simplex and interior-point methods can solve problems with input size (nonzero entries on constraint matrix) on the order of millions on a desktop computer. But even for a well studied and highly structured class of convex optimization problems, namely, semidefinite optimization, current solvers do not fare very well even for problems with input size on the order of a few thousand nonzeroes. See the benchmarks at http://plato.asu.edu/bench.html.

It is obvious that this is not a fair comparison, given that the codes for LPs are obviously much more mature. However, semidefinite optimization solvers (as well as general convex optimization software) faces an apparently intrinsic problem of numerical stability.

If anybody else can confirm that I am not completely outdated about this, could you please add these remarks to the page, or let me know so that I can write the remarks myself? Thanks.

-- Marcelkcs ( talk) 15:20, 4 June 2011 (UTC)

This article would benefit from a section on applications of convex optimization, which might include concrete examples. Akshayka ( talk) 20:11, 14 January 2019 (UTC)

I would like to raise concerns about the recent edits to the article. Specifically, I find the claim that "the field of convex optimization also considers the far more difficult problem of maximizing convex functions" questionable. According to Boyd/Vandenberghe, which is considered a standard reference, a convex optimization problem has three additional requirements as compared to a general optimization problem, namely 1) the objective function must be convex (in the case of minimization), 2) the inequality constraint functions must be convex, and 3) the equality constraint functions must be affine (Section 4.2.1, pp. 136-). In a concave maximization problem, requirements 2) and 3) are fulfilled but the negative of the objective function (which is concave) is maximized. Since they are equivalent, both these problems are referred to as convex optimization problems (p. 137). A problem that does not fulfil these requirements, for example the problem of minimizing a convex function subject to an equality constraint that is not affine, is referred to as a nonlinear optimization problem and is treated with global optimization methods. The term "convex minimization" seems to focus only on requirement 1) above, therefore a reference would be needed to show that this is standard terminology. Isheden ( talk) 19:36, 26 October 2011 (UTC)

- Convex "optimization" is vague. Convex minimization is preferred for minimization problems. Advanced research, following Rockafellar, uses indicator functions or penalty representations of constraints. There is no need to set up a convex subset in the definition (although it has some pedagogical value, and is used by Polyak and Bertsekas's books).

- The Boyd/Vandenberghe book is good, especially for pedagogy and for its engineering applications. It also considers quasi-convex problems, etc. (Don't they mention quasi-convex constraint functions, also?)

- There is a theory of convex maximization, which should be covered in an article on convex optimization. I gave a reference to a theoretical result in Rockafellar, and I mentioned classic articles by Sahni and by Vavasis, et alia.

- Problems with non-convex constraints are not always solved by global optimization methods. Often they are approximately locally minimized with local methods or by global methods. Kiefer. Wolfowitz 19:53, 26 October 2011 (UTC)

I beg to differ. In Rockafellar (1997), Section 28 (p. 273), the term "ordinary convex program" is defined in a very similar way to Boyd's definition of a "convex optimization problem". Rockafellar even suggests a more rigorous definition on the same page as a tuple of functions satisfying the conditions given. Indicator functions and penalty representation are discussed in Boyd as well, see 11.2 for the use of the indicator function to make inequality constraints implicit in the objective, and 5.4.4 for the price interpretation of violating an inequality constraint. Perhaps what you mean is that the Lagrangian (see 5.1.1), which includes the constraints, is a convex function, and that "convex minimization" is about finding the minimum of this function (called the Lagrange dual function, see 5.1.2)? I think that what you call the theory of convex maximization would fit better in the convex analysis article, since this is a basic property of a convex function. The section "Generalized convexity" as it is formulated now would fit better in the article convex function. And you're right that local methods are often used when finding the global optimum is difficult, but the point of convex optimization is that it is in a certain sense the broadest class of nonlinear optimization problems for which any local optimum is the global optimum. Isheden ( talk) 21:51, 26 October 2011 (UTC)

- The article should focus on convex minimization. I suggested moving the article to convex minimization long ago.

- Convex minimization is important for non-convex problems (and their convexifications). See Chapter 14 of Lemaréchal & Hiriart-Urruty or Bertsekas, for example.

- An article entitled "convex optimization" should include "convex maximization" and should mention the NP-hardness of mildly non-convex quadratic problems. It should also include the discussion of unimodal and non-convex problems from c. 1973, which is often mis-attributed to Judin & Nemirovksy: I think the author was Ivanov, who explained why non-convex unimodal problems are worst-case intractible (information complexity). Kiefer. Wolfowitz 22:13, 26 October 2011 (UTC)

I think different interpretations of the word "convex" might cause confusion here. In the term convex optimization, "convex" refers to the optimization problem, not to the objective function. A convex optimization problem or convex program may be either a minimization or a maximization problem. As cited from the article nonlinear optimization: "If the objective function is concave (maximization problem), or convex (minimization problem) and the constraint set is convex, then the program is called convex and general methods from convex optimization can be used in most cases." For this reason, I am against moving the article to convex minimization. Moreover, "convex optimization" and "convex optimization problem" are much more commonly used than "convex minimization" and "convex minimization problem", respectively, as determined by searches on Google Books. I think the article name should be based on the more common use in the literature. I noted also that the term "convex programming" is even more commonly used. On the other hand, I agree that the article should discuss the importance of convex optimization for non-convex optimization problems. I also think it is a good idea to mention that even mildly non-convex problems are often NP-hard. Isheden ( talk) 10:46, 27 October 2011 (UTC)

- Although Isheden asked me to weigh in on this, it's clear to me that he's more on top of the subject than I am. When I have any questions about convex optimization I walk across the road to bug Stephen Boyd. As long as Stephen himself doesn't weigh in on this discussion I'm happy to serve as a go-between here. (I'm just the customer in this case, I use locally convex optimization myself in my work on modern climate change.) -- Vaughan Pratt ( talk) 05:52, 30 October 2011 (UTC)

- Hi Professor Pratt

- It's an honor that you write here. We are very happy that you have joined Wikipedia. :)

- Isheden has rightly clarified an established use of the "CO problem"; it would be worthwhile looking in Nemirovskii et alia, in Nesterov's book (also), in Lemaréchal et alia, and in Bertsekas also, to see how widespread be "CO problem". Also, a look in Papadimitriou & Steiglitz, in Smale/Blum/Shub et alia, and in Vavasis would be prudent, so you don't endorse something that will cause you trouble in the coffee lounge. ;)

- However, the article title is convex optimization, not CO problem. I think it should have a summary style, with sections providing an overview and links to the specialty articles. These sections would cover

- convex minimization (including the "CO problem"),

- duality formulations and methods (for convex and convex problems, following e.g. Lemaréchal or Bertsekas---and not your distinguished colleagues Gill, Murry and Wright!),

- extensions of convex analysis and optimization (especially quasi-convex a la Singer and Rubinov, or the theoretically interesting results on subgradient methods of Nesterov/Robinson/Murty/Kiwiel etc.)

- convex maximization,

- Sorry for sketching a plan for a lot of work. (It's late here.) Best regards, Kiefer. Wolfowitz 06:24, 30 October 2011 (UTC)

- However, the article title is convex optimization, not CO problem. I think it should have a summary style, with sections providing an overview and links to the specialty articles. These sections would cover

- There are many points to discuss and a lot of work ahead of us. However, let me start with the question about the article name. I have tried to search through the works by the authors you mentioned to find out what the most common term is. I limited the search to textbooks that have some kind of reference to convex optimization in the title, and are searchable using Google books, namely the books by Ben-Tal/Nemirovskiĭ, Nesterov/Nemirovskii, Nesterov, Hiriart-Urruty/Lemaréchal, Boyd/Vandenberghe and Rockafellar. I counted the number of occurrences of the phrases "convex programming", "convex optimization", "convex minimization", "concave maximization", "convex maximization", and "concave minimization", excluding hits with the next word being "problem" or "problems". The total number of hits turned out as 64, 156, 6, 0, 0, 0 (in the same order). In each book, "convex programming" and/or "convex optimization" clearly outnumbered "convex minimization". Therefore it seems to me that "convex optimization" or "convex programming" are more accepted terms than "convex minimization". Isheden ( talk) 23:58, 30 October 2011 (UTC)

What's the question here? Convex optimization is certainly a subject, as borne out by Isheden's numbers. How long has "convex minimization" been in Wikipedia? Could that account by itself for the 6 hits it got, vs the 156 hits for "convex optimization?"

Usually optimization minimizes something, such as your cost. It can always be turned into maximizing something, such as what you have left after subtracting your cost.

I'm having difficulty understanding these arguments for an article on something other than convex optimization. -- Vaughan Pratt ( talk) 07:45, 2 November 2011 (UTC)

- The search was carried out exclusively in some of the references suggested by User:Kiefer.Wolfowitz. The term "convex minimization" is sometimes used in the literature, although infrequently. As a next step, I intend to check the references for their definition of convex optimization. My understanding is that convex optimization is about solving convex optimization problems (as opposed to non-convex problems), which can be put in terms of either minimization or maximization (to be verified). Isheden ( talk) 13:42, 2 November 2011 (UTC)

- Look at the title of the book of HU & Claude Lemarechal, which I suggest is at least as authoritative as the California EE profs.

- Look at Variational analysis by Rockafellar and Wets, or the 2-volume monographs by Morkukhovich. The whole terminology of variational analysis, particularly epigraphical/cosmic convergence, relies on minimization being the standard.

-

Kiefer.

Wolfowitz

09:12, 3 November 2011 (UTC)

- No one is questioning that the standard form of a convex optimization problem is the one described in the article, based on minimization. What I do question is your claim that convex optimization includes maximization of convex functions.

- Also, the subject of this article is applied mathematics according to the classification. The theory from the other fields you mention, some of which I must admit I've never heard of, should be kept to a minimum in this article. The article must be accessible for people like me or User:Vaughan Pratt, who want to use the convex optimization framework in other fields. Detailed discussions of theoretical concepts might fit better in the convex analysis article or in an article on variational analysis. Isheden ( talk) 09:53, 3 November 2011 (UTC)

- Isheden,

- You are making demands that have no basis in Wikipedia policy, and in particular your argument that this article is classified as applied mathematics violates the policy that WP is not a reliable source (if I understood your suggestion).

- I don't know what Wikipedia policy says, but it seems natural to me that convex analysis is more theoretical in nature and convex optimization more applied.

- Convex optimization embraces more than the contents of the book by the California EE profs. You should examine Ekeland & Teman and also Ioffe & Tikhomirov, for starters, or Jon Borwein's & Mordukhovich's books for more recent treatments of convex minimization.

-

Kiefer.

Wolfowitz

11:25, 3 February 2012 (UTC)

- I don't know what books you are referring to here. What are the titles? Most references I've found do not specify what the scope of convex optimization is other than the solving convex optimization problems, which in turn are characterized as problems that are tractable to solve computationally. This does not apply to maximization of convex functions in general, unless all points on the boundary can be checked. Or have I missed something here? Isheden ( talk) 23:38, 10 February 2012 (UTC)

- Isheden, this has been going on for months, and I'm growing tired. Let me state bluntly that you don't know what you are talking about. You are stating a "characterization" of tractable problems that is just simply false. You should look at the papers of Nesterov and Kiwiel. I've told you this before.

Kiefer.

Wolfowitz

04:44, 11 February 2012 (UTC)

- Since this discussion has ceased to be constructive, I think it's better to make a cut here and concentrate on expanding the article. Isheden ( talk) 09:40, 11 February 2012 (UTC)

- Isheden, this has been going on for months, and I'm growing tired. Let me state bluntly that you don't know what you are talking about. You are stating a "characterization" of tractable problems that is just simply false. You should look at the papers of Nesterov and Kiwiel. I've told you this before.

Kiefer.

Wolfowitz

04:44, 11 February 2012 (UTC)

- Faigle, Kern, and another author treat convex maximization in the section on convex optimization. (I believe both Faigle and Kern have been elected to the governing board of MPS/MOS.)

- Boyd and Vandenberghe's Convex Optimization treats convex maximization in problem (8.16).

I trust the "factual accuracy" bs is over. Kiefer. Wolfowitz 12:04, 3 February 2012 (UTC)

- No, unfortunately that does not settle the discussion (although it is more about terminology than factual accuracy). I don't understand what Boyd/Vandenberghe problem 8.16 has to do with this, and while the result on convex maximization can be mentioned further down in the article, I'm not convinced that referring to it as convex optimization represents the majority view. In case you are interested in Wikipedia policies, please have a look at

WP:Wikipedia is not about winning and

WP:UNDUE. I believe additional opinions on this from other people would be helpful.

Isheden (

talk)

21:15, 3 February 2012 (UTC)

- Let me know when you find a defined population from which a survey sample was taken, so I might try to understand what you're possibly thinking about with "majority view", an irrelevant concept on WP. I provided a reliable high quality source, which settles the matter of the sentence to which you have been objecting, according to WP policy. Kiefer. Wolfowitz 04:40, 11 February 2012 (UTC)

I'm confused. The theory section states:

- if a local minimum exists, then it is a global minimum.

- the set of all (global) minima is convex.

- for each strictly convex function, if the function has a minimum, then the minimum is unique.

Technically you can have a set of one, but the middle line above loosely implies there can be multiple global minima, which is contradicted by the final statement. Can whatever is meant be better expressed please? Aarghdvaark ( talk) 06:58, 30 August 2012 (UTC)

- The third bullet point applies only if it's a strictly convex function. — Arthur Rubin (talk) 07:58, 30 August 2012 (UTC)

The page is confusing because it seems to be using "minimum" to sometimes refer to the "minimizer". The minimum is a scalar value in the range of the objective function. The minimizers are the points in the domain at which the minima are reached. The global minimum for any optimization problem, or function, or set, is (assuming it exists) unique by definition. Also discussion the set of all local minima, while perhaps ok (though is it much use to talk of its convexity?) is not as interesting as the set of minimizers where those local minima are reached. — Preceding unsigned comment added by 129.81.103.111 ( talk) 17:50, 17 July 2015 (UTC)

The applications section is quite poorly written. Almost all of its citations point to a single set of slides of Boyd et al., but those examples prove only that some problems can be modeled as convex optimization problems, not that convex optimization is actually used in practice to solve any of these problems. I've updated the phrasing appropriately in that section, but I would love it if someone could provide more concrete examples of convex optimization used in real world problems (not just in slides or academic papers). — Preceding unsigned comment added by J2kun ( talk • contribs) 19:35, 21 January 2024 (UTC)