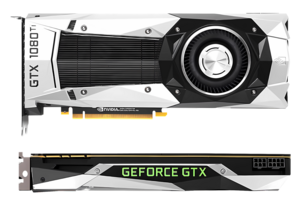

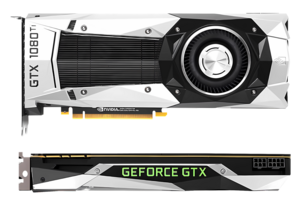

The NVIDIA GeForce GTX 1080 Ti (GP102-350-K1-A1), part of the GeForce 10 series of graphics cards, was the final major iteration featuring the Pascal microarchitecture.

| Launched | May 27, 2016 |
|---|---|
| Designed by | Nvidia |
| Manufactured by | TSMC, Samsung |
| Fabrication process | TSMC 16 nm FinFET, Samsung 14 nm FinFET |
| Codename(s) | GP10x |
| Product Series | |
| Desktop | |
| Professional/workstation | |
| Server/datacenter | |
| Specifications | |
| L1 cache | 24 KB (per SM) |
| L2 cache | 256 KB to 4 MB |
| Memory support | |
| PCIe support | PCIe 3.0 |
| Supported Graphics APIs | |
| DirectX | DirectX 12 (12.1) |
| Direct3D | Direct3D 12.0 |
| Shader Model | Shader Model 6.7 |
| OpenCL | OpenCL 3.0 |
| OpenGL | OpenGL 4.6 |
| CUDA | Compute Capability 6.0 (GP100), 6.1 (GP104) |
| Vulkan | Vulkan 1.3 |
| Media Engine | |
| Encode codecs | |
| Decode codecs | |
| Color bit-depth | |
| Encoder(s) supported | NVENC |
| Display outputs | |
| History | |
| Predecessor | Maxwell |
| Successor | Volta (HPC), Turing (consumer) |

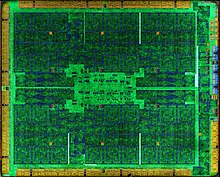

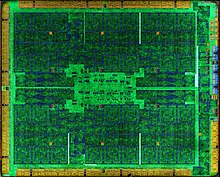

Pascal is the codename for a GPU microarchitecture developed by Nvidia as the successor to the Maxwell architecture. It was first introduced on April 5, 2016, with the Tesla P100 (GP100), and is primarily used in the GeForce 10 series, starting with the GeForce GTX 1080 and GTX 1070 (both using the GP104 GPU), which were released on May 27, 2016, and June 10, 2016, respectively. Pascal was manufactured using TSMC's 16 nm FinFET process, [1] and later Samsung's 14 nm FinFET process. [2]

The architecture is named after the 17th century French mathematician and physicist, Blaise Pascal.

In April 2019, Nvidia enabled a software implementation of DirectX Raytracing on Pascal-based cards, starting with the GTX 1060 6 GB, and on the GeForce 16 series cards. Until then, the feature had been reserved for the Turing-based RTX series. [3] [4]

Details

In March 2014, Nvidia announced that the successor to Maxwell would be the Pascal microarchitecture. The first GeForce cards based on it were announced on May 6, 2016, and released on May 27 of the same year. The Tesla P100 (GP100 chip) uses a different version of the Pascal architecture from the GTX GPUs (GP104 chip); the shader units in GP104 have a Maxwell-like design. [5]

Architectural improvements of the GP100 architecture include the following: [6] [7] [8]

- In Pascal, an SM (streaming multiprocessor) consists of either 64 or 128 CUDA cores, depending on whether it is GP100 or GP104. Maxwell had 128 CUDA cores per SM; Kepler had 192, Fermi 32 and Tesla 8. The GP100 SM is partitioned into two processing blocks, each having 32 single-precision CUDA cores, an instruction buffer, a warp scheduler, 2 texture mapping units and 2 dispatch units.

- CUDA Compute Capability 6.0.

- High Bandwidth Memory 2 — some cards feature 16 GiB HBM2 in four stacks with a total bus width of 4096 bits and a memory bandwidth of 720 GB/s.

- Unified memory — a memory architecture where the CPU and GPU can access both main system memory and memory on the graphics card with the help of a technology called "Page Migration Engine".

- NVLink — a high-bandwidth bus between the CPU and GPU, and between multiple GPUs. Allows much higher transfer speeds than those achievable by using PCI Express; estimated to provide between 80 and 200 GB/s. [9] [10]

- 16-bit (FP16) floating-point operations (colloquially "half precision") can be executed at twice the rate of 32-bit floating-point operations ("single precision"), [11] and 64-bit floating-point operations (colloquially "double precision") are executed at half the rate of 32-bit floating-point operations. [12]

- More registers — twice the amount of registers per CUDA core compared to Maxwell.

- More shared memory.

- Dynamic load balancing scheduling system. [13] This allows the scheduler to dynamically adjust the amount of the GPU assigned to multiple tasks, ensuring that the GPU remains saturated with work except when there is no more work that can safely be distributed. [13] Nvidia has therefore safely enabled asynchronous compute in Pascal's driver. [13]

- Instruction-level and thread-level preemption. [14]
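The HBM2 figures in the list above can be sanity-checked with simple arithmetic: total bandwidth is the bus width times the per-pin data rate, divided by 8 bits per byte. The following is a minimal sketch in Python that uses only the 4096-bit bus width, four stacks and 720 GB/s quoted above. The function names are illustrative, and treating 1 GB/s as 10^9 bytes per second is an assumption.

```python
def per_pin_rate_gbit(bandwidth_gb_s: float, bus_width_bits: int) -> float:
    """Per-pin data rate (Gbit/s) implied by a total bandwidth and bus width."""
    return bandwidth_gb_s * 8 / bus_width_bits


def total_bandwidth_gb_s(pin_rate_gbit: float, bus_width_bits: int) -> float:
    """Total memory bandwidth (GB/s) for a given per-pin rate and bus width."""
    return pin_rate_gbit * bus_width_bits / 8


# Figures quoted in the text: 4096-bit bus over four stacks, 720 GB/s.
BUS_WIDTH_BITS = 4096
STACKS = 4

bits_per_stack = BUS_WIDTH_BITS // STACKS
hbm2_pin_rate = per_pin_rate_gbit(720, BUS_WIDTH_BITS)

print(f"{bits_per_stack} bits per stack, {hbm2_pin_rate} Gbit/s per pin")
```

Under these assumptions, each stack contributes a 1024-bit interface, and the quoted bandwidth corresponds to roughly 1.4 Gbit/s per pin.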

Architectural improvements of the GP104 architecture include the following: [5]

- CUDA Compute Capability 6.1.

- GDDR5X — new memory standard supporting 10Gbit/s data rates, updated memory controller. [15]

- Simultaneous Multi-Projection - generating multiple projections of a single geometry stream, as it enters the SMP engine from upstream shader stages. [16]

- DisplayPort 1.4, HDMI 2.0b.

- Fourth generation Delta Color Compression.

- Enhanced SLI Interface — SLI interface with higher bandwidth compared to the previous versions.

- PureVideo Feature Set H hardware video decoding: HEVC Main10 (10-bit), HEVC Main12 (12-bit) and VP9.

- HDCP 2.2 support for 4K DRM protected content playback and streaming (Maxwell GM200 and GM204 lack HDCP 2.2 support, GM206 supports HDCP 2.2). [17]

- NVENC HEVC Main10 (10-bit) hardware encoding.

- GPU Boost 3.0.

- Instruction-level preemption. [14] In graphics tasks, the driver restricts preemption to the pixel-level, because pixel tasks typically finish quickly and the overhead costs of doing pixel-level preemption are lower than instruction-level preemption (which is expensive). [14] Compute tasks get thread-level or instruction-level preemption, [14] because they can take longer times to finish and there are no guarantees on when a compute task finishes. Therefore the driver enables the expensive instruction-level preemption for these tasks. [14]

Overview

Graphics Processor Cluster

A chip is partitioned into Graphics Processor Clusters (GPCs). For the GP104 chips, a GPC encompasses 5 SMs.

Streaming Multiprocessor "Pascal"

A "Streaming Multiprocessor" is analogous to AMD's Compute Unit. An SM encompasses 128 single-precision ALUs ("CUDA cores") on GP104 chips and 64 single-precision ALUs on GP100 chips. While all CU versions consist of 64 shader processors (i.e. 4 SIMD Vector Units, each 16 lanes wide), Nvidia experimented with very different numbers of CUDA cores:

- On Tesla, 1 SM combines 8 single-precision (FP32) shader processors

- On Fermi, 1 SM combines 32 single-precision (FP32) shader processors

- On Kepler, 1 SM combines 192 single-precision (FP32) shader processors and 64 double-precision (FP64) units (on GK110 GPUs)

- On Maxwell, 1 SM combines 128 single-precision (FP32) shader processors

- On Pascal, it depends on the chip:

  - On GP100, 1 SM combines 64 single-precision (FP32) shader processors and 32 double-precision (FP64) units, providing a 2:1 ratio of single- to double-precision throughput. GP100 uses more flexible FP32 cores that can process either one single-precision number or two half-precision numbers in a two-element vector. [18] This is intended to better serve machine learning tasks.

  - On GP104, 1 SM combines 128 single-precision ALUs, 4 double-precision ALUs (providing a 32:1 ratio), and one half-precision ALU that operates on a vector of two half-precision floats, executing the same instruction on both. This provides a 64:1 ratio of single- to half-precision throughput when the same instruction is used on both elements.
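The throughput ratios quoted for Pascal follow directly from the per-SM unit counts. A minimal sketch in Python, using only the counts given above; the dictionary layout and function names are illustrative assumptions, not Nvidia terminology:

```python
from fractions import Fraction

# Per-SM execution unit counts as stated in the text.
SM_UNITS = {
    "GP100": {"fp32": 64, "fp64": 32},
    "GP104": {"fp32": 128, "fp64": 4, "fp16x2": 1},
}


def fp32_to_fp64_ratio(chip: str) -> Fraction:
    """Ratio of single- to double-precision units in one SM."""
    units = SM_UNITS[chip]
    return Fraction(units["fp32"], units["fp64"])


def gp104_fp32_to_fp16_ratio() -> Fraction:
    """Ratio of FP32 lanes to FP16 lanes in one GP104 SM.

    The single half-precision ALU handles a two-element vector,
    so it counts as two FP16 lanes when both elements are used.
    """
    units = SM_UNITS["GP104"]
    fp16_lanes = units["fp16x2"] * 2
    return Fraction(units["fp32"], fp16_lanes)


print(fp32_to_fp64_ratio("GP100"))   # 2
print(fp32_to_fp64_ratio("GP104"))   # 32
print(gp104_fp32_to_fp16_ratio())    # 64
```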

Polymorph-Engine 4.0

The Polymorph Engine version 4.0 is the unit responsible for tessellation. It corresponds functionally to AMD's Geometric Processor. It has been moved from the shader module to the TPC so that one Polymorph Engine can feed multiple SMs within the TPC. [19]

Chips

- GP100: Nvidia's Tesla P100 GPU accelerator is targeted at GPGPU applications such as FP64 double precision compute and deep learning training that uses FP16. It uses HBM2 memory. [20] Quadro GP100 also uses the GP100 GPU.

- GP102: This GPU is used in the Titan Xp, [21] Titan X Pascal [22] and the GeForce GTX 1080 Ti. It is also used in the Quadro P6000 [23] & Tesla P40. [24]

- GP104: This GPU is used in the GeForce GTX 1070, GTX 1070 Ti, GTX 1080, and some GTX 1060 6 GB cards. The GTX 1070 has 15/20 and the GTX 1070 Ti has 19/20 of its SMs enabled; both use GDDR5 memory. The GTX 1080 is a fully unlocked chip and uses GDDR5X memory. Some GTX 1060 6 GB cards use GP104 with 10/20 SMs enabled and GDDR5X memory. [25] It is also used in the Quadro P5000, Quadro P4000, Quadro P3200 (mobile applications) and Tesla P4.

- GP106: This GPU is used in the GeForce GTX 1060 with GDDR5 [26] memory. [27] [28] It is also used in the Quadro P2000.

- GP107: This GPU is used in the GeForce GTX 1050 and 1050 Ti. It is also used in the Quadro P1000, Quadro P600, Quadro P620 & Quadro P400.

- GP108: This GPU is used in the GeForce GT 1010 and GeForce GT 1030.
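The SM fractions listed for GP104 translate into CUDA core counts once combined with the 128 CUDA cores per GP104 SM and the 5 SMs per GPC stated elsewhere in this article. A minimal sketch in Python; the names are illustrative, and the core counts are derived here from those figures rather than quoted from a specification sheet:

```python
GP104_TOTAL_SMS = 20       # denominator of the SM fractions in the list above
GP104_CORES_PER_SM = 128   # single-precision CUDA cores per GP104 SM
GP104_SMS_PER_GPC = 5      # SMs per Graphics Processor Cluster on GP104

# Enabled SMs per product, taken from the fractions in the list above.
ENABLED_SMS = {
    "GTX 1080": 20,
    "GTX 1070 Ti": 19,
    "GTX 1070": 15,
    "GTX 1060 6 GB (GP104 variant)": 10,
}


def cuda_cores(enabled_sms: int, cores_per_sm: int = GP104_CORES_PER_SM) -> int:
    """CUDA core count implied by the number of enabled SMs."""
    return enabled_sms * cores_per_sm


gpc_count = GP104_TOTAL_SMS // GP104_SMS_PER_GPC

for product, sms in ENABLED_SMS.items():
    print(f"{product}: {sms}/{GP104_TOTAL_SMS} SMs, {cuda_cores(sms)} CUDA cores")
```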

| | GK104 | GK110 | GM204 (GTX 970) | GM204 (GTX 980) | GM200 | GP104 | GP100 |
|---|---|---|---|---|---|---|---|
| Dedicated texture cache per SM | 48 KiB | N/A | N/A | N/A | N/A | N/A | N/A |
| Texture (graphics or compute) or read-only data (compute only) cache per SM | N/A | 48 KiB [29] | N/A | N/A | N/A | N/A | N/A |
| Programmer-selectable shared memory/L1 partitions per SM | 48 KiB shared memory + 16 KiB L1 cache (default) [30] | 48 KiB shared memory + 16 KiB L1 cache (default) [30] | N/A | N/A | N/A | N/A | N/A |
| | 32 KiB shared memory + 32 KiB L1 cache [30] | 32 KiB shared memory + 32 KiB L1 cache [30] | N/A | N/A | N/A | N/A | N/A |
| | 16 KiB shared memory + 48 KiB L1 cache [30] | 16 KiB shared memory + 48 KiB L1 cache [30] | N/A | N/A | N/A | N/A | N/A |
| Unified L1 cache/texture cache per SM | N/A | N/A | 48 KiB [31] | 48 KiB [31] | 48 KiB [31] | 48 KiB [31] | 24 KiB [31] |
| Dedicated shared memory per SM | N/A | N/A | 96 KiB [31] | 96 KiB [31] | 96 KiB [31] | 96 KiB [31] | 64 KiB [31] |
| L2 cache per chip | 512 KiB [31] | 1536 KiB [31] | 1792 KiB [32] | 2048 KiB [32] | 3072 KiB [31] | 2048 KiB [31] | 4096 KiB [31] |

Performance

The theoretical single-precision processing power of a Pascal GPU in GFLOPS is computed as 2 (operations per FMA instruction per CUDA core per cycle) × number of CUDA cores × core clock speed (in GHz).

The theoretical double-precision processing power of a Pascal GPU is 1/2 of the single-precision performance on GP100, and 1/32 of it on GP102, GP104, GP106, GP107 and GP108.

The theoretical half-precision processing power of a Pascal GPU is 2× the single-precision performance on GP100 [12] and 1/64 of it on GP104, GP106, GP107 and GP108. [18]
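The formulas above can be put together as follows. This is a minimal sketch in Python, not an official calculator: the rate tables restate the ratios from the text, the function names are illustrative, and the 1.5 GHz clock in the example is an assumed round number rather than the specification of any particular card.

```python
# Throughput relative to FP32, as stated in the text above.
FP64_RATE = {"GP100": 1 / 2, "GP102": 1 / 32, "GP104": 1 / 32,
             "GP106": 1 / 32, "GP107": 1 / 32, "GP108": 1 / 32}
FP16_RATE = {"GP100": 2.0, "GP104": 1 / 64, "GP106": 1 / 64,
             "GP107": 1 / 64, "GP108": 1 / 64}


def fp32_gflops(cuda_cores: int, clock_ghz: float) -> float:
    """Theoretical FP32 GFLOPS: 2 operations per FMA x cores x clock (GHz)."""
    return 2 * cuda_cores * clock_ghz


def fp64_gflops(chip: str, cuda_cores: int, clock_ghz: float) -> float:
    """Theoretical FP64 GFLOPS, scaled from FP32 by the chip's ratio."""
    return fp32_gflops(cuda_cores, clock_ghz) * FP64_RATE[chip]


def fp16_gflops(chip: str, cuda_cores: int, clock_ghz: float) -> float:
    """Theoretical FP16 GFLOPS, scaled from FP32 by the chip's ratio."""
    return fp32_gflops(cuda_cores, clock_ghz) * FP16_RATE[chip]


# Example: a fully enabled GP104 (20 SMs x 128 cores) at an ASSUMED 1.5 GHz.
cores = 20 * 128
print(fp32_gflops(cores, 1.5))           # 7680.0
print(fp64_gflops("GP104", cores, 1.5))  # 240.0
print(fp16_gflops("GP104", cores, 1.5))  # 120.0
```

Real cards run at varying boost clocks, so figures computed this way are upper bounds for a given clock rather than measured performance.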

Successor

The Pascal architecture was succeeded in 2017 by Volta in the HPC, cloud computing, and self-driving car markets, and in 2018 by Turing in the consumer and business market. [33]

See also

- List of eponyms of Nvidia GPU microarchitectures

- List of Nvidia graphics processing units

- Nvidia NVDEC

- Nvidia NVENC

References

1. "NVIDIA 7nm Next-Gen-GPUs To Be Built By TSMC". Wccftech. June 24, 2018. Retrieved July 6, 2019.

2. "Samsung to Optical-Shrink NVIDIA "Pascal" to 14 nm". Retrieved August 13, 2016.

3. "Accelerating The Real-Time Ray Tracing Ecosystem: DXR For GeForce RTX and GeForce GTX". NVIDIA.

4. "Ray Tracing Comes to Nvidia GTX GPUs: Here's How to Enable It". April 11, 2019.

5. "NVIDIA GeForce GTX 1080" (PDF). International.download.nvidia.com. Retrieved September 15, 2016.

6. Gupta, Sumit (March 21, 2014). "NVIDIA Updates GPU Roadmap; Announces Pascal". Blogs.nvidia.com. Retrieved March 25, 2014.

7. "Parallel Forall". NVIDIA Developer Zone. Devblogs.nvidia.com. Archived from the original on March 26, 2014. Retrieved March 25, 2014.

8. "NVIDIA Tesla P100" (PDF). International.download.nvidia.com. Retrieved September 15, 2016.

9. "Inside Pascal: NVIDIA's Newest Computing Platform". April 5, 2016.

10. Foley, Denis (March 25, 2014). "NVLink, Pascal and Stacked Memory: Feeding the Appetite for Big Data". nvidia.com. Retrieved July 7, 2014.

11. "NVIDIA's Next-Gen Pascal GPU Architecture to Provide 10X Speedup for Deep Learning Apps". The Official NVIDIA Blog. Retrieved March 23, 2015.

12. Smith, Ryan (April 5, 2016). "NVIDIA Announces Tesla P100 Accelerator - Pascal GP100 Power for HPC". AnandTech. Retrieved May 27, 2016. Quote: "Each of those SMs also contains 32 FP64 CUDA cores - giving us the 1/2 rate for FP64 - and new to the Pascal architecture is the ability to pack 2 FP16 operations inside a single FP32 CUDA core under the right circumstances".

13. Smith, Ryan (July 20, 2016). "The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation". AnandTech. p. 9. Retrieved July 21, 2016.

14. Smith, Ryan (July 20, 2016). "The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation". AnandTech. p. 10. Retrieved July 21, 2016.

15. "GTX 1080 Graphics Card". GeForce. Retrieved September 15, 2016.

16. Carbotte, Kevin (May 17, 2016). "Nvidia GeForce GTX 1080 Simultaneous Multi-Projection & Async Compute". Tomshardware.com. Retrieved September 15, 2016.

17. "Nvidia Pascal HDCP 2.2". Nvidia Hardware Page. Retrieved May 8, 2016.

18. Smith, Ryan (July 20, 2016). "The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation". AnandTech. p. 5. Retrieved July 21, 2016.

19. Smith, Ryan (July 20, 2016). "The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation". AnandTech. p. 4. Retrieved July 21, 2016.

20. Harris, Mark (April 5, 2016). "Inside Pascal: NVIDIA's Newest Computing Platform". Parallel Forall. Nvidia. Retrieved June 3, 2016.

21. "NVIDIA TITAN Xp Graphics Card with Pascal Architecture". NVIDIA.

22. "NVIDIA TITAN X Graphics Card with Pascal". GeForce. Retrieved September 15, 2016.

23. "New Quadro Graphics Built on Pascal Architecture". NVIDIA. Retrieved September 15, 2016.

24. "Accelerating Data Center Workloads with GPUs". NVIDIA. Retrieved September 15, 2016.

25. Liu, Zhiye (October 22, 2018). "Nvidia GeForce GTX 1060 Gets GDDR5X in Fifth Makeover". Tom's Hardware. Retrieved February 2, 2024.

26. "NVIDIA GeForce 10 Series Graphics Cards". NVIDIA.

27. "NVIDIA GeForce GTX 1060 to be released on July 7th". VideoCardz.com. June 29, 2016. Retrieved September 15, 2016.

28. "GTX 1060 Graphics Cards". GeForce. Retrieved September 15, 2016.

29. Smith, Ryan (November 12, 2012). "NVIDIA Launches Tesla K20 & K20X: GK110 Arrives At Last". AnandTech. p. 3. Retrieved July 24, 2016.

30. Nvidia (September 1, 2015). "CUDA C Programming Guide". Retrieved July 24, 2016.

31. Triolet, Damien (May 24, 2016). "Nvidia GeForce GTX 1080, le premier GPU 16nm en test !". Hardware.fr (in French). p. 2. Retrieved July 24, 2016.

32. Smith, Ryan (January 26, 2015). "GeForce GTX 970: Correcting The Specs & Exploring Memory Allocation". AnandTech. p. 1. Retrieved July 24, 2016.

33. "NVIDIA Turing Release Date". Techradar. February 2, 2021.
